mirror of

https://github.com/qurator-spk/eynollah.git

synced 2026-06-02 11:09:16 +02:00

Merge branch 'integrate-training-from-sbb_pixelwise_segmentation' of https://github.com/qurator-spk/eynollah into integrate-training-from-sbb_pixelwise_segmentation

This commit is contained in:

commit

95bb5908bb

15 changed files with 78 additions and 287 deletions

|

|

@ -22,7 +22,7 @@ Added:

|

|||

Fixed:

|

||||

|

||||

* allow empty imports for optional dependencies

|

||||

* avoid Numpy warnings (empty slices etc)

|

||||

* avoid Numpy warnings (empty slices etc.)

|

||||

* remove deprecated Numpy types

|

||||

* binarization CLI: make `dir_in` usable again

|

||||

|

||||

|

|

|

|||

50

README.md

50

README.md

|

|

@ -11,23 +11,24 @@

|

|||

|

||||

|

||||

## Features

|

||||

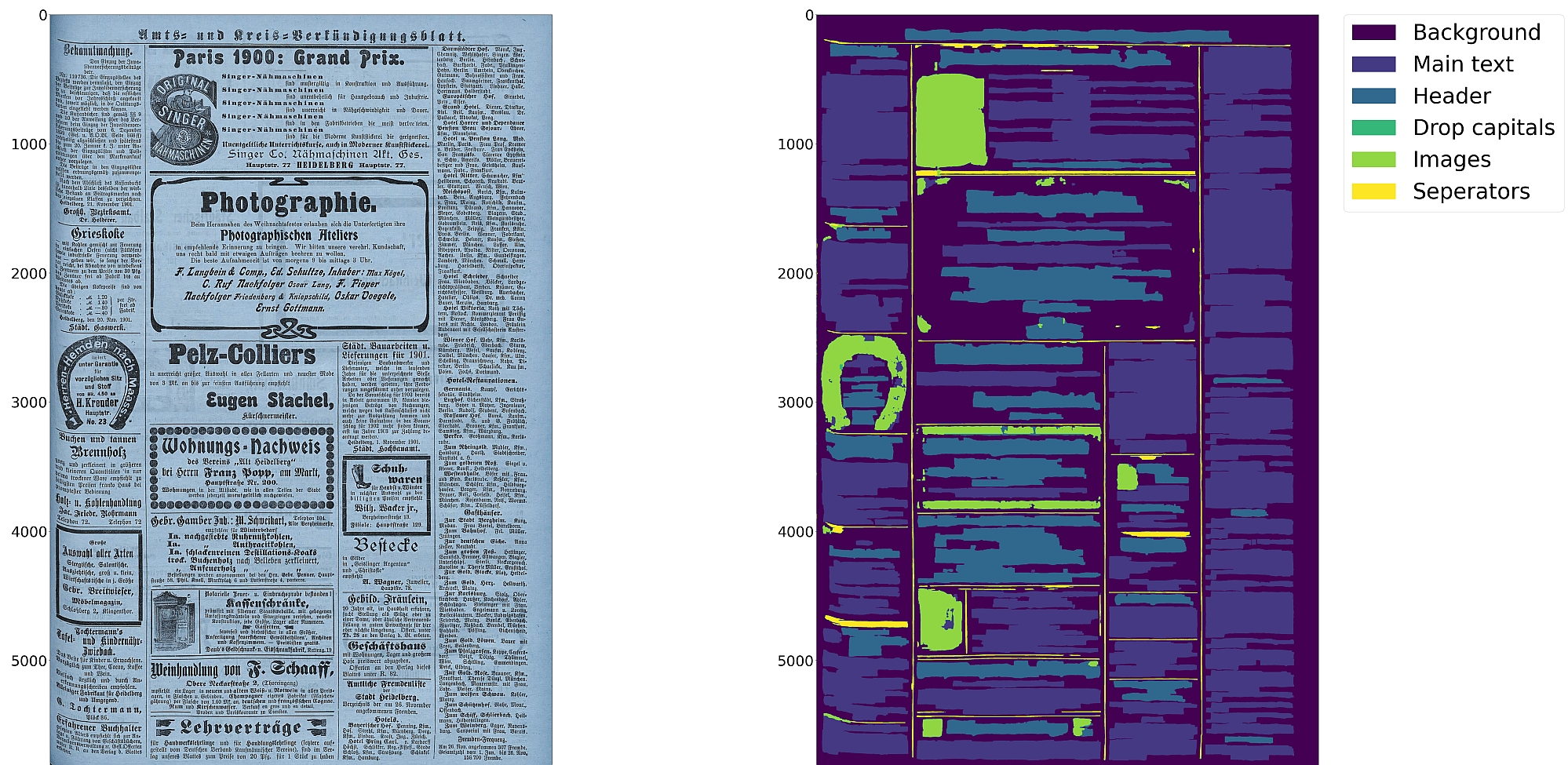

* Support for up to 10 segmentation classes:

|

||||

* Support for 10 distinct segmentation classes:

|

||||

* background, [page border](https://ocr-d.de/en/gt-guidelines/trans/lyRand.html), [text region](https://ocr-d.de/en/gt-guidelines/trans/lytextregion.html#textregionen__textregion_), [text line](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_TextLineType.html), [header](https://ocr-d.de/en/gt-guidelines/trans/lyUeberschrift.html), [image](https://ocr-d.de/en/gt-guidelines/trans/lyBildbereiche.html), [separator](https://ocr-d.de/en/gt-guidelines/trans/lySeparatoren.html), [marginalia](https://ocr-d.de/en/gt-guidelines/trans/lyMarginalie.html), [initial](https://ocr-d.de/en/gt-guidelines/trans/lyInitiale.html), [table](https://ocr-d.de/en/gt-guidelines/trans/lyTabellen.html)

|

||||

* Support for various image optimization operations:

|

||||

* cropping (border detection), binarization, deskewing, dewarping, scaling, enhancing, resizing

|

||||

* Text line segmentation to bounding boxes or polygons (contours) including for curved lines and vertical text

|

||||

* Detection of reading order (left-to-right or right-to-left)

|

||||

* Textline segmentation to bounding boxes or polygons (contours) including for curved lines and vertical text

|

||||

* Text recognition (OCR) using either CNN-RNN or Transformer models

|

||||

* Detection of reading order (left-to-right or right-to-left) using either heuristics or trainable models

|

||||

* Output in [PAGE-XML](https://github.com/PRImA-Research-Lab/PAGE-XML)

|

||||

* [OCR-D](https://github.com/qurator-spk/eynollah#use-as-ocr-d-processor) interface

|

||||

|

||||

:warning: Development is currently focused on achieving the best possible quality of results for a wide variety of

|

||||

historical documents and therefore processing can be very slow. We aim to improve this, but contributions are welcome.

|

||||

:warning: Development is focused on achieving the best quality of results for a wide variety of historical

|

||||

documents and therefore processing can be very slow. We aim to improve this, but contributions are welcome.

|

||||

|

||||

## Installation

|

||||

|

||||

Python `3.8-3.11` with Tensorflow `<2.13` on Linux are currently supported.

|

||||

|

||||

For (limited) GPU support the CUDA toolkit needs to be installed.

|

||||

For (limited) GPU support the CUDA toolkit needs to be installed. A known working config is CUDA `11` with cuDNN `8.6`.

|

||||

|

||||

You can either install from PyPI

|

||||

|

||||

|

|

@ -56,26 +57,27 @@ make install EXTRAS=OCR

|

|||

|

||||

Pretrained models can be downloaded from [zenodo](https://zenodo.org/records/17194824) or [huggingface](https://huggingface.co/SBB?search_models=eynollah).

|

||||

|

||||

For documentation on methods and models, have a look at [`models.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/models.md).

|

||||

For documentation on models, have a look at [`models.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/models.md).

|

||||

Model cards are also provided for our trained models.

|

||||

|

||||

## Training

|

||||

|

||||

In case you want to train your own model with Eynollah, have see the

|

||||

In case you want to train your own model with Eynollah, see the

|

||||

documentation in [`train.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/train.md) and use the

|

||||

tools in the [`train` folder](https://github.com/qurator-spk/eynollah/tree/main/train).

|

||||

|

||||

## Usage

|

||||

|

||||

Eynollah supports five use cases: layout analysis (segmentation), binarization,

|

||||

image enhancement, text recognition (OCR), and (trainable) reading order detection.

|

||||

image enhancement, text recognition (OCR), and reading order detection.

|

||||

|

||||

### Layout Analysis

|

||||

|

||||

The layout analysis module is responsible for detecting layouts, identifying text lines, and determining reading order

|

||||

using both heuristic methods or a machine-based reading order detection model.

|

||||

The layout analysis module is responsible for detecting layout elements, identifying text lines, and determining reading

|

||||

order using either heuristic methods or a [pretrained reading order detection model](https://github.com/qurator-spk/eynollah#machine-based-reading-order).

|

||||

|

||||

Note that there are currently two supported ways for reading order detection: either as part of layout analysis based

|

||||

on image input, or, currently under development, for given layout analysis results based on PAGE-XML data as input.

|

||||

Reading order detection can be performed either as part of layout analysis based on image input, or, currently under

|

||||

development, based on pre-existing layout analysis results in PAGE-XML format as input.

|

||||

|

||||

The command-line interface for layout analysis can be called like this:

|

||||

|

||||

|

|

@ -108,15 +110,15 @@ The following options can be used to further configure the processing:

|

|||

| `-sp <directory>` | save cropped page image to this directory |

|

||||

| `-sa <directory>` | save all (plot, enhanced/binary image, layout) to this directory |

|

||||

|

||||

If no option is set, the tool performs layout detection of main regions (background, text, images, separators

|

||||

If no further option is set, the tool performs layout detection of main regions (background, text, images, separators

|

||||

and marginals).

|

||||

The best output quality is produced when RGB images are used as input rather than greyscale or binarized images.

|

||||

The best output quality is achieved when RGB images are used as input rather than greyscale or binarized images.

|

||||

|

||||

### Binarization

|

||||

|

||||

The binarization module performs document image binarization using pretrained pixelwise segmentation models.

|

||||

|

||||

The command-line interface for binarization of single image can be called like this:

|

||||

The command-line interface for binarization can be called like this:

|

||||

|

||||

```sh

|

||||

eynollah binarization \

|

||||

|

|

@ -127,16 +129,16 @@ eynollah binarization \

|

|||

|

||||

### OCR

|

||||

|

||||

The OCR module performs text recognition from images using two main families of pretrained models: CNN-RNN–based OCR and Transformer-based OCR.

|

||||

The OCR module performs text recognition using either a CNN-RNN model or a Transformer model.

|

||||

|

||||

The command-line interface for ocr can be called like this:

|

||||

The command-line interface for OCR can be called like this:

|

||||

|

||||

```sh

|

||||

eynollah ocr \

|

||||

-i <single image file> | -di <directory containing image files> \

|

||||

-dx <directory of xmls> \

|

||||

-o <output directory> \

|

||||

-m <path to directory containing model files> | --model_name <path to specific model> \

|

||||

-m <directory containing model files> | --model_name <path to specific model> \

|

||||

```

|

||||

|

||||

### Machine-based-reading-order

|

||||

|

|

@ -172,22 +174,20 @@ If the input file group is PAGE-XML (from a previous OCR-D workflow step), Eynol

|

|||

(because some other preprocessing step was in effect like `denoised`), then

|

||||

the output PAGE-XML will be based on that as new top-level (`@imageFilename`)

|

||||

|

||||

ocrd-eynollah-segment -I OCR-D-XYZ -O OCR-D-SEG -P models eynollah_layout_v0_5_0

|

||||

ocrd-eynollah-segment -I OCR-D-XYZ -O OCR-D-SEG -P models eynollah_layout_v0_5_0

|

||||

|

||||

Still, in general, it makes more sense to add other workflow steps **after** Eynollah.

|

||||

In general, it makes more sense to add other workflow steps **after** Eynollah.

|

||||

|

||||

There is also an OCR-D processor for the binarization:

|

||||

There is also an OCR-D processor for binarization:

|

||||

|

||||

ocrd-sbb-binarize -I OCR-D-IMG -O OCR-D-BIN -P models default-2021-03-09

|

||||

|

||||

#### Additional documentation

|

||||

|

||||

Please check the [wiki](https://github.com/qurator-spk/eynollah/wiki).

|

||||

Additional documentation is available in the [docs](https://github.com/qurator-spk/eynollah/tree/main/docs) directory.

|

||||

|

||||

## How to cite

|

||||

|

||||

If you find this tool useful in your work, please consider citing our paper:

|

||||

|

||||

```bibtex

|

||||

@inproceedings{hip23rezanezhad,

|

||||

title = {Document Layout Analysis with Deep Learning and Heuristics},

|

||||

|

|

|

|||

|

|

@ -4886,9 +4886,9 @@ class Eynollah:

|

|||

textline_mask_tot_ea_org[img_revised_tab==drop_label_in_full_layout] = 0

|

||||

|

||||

|

||||

text_only = ((img_revised_tab[:, :] == 1)) * 1

|

||||

text_only = (img_revised_tab[:, :] == 1) * 1

|

||||

if np.abs(slope_deskew) >= SLOPE_THRESHOLD:

|

||||

text_only_d = ((text_regions_p_1_n[:, :] == 1)) * 1

|

||||

text_only_d = (text_regions_p_1_n[:, :] == 1) * 1

|

||||

|

||||

#print("text region early 2 in %.1fs", time.time() - t0)

|

||||

###min_con_area = 0.000005

|

||||

|

|

|

|||

|

|

@ -12,7 +12,7 @@ from .utils import crop_image_inside_box

|

|||

from .utils.rotate import rotate_image_different

|

||||

from .utils.resize import resize_image

|

||||

|

||||

class EynollahPlotter():

|

||||

class EynollahPlotter:

|

||||

"""

|

||||

Class collecting all the plotting and image writing methods

|

||||

"""

|

||||

|

|

|

|||

|

|

@ -138,8 +138,7 @@ def return_x_start_end_mothers_childs_and_type_of_reading_order(

|

|||

min_ys=np.min(y_sep)

|

||||

max_ys=np.max(y_sep)

|

||||

|

||||

y_mains=[]

|

||||

y_mains.append(min_ys)

|

||||

y_mains= [min_ys]

|

||||

y_mains_sep_ohne_grenzen=[]

|

||||

|

||||

for ii in range(len(new_main_sep_y)):

|

||||

|

|

@ -493,8 +492,7 @@ def find_num_col(regions_without_separators, num_col_classifier, tables, multipl

|

|||

# print(forest[np.argmin(z[forest]) ] )

|

||||

if not isNaN(forest[np.argmin(z[forest])]):

|

||||

peaks_neg_true.append(forest[np.argmin(z[forest])])

|

||||

forest = []

|

||||

forest.append(peaks_neg_fin[i + 1])

|

||||

forest = [peaks_neg_fin[i + 1]]

|

||||

if i == (len(peaks_neg_fin) - 1):

|

||||

# print(print(forest[np.argmin(z[forest]) ] ))

|

||||

if not isNaN(forest[np.argmin(z[forest])]):

|

||||

|

|

@ -662,8 +660,7 @@ def find_num_col_only_image(regions_without_separators, multiplier=3.8):

|

|||

# print(forest[np.argmin(z[forest]) ] )

|

||||

if not isNaN(forest[np.argmin(z[forest])]):

|

||||

peaks_neg_true.append(forest[np.argmin(z[forest])])

|

||||

forest = []

|

||||

forest.append(peaks_neg_fin[i + 1])

|

||||

forest = [peaks_neg_fin[i + 1]]

|

||||

if i == (len(peaks_neg_fin) - 1):

|

||||

# print(print(forest[np.argmin(z[forest]) ] ))

|

||||

if not isNaN(forest[np.argmin(z[forest])]):

|

||||

|

|

@ -1211,7 +1208,7 @@ def order_of_regions(textline_mask, contours_main, contours_header, y_ref):

|

|||

|

||||

##plt.plot(z)

|

||||

##plt.show()

|

||||

if contours_main != None:

|

||||

if contours_main is not None:

|

||||

areas_main = np.array([cv2.contourArea(contours_main[j]) for j in range(len(contours_main))])

|

||||

M_main = [cv2.moments(contours_main[j]) for j in range(len(contours_main))]

|

||||

cx_main = [(M_main[j]["m10"] / (M_main[j]["m00"] + 1e-32)) for j in range(len(M_main))]

|

||||

|

|

@ -1222,7 +1219,7 @@ def order_of_regions(textline_mask, contours_main, contours_header, y_ref):

|

|||

y_min_main = np.array([np.min(contours_main[j][:, 0, 1]) for j in range(len(contours_main))])

|

||||

y_max_main = np.array([np.max(contours_main[j][:, 0, 1]) for j in range(len(contours_main))])

|

||||

|

||||

if len(contours_header) != None:

|

||||

if len(contours_header) is not None:

|

||||

areas_header = np.array([cv2.contourArea(contours_header[j]) for j in range(len(contours_header))])

|

||||

M_header = [cv2.moments(contours_header[j]) for j in range(len(contours_header))]

|

||||

cx_header = [(M_header[j]["m10"] / (M_header[j]["m00"] + 1e-32)) for j in range(len(M_header))]

|

||||

|

|

@ -1235,17 +1232,16 @@ def order_of_regions(textline_mask, contours_main, contours_header, y_ref):

|

|||

y_max_header = np.array([np.max(contours_header[j][:, 0, 1]) for j in range(len(contours_header))])

|

||||

# print(cy_main,'mainy')

|

||||

|

||||

peaks_neg_new = []

|

||||

peaks_neg_new.append(0 + y_ref)

|

||||

peaks_neg_new = [0 + y_ref]

|

||||

for iii in range(len(peaks_neg)):

|

||||

peaks_neg_new.append(peaks_neg[iii] + y_ref)

|

||||

peaks_neg_new.append(textline_mask.shape[0] + y_ref)

|

||||

|

||||

if len(cy_main) > 0 and np.max(cy_main) > np.max(peaks_neg_new):

|

||||

cy_main = np.array(cy_main) * (np.max(peaks_neg_new) / np.max(cy_main)) - 10

|

||||

if contours_main != None:

|

||||

if contours_main is not None:

|

||||

indexer_main = np.arange(len(contours_main))

|

||||

if contours_main != None:

|

||||

if contours_main is not None:

|

||||

len_main = len(contours_main)

|

||||

else:

|

||||

len_main = 0

|

||||

|

|

@ -1271,11 +1267,11 @@ def order_of_regions(textline_mask, contours_main, contours_header, y_ref):

|

|||

top = peaks_neg_new[i]

|

||||

down = peaks_neg_new[i + 1]

|

||||

indexes_in = matrix_of_orders[:, 0][(matrix_of_orders[:, 3] >= top) &

|

||||

((matrix_of_orders[:, 3] < down))]

|

||||

(matrix_of_orders[:, 3] < down)]

|

||||

cxs_in = matrix_of_orders[:, 2][(matrix_of_orders[:, 3] >= top) &

|

||||

((matrix_of_orders[:, 3] < down))]

|

||||

(matrix_of_orders[:, 3] < down)]

|

||||

cys_in = matrix_of_orders[:, 3][(matrix_of_orders[:, 3] >= top) &

|

||||

((matrix_of_orders[:, 3] < down))]

|

||||

(matrix_of_orders[:, 3] < down)]

|

||||

types_of_text = matrix_of_orders[:, 1][(matrix_of_orders[:, 3] >= top) &

|

||||

(matrix_of_orders[:, 3] < down)]

|

||||

index_types_of_text = matrix_of_orders[:, 4][(matrix_of_orders[:, 3] >= top) &

|

||||

|

|

@ -1404,8 +1400,7 @@ def combine_hor_lines_and_delete_cross_points_and_get_lines_features_back_new(

|

|||

return img_p_in[:,:,0], special_separators

|

||||

|

||||

def return_points_with_boundies(peaks_neg_fin, first_point, last_point):

|

||||

peaks_neg_tot = []

|

||||

peaks_neg_tot.append(first_point)

|

||||

peaks_neg_tot = [first_point]

|

||||

for ii in range(len(peaks_neg_fin)):

|

||||

peaks_neg_tot.append(peaks_neg_fin[ii])

|

||||

peaks_neg_tot.append(last_point)

|

||||

|

|

@ -1413,7 +1408,7 @@ def return_points_with_boundies(peaks_neg_fin, first_point, last_point):

|

|||

|

||||

def find_number_of_columns_in_document(region_pre_p, num_col_classifier, tables, pixel_lines, contours_h=None):

|

||||

t_ins_c0 = time.time()

|

||||

separators_closeup=( (region_pre_p[:,:,:]==pixel_lines))*1

|

||||

separators_closeup= (region_pre_p[:, :, :] == pixel_lines) * 1

|

||||

separators_closeup[0:110,:,:]=0

|

||||

separators_closeup[separators_closeup.shape[0]-150:,:,:]=0

|

||||

|

||||

|

|

@ -1452,7 +1447,7 @@ def find_number_of_columns_in_document(region_pre_p, num_col_classifier, tables,

|

|||

gray = cv2.bitwise_not(separators_closeup_n_binary)

|

||||

gray=gray.astype(np.uint8)

|

||||

|

||||

bw = cv2.adaptiveThreshold(gray, 255, cv2.ADAPTIVE_THRESH_MEAN_C, \

|

||||

bw = cv2.adaptiveThreshold(gray, 255, cv2.ADAPTIVE_THRESH_MEAN_C,

|

||||

cv2.THRESH_BINARY, 15, -2)

|

||||

horizontal = np.copy(bw)

|

||||

vertical = np.copy(bw)

|

||||

|

|

@ -1588,8 +1583,7 @@ def find_number_of_columns_in_document(region_pre_p, num_col_classifier, tables,

|

|||

args_cy_splitter=np.argsort(cy_main_splitters)

|

||||

cy_main_splitters_sort=cy_main_splitters[args_cy_splitter]

|

||||

|

||||

splitter_y_new=[]

|

||||

splitter_y_new.append(0)

|

||||

splitter_y_new= [0]

|

||||

for i in range(len(cy_main_splitters_sort)):

|

||||

splitter_y_new.append( cy_main_splitters_sort[i] )

|

||||

splitter_y_new.append(region_pre_p.shape[0])

|

||||

|

|

@ -1663,8 +1657,7 @@ def return_boxes_of_images_by_order_of_reading_new(

|

|||

num_col, peaks_neg_fin = find_num_col(

|

||||

regions_without_separators[int(splitter_y_new[i]):int(splitter_y_new[i+1]),:],

|

||||

num_col_classifier, tables, multiplier=3.)

|

||||

peaks_neg_fin_early=[]

|

||||

peaks_neg_fin_early.append(0)

|

||||

peaks_neg_fin_early= [0]

|

||||

#print(peaks_neg_fin,'peaks_neg_fin')

|

||||

for p_n in peaks_neg_fin:

|

||||

peaks_neg_fin_early.append(p_n)

|

||||

|

|

|

|||

|

|

@ -239,8 +239,7 @@ def do_back_rotation_and_get_cnt_back(contour_par, index_r_con, img, slope_first

|

|||

|

||||

cont_int, _ = cv2.findContours(thresh, cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)

|

||||

if len(cont_int)==0:

|

||||

cont_int = []

|

||||

cont_int.append(contour_par)

|

||||

cont_int = [contour_par]

|

||||

confidence_contour = 0

|

||||

else:

|

||||

cont_int[0][:, 0, 0] = cont_int[0][:, 0, 0] + np.abs(img_copy.shape[1] - img.shape[1])

|

||||

|

|

|

|||

|

|

@ -3,7 +3,7 @@ from collections import Counter

|

|||

REGION_ID_TEMPLATE = 'region_%04d'

|

||||

LINE_ID_TEMPLATE = 'region_%04d_line_%04d'

|

||||

|

||||

class EynollahIdCounter():

|

||||

class EynollahIdCounter:

|

||||

|

||||

def __init__(self, region_idx=0, line_idx=0):

|

||||

self._counter = Counter()

|

||||

|

|

|

|||

|

|

@ -76,7 +76,7 @@ def get_marginals(text_with_lines, text_regions, num_col, slope_deskew, light_ve

|

|||

|

||||

peaks, _ = find_peaks(text_with_lines_y_rev, height=0)

|

||||

peaks=np.array(peaks)

|

||||

peaks=peaks[(peaks>first_nonzero) & ((peaks<last_nonzero))]

|

||||

peaks=peaks[(peaks>first_nonzero) & (peaks < last_nonzero)]

|

||||

peaks=peaks[region_sum_0[peaks]<min_textline_thickness ]

|

||||

|

||||

|

||||

|

|

|

|||

|

|

@ -1174,8 +1174,7 @@ def separate_lines_new_inside_tiles(img_path, thetha):

|

|||

if diff_peaks[i] > cut_off:

|

||||

if not np.isnan(forest[np.argmin(z[forest])]):

|

||||

peaks_neg_true.append(forest[np.argmin(z[forest])])

|

||||

forest = []

|

||||

forest.append(peaks_neg[i + 1])

|

||||

forest = [peaks_neg[i + 1]]

|

||||

if i == (len(peaks_neg) - 1):

|

||||

if not np.isnan(forest[np.argmin(z[forest])]):

|

||||

peaks_neg_true.append(forest[np.argmin(z[forest])])

|

||||

|

|

@ -1195,8 +1194,7 @@ def separate_lines_new_inside_tiles(img_path, thetha):

|

|||

if diff_peaks_pos[i] > cut_off:

|

||||

if not np.isnan(forest[np.argmax(z[forest])]):

|

||||

peaks_pos_true.append(forest[np.argmax(z[forest])])

|

||||

forest = []

|

||||

forest.append(peaks[i + 1])

|

||||

forest = [peaks[i + 1]]

|

||||

if i == (len(peaks) - 1):

|

||||

if not np.isnan(forest[np.argmax(z[forest])]):

|

||||

peaks_pos_true.append(forest[np.argmax(z[forest])])

|

||||

|

|

@ -1430,9 +1428,9 @@ def separate_lines_new2(img_path, thetha, num_col, slope_region, logger=None, pl

|

|||

img_int = np.zeros((img_xline.shape[0], img_xline.shape[1]))

|

||||

img_int[:, :] = img_xline[:, :] # img_patch_org[:,:,0]

|

||||

|

||||

img_resized = np.zeros((int(img_int.shape[0] * (1.2)), int(img_int.shape[1] * (3))))

|

||||

img_resized[int(img_int.shape[0] * (0.1)) : int(img_int.shape[0] * (0.1)) + img_int.shape[0],

|

||||

int(img_int.shape[1] * (1.0)) : int(img_int.shape[1] * (1.0)) + img_int.shape[1]] = img_int[:, :]

|

||||

img_resized = np.zeros((int(img_int.shape[0] * 1.2), int(img_int.shape[1] * 3)))

|

||||

img_resized[int(img_int.shape[0] * 0.1): int(img_int.shape[0] * 0.1) + img_int.shape[0],

|

||||

int(img_int.shape[1] * 1.0): int(img_int.shape[1] * 1.0) + img_int.shape[1]] = img_int[:, :]

|

||||

# plt.imshow(img_xline)

|

||||

# plt.show()

|

||||

img_line_rotated = rotate_image(img_resized, slopes_tile_wise[i])

|

||||

|

|

@ -1444,8 +1442,8 @@ def separate_lines_new2(img_path, thetha, num_col, slope_region, logger=None, pl

|

|||

img_patch_separated_returned[:, :][img_patch_separated_returned[:, :] != 0] = 1

|

||||

|

||||

img_patch_separated_returned_true_size = img_patch_separated_returned[

|

||||

int(img_int.shape[0] * (0.1)) : int(img_int.shape[0] * (0.1)) + img_int.shape[0],

|

||||

int(img_int.shape[1] * (1.0)) : int(img_int.shape[1] * (1.0)) + img_int.shape[1]]

|

||||

int(img_int.shape[0] * 0.1): int(img_int.shape[0] * 0.1) + img_int.shape[0],

|

||||

int(img_int.shape[1] * 1.0): int(img_int.shape[1] * 1.0) + img_int.shape[1]]

|

||||

|

||||

img_patch_separated_returned_true_size = img_patch_separated_returned_true_size[:, margin : length_x - margin]

|

||||

img_patch_ineterst_revised[:, index_x_d + margin : index_x_u - margin] = img_patch_separated_returned_true_size

|

||||

|

|

@ -1473,7 +1471,7 @@ def return_deskew_slop(img_patch_org, sigma_des,n_tot_angles=100,

|

|||

img_int[:,:]=img_patch_org[:,:]#img_patch_org[:,:,0]

|

||||

|

||||

max_shape=np.max(img_int.shape)

|

||||

img_resized=np.zeros((int( max_shape*(1.1) ) , int( max_shape*(1.1) ) ))

|

||||

img_resized=np.zeros((int(max_shape * 1.1) , int(max_shape * 1.1)))

|

||||

|

||||

onset_x=int((img_resized.shape[1]-img_int.shape[1])/2.)

|

||||

onset_y=int((img_resized.shape[0]-img_int.shape[0])/2.)

|

||||

|

|

@ -1538,7 +1536,7 @@ def return_deskew_slop_old_mp(img_patch_org, sigma_des,n_tot_angles=100,

|

|||

img_int[:,:]=img_patch_org[:,:]#img_patch_org[:,:,0]

|

||||

|

||||

max_shape=np.max(img_int.shape)

|

||||

img_resized=np.zeros((int( max_shape*(1.1) ) , int( max_shape*(1.1) ) ))

|

||||

img_resized=np.zeros((int(max_shape * 1.1) , int(max_shape * 1.1)))

|

||||

|

||||

onset_x=int((img_resized.shape[1]-img_int.shape[1])/2.)

|

||||

onset_y=int((img_resized.shape[0]-img_int.shape[0])/2.)

|

||||

|

|

|

|||

|

|

@ -21,7 +21,7 @@ from ocrd_models.ocrd_page import (

|

|||

)

|

||||

import numpy as np

|

||||

|

||||

class EynollahXmlWriter():

|

||||

class EynollahXmlWriter:

|

||||

|

||||

def __init__(self, *, dir_out, image_filename, curved_line,textline_light, pcgts=None):

|

||||

self.logger = getLogger('eynollah.writer')

|

||||

|

|

|

|||

201

train/LICENSE

201

train/LICENSE

|

|

@ -1,201 +0,0 @@

|

|||

Apache License

|

||||

Version 2.0, January 2004

|

||||

http://www.apache.org/licenses/

|

||||

|

||||

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

||||

|

||||

1. Definitions.

|

||||

|

||||

"License" shall mean the terms and conditions for use, reproduction,

|

||||

and distribution as defined by Sections 1 through 9 of this document.

|

||||

|

||||

"Licensor" shall mean the copyright owner or entity authorized by

|

||||

the copyright owner that is granting the License.

|

||||

|

||||

"Legal Entity" shall mean the union of the acting entity and all

|

||||

other entities that control, are controlled by, or are under common

|

||||

control with that entity. For the purposes of this definition,

|

||||

"control" means (i) the power, direct or indirect, to cause the

|

||||

direction or management of such entity, whether by contract or

|

||||

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

||||

outstanding shares, or (iii) beneficial ownership of such entity.

|

||||

|

||||

"You" (or "Your") shall mean an individual or Legal Entity

|

||||

exercising permissions granted by this License.

|

||||

|

||||

"Source" form shall mean the preferred form for making modifications,

|

||||

including but not limited to software source code, documentation

|

||||

source, and configuration files.

|

||||

|

||||

"Object" form shall mean any form resulting from mechanical

|

||||

transformation or translation of a Source form, including but

|

||||

not limited to compiled object code, generated documentation,

|

||||

and conversions to other media types.

|

||||

|

||||

"Work" shall mean the work of authorship, whether in Source or

|

||||

Object form, made available under the License, as indicated by a

|

||||

copyright notice that is included in or attached to the work

|

||||

(an example is provided in the Appendix below).

|

||||

|

||||

"Derivative Works" shall mean any work, whether in Source or Object

|

||||

form, that is based on (or derived from) the Work and for which the

|

||||

editorial revisions, annotations, elaborations, or other modifications

|

||||

represent, as a whole, an original work of authorship. For the purposes

|

||||

of this License, Derivative Works shall not include works that remain

|

||||

separable from, or merely link (or bind by name) to the interfaces of,

|

||||

the Work and Derivative Works thereof.

|

||||

|

||||

"Contribution" shall mean any work of authorship, including

|

||||

the original version of the Work and any modifications or additions

|

||||

to that Work or Derivative Works thereof, that is intentionally

|

||||

submitted to Licensor for inclusion in the Work by the copyright owner

|

||||

or by an individual or Legal Entity authorized to submit on behalf of

|

||||

the copyright owner. For the purposes of this definition, "submitted"

|

||||

means any form of electronic, verbal, or written communication sent

|

||||

to the Licensor or its representatives, including but not limited to

|

||||

communication on electronic mailing lists, source code control systems,

|

||||

and issue tracking systems that are managed by, or on behalf of, the

|

||||

Licensor for the purpose of discussing and improving the Work, but

|

||||

excluding communication that is conspicuously marked or otherwise

|

||||

designated in writing by the copyright owner as "Not a Contribution."

|

||||

|

||||

"Contributor" shall mean Licensor and any individual or Legal Entity

|

||||

on behalf of whom a Contribution has been received by Licensor and

|

||||

subsequently incorporated within the Work.

|

||||

|

||||

2. Grant of Copyright License. Subject to the terms and conditions of

|

||||

this License, each Contributor hereby grants to You a perpetual,

|

||||

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

||||

copyright license to reproduce, prepare Derivative Works of,

|

||||

publicly display, publicly perform, sublicense, and distribute the

|

||||

Work and such Derivative Works in Source or Object form.

|

||||

|

||||

3. Grant of Patent License. Subject to the terms and conditions of

|

||||

this License, each Contributor hereby grants to You a perpetual,

|

||||

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

||||

(except as stated in this section) patent license to make, have made,

|

||||

use, offer to sell, sell, import, and otherwise transfer the Work,

|

||||

where such license applies only to those patent claims licensable

|

||||

by such Contributor that are necessarily infringed by their

|

||||

Contribution(s) alone or by combination of their Contribution(s)

|

||||

with the Work to which such Contribution(s) was submitted. If You

|

||||

institute patent litigation against any entity (including a

|

||||

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

||||

or a Contribution incorporated within the Work constitutes direct

|

||||

or contributory patent infringement, then any patent licenses

|

||||

granted to You under this License for that Work shall terminate

|

||||

as of the date such litigation is filed.

|

||||

|

||||

4. Redistribution. You may reproduce and distribute copies of the

|

||||

Work or Derivative Works thereof in any medium, with or without

|

||||

modifications, and in Source or Object form, provided that You

|

||||

meet the following conditions:

|

||||

|

||||

(a) You must give any other recipients of the Work or

|

||||

Derivative Works a copy of this License; and

|

||||

|

||||

(b) You must cause any modified files to carry prominent notices

|

||||

stating that You changed the files; and

|

||||

|

||||

(c) You must retain, in the Source form of any Derivative Works

|

||||

that You distribute, all copyright, patent, trademark, and

|

||||

attribution notices from the Source form of the Work,

|

||||

excluding those notices that do not pertain to any part of

|

||||

the Derivative Works; and

|

||||

|

||||

(d) If the Work includes a "NOTICE" text file as part of its

|

||||

distribution, then any Derivative Works that You distribute must

|

||||

include a readable copy of the attribution notices contained

|

||||

within such NOTICE file, excluding those notices that do not

|

||||

pertain to any part of the Derivative Works, in at least one

|

||||

of the following places: within a NOTICE text file distributed

|

||||

as part of the Derivative Works; within the Source form or

|

||||

documentation, if provided along with the Derivative Works; or,

|

||||

within a display generated by the Derivative Works, if and

|

||||

wherever such third-party notices normally appear. The contents

|

||||

of the NOTICE file are for informational purposes only and

|

||||

do not modify the License. You may add Your own attribution

|

||||

notices within Derivative Works that You distribute, alongside

|

||||

or as an addendum to the NOTICE text from the Work, provided

|

||||

that such additional attribution notices cannot be construed

|

||||

as modifying the License.

|

||||

|

||||

You may add Your own copyright statement to Your modifications and

|

||||

may provide additional or different license terms and conditions

|

||||

for use, reproduction, or distribution of Your modifications, or

|

||||

for any such Derivative Works as a whole, provided Your use,

|

||||

reproduction, and distribution of the Work otherwise complies with

|

||||

the conditions stated in this License.

|

||||

|

||||

5. Submission of Contributions. Unless You explicitly state otherwise,

|

||||

any Contribution intentionally submitted for inclusion in the Work

|

||||

by You to the Licensor shall be under the terms and conditions of

|

||||

this License, without any additional terms or conditions.

|

||||

Notwithstanding the above, nothing herein shall supersede or modify

|

||||

the terms of any separate license agreement you may have executed

|

||||

with Licensor regarding such Contributions.

|

||||

|

||||

6. Trademarks. This License does not grant permission to use the trade

|

||||

names, trademarks, service marks, or product names of the Licensor,

|

||||

except as required for reasonable and customary use in describing the

|

||||

origin of the Work and reproducing the content of the NOTICE file.

|

||||

|

||||

7. Disclaimer of Warranty. Unless required by applicable law or

|

||||

agreed to in writing, Licensor provides the Work (and each

|

||||

Contributor provides its Contributions) on an "AS IS" BASIS,

|

||||

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

||||

implied, including, without limitation, any warranties or conditions

|

||||

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

||||

PARTICULAR PURPOSE. You are solely responsible for determining the

|

||||

appropriateness of using or redistributing the Work and assume any

|

||||

risks associated with Your exercise of permissions under this License.

|

||||

|

||||

8. Limitation of Liability. In no event and under no legal theory,

|

||||

whether in tort (including negligence), contract, or otherwise,

|

||||

unless required by applicable law (such as deliberate and grossly

|

||||

negligent acts) or agreed to in writing, shall any Contributor be

|

||||

liable to You for damages, including any direct, indirect, special,

|

||||

incidental, or consequential damages of any character arising as a

|

||||

result of this License or out of the use or inability to use the

|

||||

Work (including but not limited to damages for loss of goodwill,

|

||||

work stoppage, computer failure or malfunction, or any and all

|

||||

other commercial damages or losses), even if such Contributor

|

||||

has been advised of the possibility of such damages.

|

||||

|

||||

9. Accepting Warranty or Additional Liability. While redistributing

|

||||

the Work or Derivative Works thereof, You may choose to offer,

|

||||

and charge a fee for, acceptance of support, warranty, indemnity,

|

||||

or other liability obligations and/or rights consistent with this

|

||||

License. However, in accepting such obligations, You may act only

|

||||

on Your own behalf and on Your sole responsibility, not on behalf

|

||||

of any other Contributor, and only if You agree to indemnify,

|

||||

defend, and hold each Contributor harmless for any liability

|

||||

incurred by, or claims asserted against, such Contributor by reason

|

||||

of your accepting any such warranty or additional liability.

|

||||

|

||||

END OF TERMS AND CONDITIONS

|

||||

|

||||

APPENDIX: How to apply the Apache License to your work.

|

||||

|

||||

To apply the Apache License to your work, attach the following

|

||||

boilerplate notice, with the fields enclosed by brackets "[]"

|

||||

replaced with your own identifying information. (Don't include

|

||||

the brackets!) The text should be enclosed in the appropriate

|

||||

comment syntax for the file format. We also recommend that a

|

||||

file or class name and description of purpose be included on the

|

||||

same "printed page" as the copyright notice for easier

|

||||

identification within third-party archives.

|

||||

|

||||

Copyright [yyyy] [name of copyright owner]

|

||||

|

||||

Licensed under the Apache License, Version 2.0 (the "License");

|

||||

you may not use this file except in compliance with the License.

|

||||

You may obtain a copy of the License at

|

||||

|

||||

http://www.apache.org/licenses/LICENSE-2.0

|

||||

|

||||

Unless required by applicable law or agreed to in writing, software

|

||||

distributed under the License is distributed on an "AS IS" BASIS,

|

||||

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

||||

See the License for the specific language governing permissions and

|

||||

limitations under the License.

|

||||

|

|

@ -173,7 +173,7 @@ class sbb_predict:

|

|||

##if self.weights_dir!=None:

|

||||

##self.model.load_weights(self.weights_dir)

|

||||

|

||||

if (self.task != 'classification' and self.task != 'reading_order'):

|

||||

if self.task != 'classification' and self.task != 'reading_order':

|

||||

self.img_height=self.model.layers[len(self.model.layers)-1].output_shape[1]

|

||||

self.img_width=self.model.layers[len(self.model.layers)-1].output_shape[2]

|

||||

self.n_classes=self.model.layers[len(self.model.layers)-1].output_shape[3]

|

||||

|

|

@ -305,8 +305,7 @@ class sbb_predict:

|

|||

input_1= np.zeros( (inference_bs, img_height, img_width,3))

|

||||

|

||||

|

||||

starting_list_of_regions = []

|

||||

starting_list_of_regions.append( list(range(labels_con.shape[2])) )

|

||||

starting_list_of_regions = [list(range(labels_con.shape[2]))]

|

||||

|

||||

index_update = 0

|

||||

index_selected = starting_list_of_regions[0]

|

||||

|

|

@ -561,7 +560,7 @@ class sbb_predict:

|

|||

if self.image:

|

||||

res=self.predict(image_dir = self.image)

|

||||

|

||||

if (self.task == 'classification' or self.task == 'reading_order'):

|

||||

if self.task == 'classification' or self.task == 'reading_order':

|

||||

pass

|

||||

elif self.task == 'enhancement':

|

||||

if self.save:

|

||||

|

|

@ -584,7 +583,7 @@ class sbb_predict:

|

|||

image_dir = os.path.join(self.dir_in, ind_image)

|

||||

res=self.predict(image_dir)

|

||||

|

||||

if (self.task == 'classification' or self.task == 'reading_order'):

|

||||

if self.task == 'classification' or self.task == 'reading_order':

|

||||

pass

|

||||

elif self.task == 'enhancement':

|

||||

self.save = os.path.join(self.out, f_name+'.png')

|

||||

|

|

@ -665,7 +664,7 @@ def main(image, dir_in, model, patches, save, save_layout, ground_truth, xml_fil

|

|||

with open(os.path.join(model,'config.json')) as f:

|

||||

config_params_model = json.load(f)

|

||||

task = config_params_model['task']

|

||||

if (task != 'classification' and task != 'reading_order'):

|

||||

if task != 'classification' and task != 'reading_order':

|

||||

if image and not save:

|

||||

print("Error: You used one of segmentation or binarization task with image input but not set -s, you need a filename to save visualized output with -s")

|

||||

sys.exit(1)

|

||||

|

|

|

|||

|

|

@ -394,7 +394,9 @@ def resnet50_unet(n_classes, input_height=224, input_width=224, task="segmentati

|

|||

return model

|

||||

|

||||

|

||||

def vit_resnet50_unet(n_classes, patch_size_x, patch_size_y, num_patches, mlp_head_units=[128, 64], transformer_layers=8, num_heads =4, projection_dim = 64, input_height=224, input_width=224, task="segmentation", weight_decay=1e-6, pretraining=False):

|

||||

def vit_resnet50_unet(n_classes, patch_size_x, patch_size_y, num_patches, mlp_head_units=None, transformer_layers=8, num_heads =4, projection_dim = 64, input_height=224, input_width=224, task="segmentation", weight_decay=1e-6, pretraining=False):

|

||||

if mlp_head_units is None:

|

||||

mlp_head_units = [128, 64]

|

||||

inputs = layers.Input(shape=(input_height, input_width, 3))

|

||||

|

||||

#transformer_units = [

|

||||

|

|

@ -516,7 +518,9 @@ def vit_resnet50_unet(n_classes, patch_size_x, patch_size_y, num_patches, mlp_he

|

|||

|

||||

return model

|

||||

|

||||

def vit_resnet50_unet_transformer_before_cnn(n_classes, patch_size_x, patch_size_y, num_patches, mlp_head_units=[128, 64], transformer_layers=8, num_heads =4, projection_dim = 64, input_height=224, input_width=224, task="segmentation", weight_decay=1e-6, pretraining=False):

|

||||

def vit_resnet50_unet_transformer_before_cnn(n_classes, patch_size_x, patch_size_y, num_patches, mlp_head_units=None, transformer_layers=8, num_heads =4, projection_dim = 64, input_height=224, input_width=224, task="segmentation", weight_decay=1e-6, pretraining=False):

|

||||

if mlp_head_units is None:

|

||||

mlp_head_units = [128, 64]

|

||||

inputs = layers.Input(shape=(input_height, input_width, 3))

|

||||

|

||||

##transformer_units = [

|

||||

|

|

|

|||

|

|

@ -269,10 +269,10 @@ def run(_config, n_classes, n_epochs, input_height,

|

|||

num_patches = num_patches_x * num_patches_y

|

||||

|

||||

if transformer_cnn_first:

|

||||

if (input_height != (num_patches_y * transformer_patchsize_y * 32) ):

|

||||

if input_height != (num_patches_y * transformer_patchsize_y * 32):

|

||||

print("Error: transformer_patchsize_y or transformer_num_patches_xy height value error . input_height should be equal to ( transformer_num_patches_xy height value * transformer_patchsize_y * 32)")

|

||||

sys.exit(1)

|

||||

if (input_width != (num_patches_x * transformer_patchsize_x * 32) ):

|

||||

if input_width != (num_patches_x * transformer_patchsize_x * 32):

|

||||

print("Error: transformer_patchsize_x or transformer_num_patches_xy width value error . input_width should be equal to ( transformer_num_patches_xy width value * transformer_patchsize_x * 32)")

|

||||

sys.exit(1)

|

||||

if (transformer_projection_dim % (transformer_patchsize_y * transformer_patchsize_x)) != 0:

|

||||

|

|

@ -282,10 +282,10 @@ def run(_config, n_classes, n_epochs, input_height,

|

|||

|

||||

model = vit_resnet50_unet(n_classes, transformer_patchsize_x, transformer_patchsize_y, num_patches, transformer_mlp_head_units, transformer_layers, transformer_num_heads, transformer_projection_dim, input_height, input_width, task, weight_decay, pretraining)

|

||||

else:

|

||||

if (input_height != (num_patches_y * transformer_patchsize_y) ):

|

||||

if input_height != (num_patches_y * transformer_patchsize_y):

|

||||

print("Error: transformer_patchsize_y or transformer_num_patches_xy height value error . input_height should be equal to ( transformer_num_patches_xy height value * transformer_patchsize_y)")

|

||||

sys.exit(1)

|

||||

if (input_width != (num_patches_x * transformer_patchsize_x) ):

|

||||

if input_width != (num_patches_x * transformer_patchsize_x):

|

||||

print("Error: transformer_patchsize_x or transformer_num_patches_xy width value error . input_width should be equal to ( transformer_num_patches_xy width value * transformer_patchsize_x)")

|

||||

sys.exit(1)

|

||||

if (transformer_projection_dim % (transformer_patchsize_y * transformer_patchsize_x)) != 0:

|

||||

|

|

@ -297,7 +297,7 @@ def run(_config, n_classes, n_epochs, input_height,

|

|||

model.summary()

|

||||

|

||||

|

||||

if (task == "segmentation" or task == "binarization"):

|

||||

if task == "segmentation" or task == "binarization":

|

||||

if not is_loss_soft_dice and not weighted_loss:

|

||||

model.compile(loss='categorical_crossentropy',

|

||||

optimizer=Adam(learning_rate=learning_rate), metrics=['accuracy'])

|

||||

|

|

@ -365,8 +365,7 @@ def run(_config, n_classes, n_epochs, input_height,

|

|||

|

||||

y_tot=np.zeros((testX.shape[0],n_classes))

|

||||

|

||||

score_best=[]

|

||||

score_best.append(0)

|

||||

score_best= [0]

|

||||

|

||||

num_rows = return_number_of_total_training_data(dir_train)

|

||||

weights=[]

|

||||

|

|

|

|||

|

|

@ -260,7 +260,7 @@ def generate_data_from_folder_training(path_classes, batchsize, height, width, n

|

|||

|

||||

if batchcount>=batchsize:

|

||||

ret_x = ret_x/255.

|

||||

yield (ret_x, ret_y)

|

||||

yield ret_x, ret_y

|

||||

ret_x= np.zeros((batchsize, height,width, 3)).astype(np.int16)

|

||||

ret_y= np.zeros((batchsize, n_classes)).astype(np.int16)

|

||||

batchcount = 0

|

||||

|

|

@ -446,7 +446,7 @@ def generate_arrays_from_folder_reading_order(classes_file_dir, modal_dir, batch

|

|||

ret_y[batchcount, :] = label_class

|

||||

batchcount+=1

|

||||

if batchcount>=batchsize:

|

||||

yield (ret_x, ret_y)

|

||||

yield ret_x, ret_y

|

||||

ret_x= np.zeros((batchsize, height, width, 3))#.astype(np.int16)

|

||||

ret_y= np.zeros((batchsize, n_classes)).astype(np.int16)

|

||||

batchcount = 0

|

||||

|

|

@ -464,7 +464,7 @@ def generate_arrays_from_folder_reading_order(classes_file_dir, modal_dir, batch

|

|||

ret_y[batchcount, :] = label_class

|

||||

batchcount+=1

|

||||

if batchcount>=batchsize:

|

||||

yield (ret_x, ret_y)

|

||||

yield ret_x, ret_y

|

||||

ret_x= np.zeros((batchsize, height, width, 3))#.astype(np.int16)

|

||||

ret_y= np.zeros((batchsize, n_classes)).astype(np.int16)

|

||||

batchcount = 0

|

||||

|

|

|

|||

Loading…

Add table

Add a link

Reference in a new issue