Mirror of https://github.com/qurator-spk/eynollah.git (synced 2026-05-13 01:13:54 +02:00)

Merge pull request #138 from qurator-spk/extracting_images_only

Extracting images only

commit bceeeb56c1

34 changed files with 778 additions and 723 deletions

|

|

@ -1,51 +0,0 @@

|

||||||

version: 2

|

|

||||||

|

|

||||||

jobs:

|

|

||||||

|

|

||||||

build-python37:

|

|

||||||

machine:

|

|

||||||

- image: ubuntu-2004:2023.02.1

|

|

||||||

|

|

||||||

steps:

|

|

||||||

- checkout

|

|

||||||

- restore_cache:

|

|

||||||

keys:

|

|

||||||

- model-cache

|

|

||||||

- run: make models

|

|

||||||

- save_cache:

|

|

||||||

key: model-cache

|

|

||||||

paths:

|

|

||||||

models_eynollah.tar.gz

|

|

||||||

models_eynollah

|

|

||||||

- run:

|

|

||||||

name: "Set Python Version"

|

|

||||||

command: pyenv install -s 3.7.16 && pyenv global 3.7.16

|

|

||||||

- run: make install

|

|

||||||

- run: make smoke-test

|

|

||||||

|

|

||||||

build-python38:

|

|

||||||

machine:

|

|

||||||

- image: ubuntu-2004:2023.02.1

|

|

||||||

steps:

|

|

||||||

- checkout

|

|

||||||

- restore_cache:

|

|

||||||

keys:

|

|

||||||

- model-cache

|

|

||||||

- run: make models

|

|

||||||

- save_cache:

|

|

||||||

key: model-cache

|

|

||||||

paths:

|

|

||||||

models_eynollah.tar.gz

|

|

||||||

models_eynollah

|

|

||||||

- run:

|

|

||||||

name: "Set Python Version"

|

|

||||||

command: pyenv install -s 3.8.16 && pyenv global 3.8.16

|

|

||||||

- run: make install

|

|

||||||

- run: make smoke-test

|

|

||||||

|

|

||||||

workflows:

|

|

||||||

version: 2

|

|

||||||

build:

|

|

||||||

jobs:

|

|

||||||

# - build-python37

|

|

||||||

- build-python38

|

|

||||||

8

.github/workflows/test-eynollah.yml

vendored

|

|

@ -1,7 +1,7 @@

|

||||||

# This workflow will install Python dependencies, run tests and lint with a variety of Python versions

|

# This workflow will install Python dependencies, run tests and lint with a variety of Python versions

|

||||||

# For more information see: https://help.github.com/actions/language-and-framework-guides/using-python-with-github-actions

|

# For more information see: https://help.github.com/actions/language-and-framework-guides/using-python-with-github-actions

|

||||||

|

|

||||||

name: Python package

|

name: Test

|

||||||

|

|

||||||

on: [push]

|

on: [push]

|

||||||

|

|

||||||

|

|

@ -14,8 +14,8 @@ jobs:

|

||||||

python-version: ['3.8', '3.9', '3.10', '3.11']

|

python-version: ['3.8', '3.9', '3.10', '3.11']

|

||||||

|

|

||||||

steps:

|

steps:

|

||||||

- uses: actions/checkout@v2

|

- uses: actions/checkout@v4

|

||||||

- uses: actions/cache@v2

|

- uses: actions/cache@v4

|

||||||

id: model_cache

|

id: model_cache

|

||||||

with:

|

with:

|

||||||

path: models_eynollah

|

path: models_eynollah

|

||||||

|

|

@ -24,7 +24,7 @@ jobs:

|

||||||

if: steps.model_cache.outputs.cache-hit != 'true'

|

if: steps.model_cache.outputs.cache-hit != 'true'

|

||||||

run: make models

|

run: make models

|

||||||

- name: Set up Python ${{ matrix.python-version }}

|

- name: Set up Python ${{ matrix.python-version }}

|

||||||

uses: actions/setup-python@v2

|

uses: actions/setup-python@v5

|

||||||

with:

|

with:

|

||||||

python-version: ${{ matrix.python-version }}

|

python-version: ${{ matrix.python-version }}

|

||||||

- name: Install dependencies

|

- name: Install dependencies

|

||||||

|

|

|

||||||

10

CHANGELOG.md

|

|

@ -5,6 +5,14 @@ Versioned according to [Semantic Versioning](http://semver.org/).

|

||||||

|

|

||||||

## Unreleased

|

## Unreleased

|

||||||

|

|

||||||

|

## [0.3.1] - 2024-08-27

|

||||||

|

|

||||||

|

Fixed:

|

||||||

|

|

||||||

|

* regression in OCR-D processor, #106

|

||||||

|

* Expected Ptrcv::UMat for argument 'contour', #110

|

||||||

|

* Memory usage explosion with very narrow images (e.g. book spine), #67

|

||||||

|

|

||||||

## [0.3.0] - 2023-05-13

|

## [0.3.0] - 2023-05-13

|

||||||

|

|

||||||

Changed:

|

Changed:

|

||||||

|

|

@ -117,6 +125,8 @@ Fixed:

|

||||||

Initial release

|

Initial release

|

||||||

|

|

||||||

<!-- link-labels -->

|

<!-- link-labels -->

|

||||||

|

[0.3.1]: ../../compare/v0.3.1...v0.3.0

|

||||||

|

[0.3.0]: ../../compare/v0.3.0...v0.2.0

|

||||||

[0.2.0]: ../../compare/v0.2.0...v0.1.0

|

[0.2.0]: ../../compare/v0.2.0...v0.1.0

|

||||||

[0.1.0]: ../../compare/v0.1.0...v0.0.11

|

[0.1.0]: ../../compare/v0.1.0...v0.0.11

|

||||||

[0.0.11]: ../../compare/v0.0.11...v0.0.10

|

[0.0.11]: ../../compare/v0.0.11...v0.0.10

|

||||||

|

|

|

||||||

8

Makefile

|

|

@ -22,16 +22,14 @@ help:

|

||||||

models: models_eynollah

|

models: models_eynollah

|

||||||

|

|

||||||

models_eynollah: models_eynollah.tar.gz

|

models_eynollah: models_eynollah.tar.gz

|

||||||

# tar xf models_eynollah_renamed.tar.gz --transform 's/models_eynollah_renamed/models_eynollah/'

|

|

||||||

# tar xf models_eynollah_renamed.tar.gz

|

|

||||||

# tar xf 2022-04-05.SavedModel.tar.gz --transform 's/models_eynollah_renamed/models_eynollah/'

|

|

||||||

tar xf models_eynollah.tar.gz

|

tar xf models_eynollah.tar.gz

|

||||||

|

|

||||||

models_eynollah.tar.gz:

|

models_eynollah.tar.gz:

|

||||||

# wget 'https://qurator-data.de/eynollah/2021-04-25/models_eynollah.tar.gz'

|

# wget 'https://qurator-data.de/eynollah/2021-04-25/models_eynollah.tar.gz'

|

||||||

# wget 'https://qurator-data.de/eynollah/2022-04-05/models_eynollah_renamed.tar.gz'

|

# wget 'https://qurator-data.de/eynollah/2022-04-05/models_eynollah_renamed.tar.gz'

|

||||||

# wget 'https://ocr-d.kba.cloud/2022-04-05.SavedModel.tar.gz'

|

# wget 'https://qurator-data.de/eynollah/2022-04-05/models_eynollah_renamed_savedmodel.tar.gz'

|

||||||

wget https://github.com/qurator-spk/eynollah/releases/download/v0.3.0/models_eynollah.tar.gz

|

# wget 'https://github.com/qurator-spk/eynollah/releases/download/v0.3.0/models_eynollah.tar.gz'

|

||||||

|

wget 'https://github.com/qurator-spk/eynollah/releases/download/v0.3.1/models_eynollah.tar.gz'

|

||||||

|

|

||||||

# Install with pip

|

# Install with pip

|

||||||

install:

|

install:

|

||||||

|

|

|

||||||

67

README.md

|

|

@ -1,10 +1,10 @@

|

||||||

# Eynollah

|

# Eynollah

|

||||||

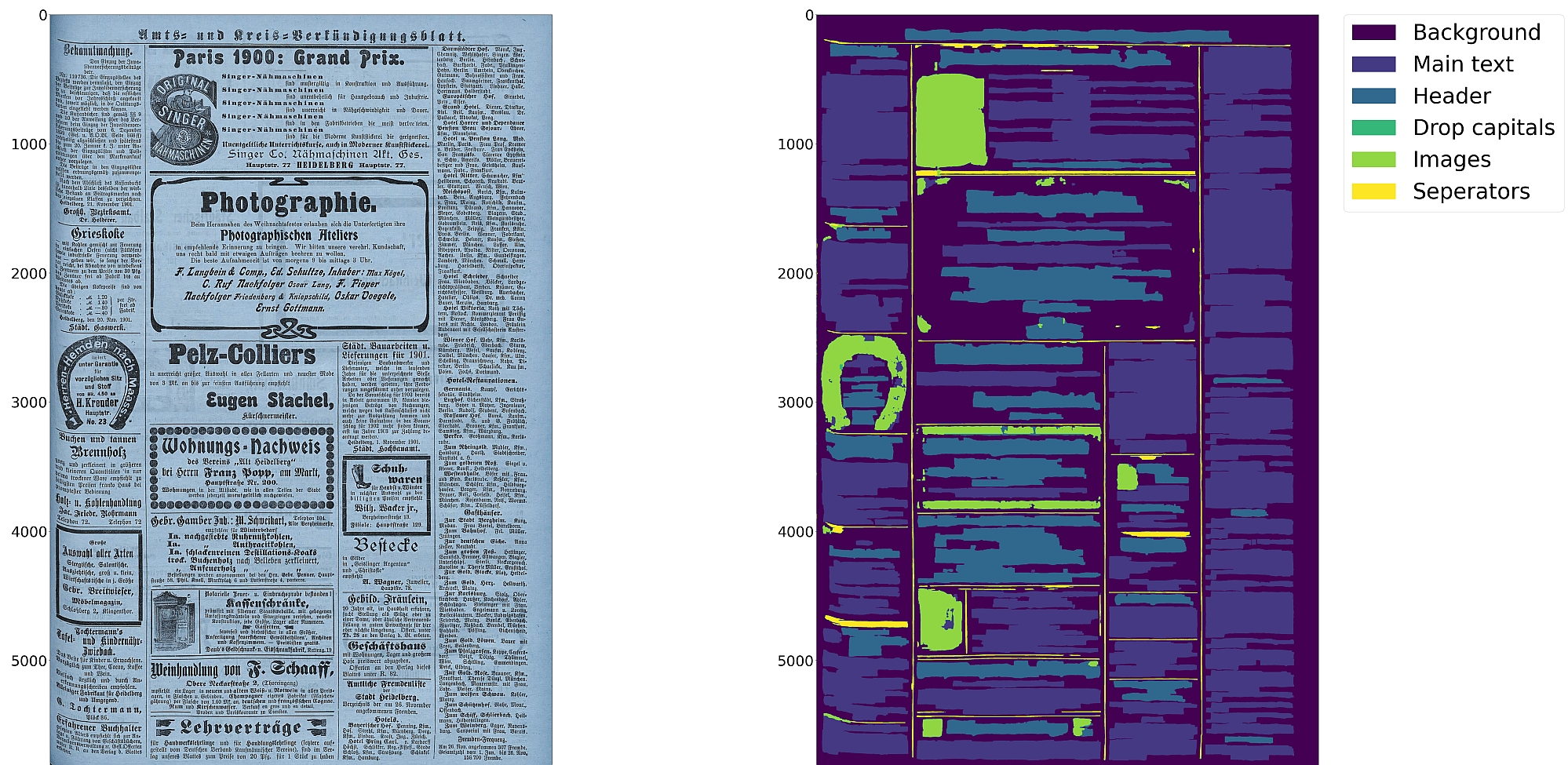

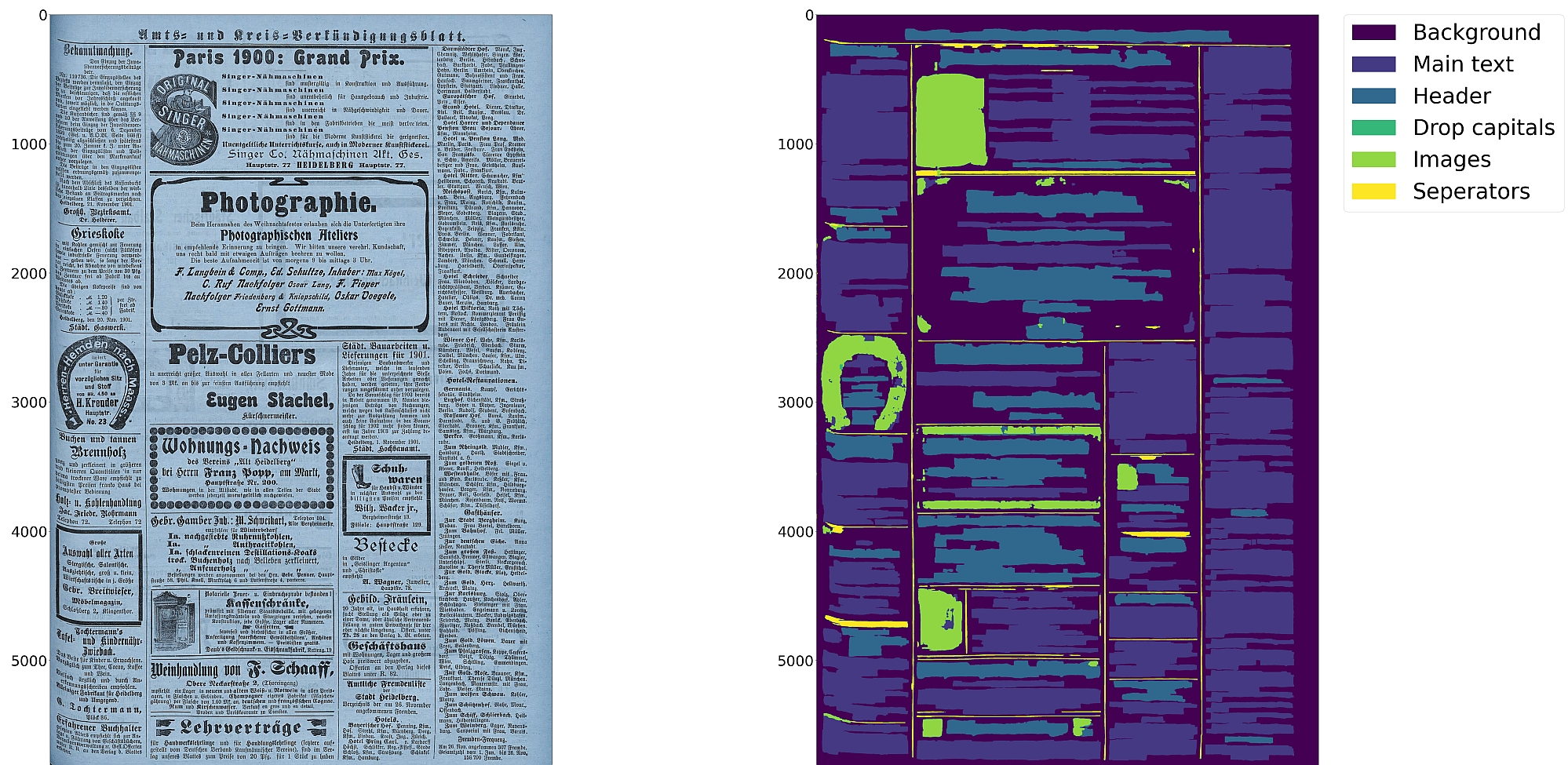

> Document Layout Analysis (segmentation) using pre-trained models and heuristics

|

> Document Layout Analysis with Deep Learning and Heuristics

|

||||||

|

|

||||||

[](https://pypi.org/project/eynollah/)

|

[](https://pypi.org/project/eynollah/)

|

||||||

[](https://circleci.com/gh/qurator-spk/eynollah)

|

|

||||||

[](https://github.com/qurator-spk/eynollah/actions/workflows/test-eynollah.yml)

|

[](https://github.com/qurator-spk/eynollah/actions/workflows/test-eynollah.yml)

|

||||||

[](https://opensource.org/license/apache-2-0/)

|

[](https://opensource.org/license/apache-2-0/)

|

||||||

|

[](https://doi.org/10.1145/3604951.3605513)

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

|

@ -14,16 +14,18 @@

|

||||||

* Support for various image optimization operations:

|

* Support for various image optimization operations:

|

||||||

* cropping (border detection), binarization, deskewing, dewarping, scaling, enhancing, resizing

|

* cropping (border detection), binarization, deskewing, dewarping, scaling, enhancing, resizing

|

||||||

* Text line segmentation to bounding boxes or polygons (contours) including for curved lines and vertical text

|

* Text line segmentation to bounding boxes or polygons (contours) including for curved lines and vertical text

|

||||||

* Detection of reading order

|

* Detection of reading order (left-to-right or right-to-left)

|

||||||

* Output in [PAGE-XML](https://github.com/PRImA-Research-Lab/PAGE-XML)

|

* Output in [PAGE-XML](https://github.com/PRImA-Research-Lab/PAGE-XML)

|

||||||

* [OCR-D](https://github.com/qurator-spk/eynollah#use-as-ocr-d-processor) interface

|

* [OCR-D](https://github.com/qurator-spk/eynollah#use-as-ocr-d-processor) interface

|

||||||

|

|

||||||

|

:warning: Development is currently focused on achieving the best possible quality of results for a wide variety of historical documents and therefore processing can be very slow. We aim to improve this, but contributions are welcome.

|

||||||

|

|

||||||

## Installation

|

## Installation

|

||||||

Python versions `3.8-3.11` with Tensorflow versions >=`2.12` are currently supported.

|

Python `3.8-3.11` with Tensorflow `2.12-2.15` on Linux are currently supported.

|

||||||

|

|

||||||

For (limited) GPU support the CUDA toolkit needs to be installed.

|

For (limited) GPU support the CUDA toolkit needs to be installed.

|

||||||

|

|

||||||

You can either install via

|

You can either install from PyPI

|

||||||

|

|

||||||

```

|

```

|

||||||

pip install eynollah

|

pip install eynollah

|

||||||

|

|

@ -39,45 +41,49 @@ cd eynollah; pip install -e .

|

||||||

Alternatively, you can run `make install` or `make install-dev` for editable installation.

|

Alternatively, you can run `make install` or `make install-dev` for editable installation.

|

||||||

|

|

||||||

## Models

|

## Models

|

||||||

Pre-trained models can be downloaded from [qurator-data.de](https://qurator-data.de/eynollah/).

|

Pre-trained models can be downloaded from [qurator-data.de](https://qurator-data.de/eynollah/) or [huggingface](https://huggingface.co/SBB?search_models=eynollah).

|

||||||

|

|

||||||

In case you want to train your own model to use with Eynollah, have a look at [sbb_pixelwise_segmentation](https://github.com/qurator-spk/sbb_pixelwise_segmentation).

|

## Train

|

||||||

|

🚧 **Work in progress**

|

||||||

|

|

||||||

|

In case you want to train your own model, have a look at [`sbb_pixelwise_segmentation`](https://github.com/qurator-spk/sbb_pixelwise_segmentation).

|

||||||

|

|

||||||

## Usage

|

## Usage

|

||||||

The command-line interface can be called like this:

|

The command-line interface can be called like this:

|

||||||

|

|

||||||

```sh

|

```sh

|

||||||

eynollah \

|

eynollah \

|

||||||

-i <image file> \

|

-i <single image file> | -di <directory containing image files> \

|

||||||

-o <output directory> \

|

-o <output directory> \

|

||||||

-m <path to directory containing model files> \

|

-m <directory containing model files> \

|

||||||

[OPTIONS]

|

[OPTIONS]

|

||||||

```

|

```

|

||||||

|

|

||||||

The following options can be used to further configure the processing:

|

The following options can be used to further configure the processing:

|

||||||

|

|

||||||

| option | description |

|

| option | description |

|

||||||

|----------|:-------------|

|

|-------------------|:-------------------------------------------------------------------------------|

|

||||||

| `-fl` | full layout analysis including all steps and segmentation classes |

|

| `-fl` | full layout analysis including all steps and segmentation classes |

|

||||||

| `-light` | lighter and faster but simpler method for main region detection and deskewing |

|

| `-light` | lighter and faster but simpler method for main region detection and deskewing |

|

||||||

| `-tab` | apply table detection |

|

| `-tab` | apply table detection |

|

||||||

| `-ae` | apply enhancement (the resulting image is saved to the output directory) |

|

| `-ae` | apply enhancement (the resulting image is saved to the output directory) |

|

||||||

| `-as` | apply scaling |

|

| `-as` | apply scaling |

|

||||||

| `-cl` | apply contour detection for curved text lines instead of bounding boxes |

|

| `-cl` | apply contour detection for curved text lines instead of bounding boxes |

|

||||||

| `-ib` | apply binarization (the resulting image is saved to the output directory) |

|

| `-ib` | apply binarization (the resulting image is saved to the output directory) |

|

||||||

| `-ep` | enable plotting (MUST always be used with `-sl`, `-sd`, `-sa`, `-si` or `-ae`) |

|

| `-ep` | enable plotting (MUST always be used with `-sl`, `-sd`, `-sa`, `-si` or `-ae`) |

|

||||||

| `-ho` | ignore headers for reading order dectection |

|

| `-eoi` | extract only images to output directory (other processing will not be done) |

|

||||||

| `-di <directory>` | process all images in a directory in batch mode |

|

| `-ho` | ignore headers for reading order dectection |

|

||||||

| `-si <directory>` | save image regions detected to this directory |

|

| `-si <directory>` | save image regions detected to this directory |

|

||||||

| `-sd <directory>` | save deskewed image to this directory |

|

| `-sd <directory>` | save deskewed image to this directory |

|

||||||

| `-sl <directory>` | save layout prediction as plot to this directory |

|

| `-sl <directory>` | save layout prediction as plot to this directory |

|

||||||

| `-sp <directory>` | save cropped page image to this directory |

|

| `-sp <directory>` | save cropped page image to this directory |

|

||||||

| `-sa <directory>` | save all (plot, enhanced/binary image, layout) to this directory |

|

| `-sa <directory>` | save all (plot, enhanced/binary image, layout) to this directory |

|

||||||

|

|

||||||

If no option is set, the tool will perform layout detection of main regions (background, text, images, separators and marginals).

|

If no option is set, the tool performs layout detection of main regions (background, text, images, separators and marginals).

|

||||||

The tool produces better quality output when RGB images are used as input than greyscale or binarized images.

|

The best output quality is produced when RGB images are used as input rather than greyscale or binarized images.

|

||||||

|

|

||||||

#### Use as OCR-D processor

|

#### Use as OCR-D processor

|

||||||

|

🚧 **Work in progress**

|

||||||

|

|

||||||

Eynollah ships with a CLI interface to be used as [OCR-D](https://ocr-d.de) processor.

|

Eynollah ships with a CLI interface to be used as [OCR-D](https://ocr-d.de) processor.

|

||||||

|

|

||||||

|

|

@ -95,11 +101,14 @@ ocrd-eynollah-segment -I OCR-D-IMG-BIN -O SEG-LINE -P models

|

||||||

|

|

||||||

uses the original (RGB) image despite any binarization that may have occured in previous OCR-D processing steps

|

uses the original (RGB) image despite any binarization that may have occured in previous OCR-D processing steps

|

||||||

|

|

||||||

|

#### Additional documentation

|

||||||

|

Please check the [wiki](https://github.com/qurator-spk/eynollah/wiki).

|

||||||

|

|

||||||

## How to cite

|

## How to cite

|

||||||

If you find this tool useful in your work, please consider citing our paper:

|

If you find this tool useful in your work, please consider citing our paper:

|

||||||

|

|

||||||

```bibtex

|

```bibtex

|

||||||

@inproceedings{rezanezhad2023eynollah,

|

@inproceedings{hip23rezanezhad,

|

||||||

title = {Document Layout Analysis with Deep Learning and Heuristics},

|

title = {Document Layout Analysis with Deep Learning and Heuristics},

|

||||||

author = {Rezanezhad, Vahid and Baierer, Konstantin and Gerber, Mike and Labusch, Kai and Neudecker, Clemens},

|

author = {Rezanezhad, Vahid and Baierer, Konstantin and Gerber, Mike and Labusch, Kai and Neudecker, Clemens},

|

||||||

booktitle = {Proceedings of the 7th International Workshop on Historical Document Imaging and Processing {HIP} 2023,

|

booktitle = {Proceedings of the 7th International Workshop on Historical Document Imaging and Processing {HIP} 2023,

|

||||||

|

|

|

||||||

|

|

@ -1 +1 @@

|

||||||

qurator/eynollah/ocrd-tool.json

|

src/eynollah/ocrd-tool.json

|

||||||

|

|

@ -1,34 +1,43 @@

|

||||||

[build-system]

|

[build-system]

|

||||||

requires = ["setuptools>=61.0"]

|

requires = ["setuptools>=61.0", "wheel", "setuptools-ocrd"]

|

||||||

build-backend = "setuptools.build_meta"

|

|

||||||

|

|

||||||

[project]

|

[project]

|

||||||

name = "eynollah"

|

name = "eynollah"

|

||||||

version = "0.1.0"

|

authors = [

|

||||||

|

{name = "Vahid Rezanezhad"},

|

||||||

|

{name = "Staatsbibliothek zu Berlin - Preußischer Kulturbesitz"},

|

||||||

|

]

|

||||||

|

description = "Document Layout Analysis"

|

||||||

|

readme = "README.md"

|

||||||

|

license.file = "LICENSE"

|

||||||

|

requires-python = ">=3.8"

|

||||||

|

keywords = ["document layout analysis", "image segmentation"]

|

||||||

|

|

||||||

|

dynamic = ["dependencies", "version"]

|

||||||

|

|

||||||

|

classifiers = [

|

||||||

|

"Development Status :: 4 - Beta",

|

||||||

dependencies = [

|

"Environment :: Console",

|

||||||

"ocrd >= 2.23.3",

|

"Intended Audience :: Science/Research",

|

||||||

"tensorflow == 2.12.1",

|

"License :: OSI Approved :: Apache Software License",

|

||||||

"scikit-learn >= 0.23.2",

|

"Programming Language :: Python :: 3",

|

||||||

"imutils >= 0.5.3",

|

"Programming Language :: Python :: 3 :: Only",

|

||||||

"numpy < 1.24.0",

|

"Topic :: Scientific/Engineering :: Image Processing",

|

||||||

"matplotlib",

|

|

||||||

"torch == 2.0.1",

|

|

||||||

"transformers == 4.30.2",

|

|

||||||

"numba == 0.58.1",

|

|

||||||

]

|

]

|

||||||

|

|

||||||

[project.scripts]

|

[project.scripts]

|

||||||

eynollah = "qurator.eynollah.cli:main"

|

eynollah = "eynollah.cli:main"

|

||||||

ocrd-eynollah-segment="qurator.eynollah.ocrd_cli:main"

|

ocrd-eynollah-segment = "eynollah.ocrd_cli:main"

|

||||||

|

|

||||||

|

[project.urls]

|

||||||

|

Homepage = "https://github.com/qurator-spk/eynollah"

|

||||||

|

Repository = "https://github.com/qurator-spk/eynollah.git"

|

||||||

|

|

||||||

|

[tool.setuptools.dynamic]

|

||||||

|

dependencies = {file = ["requirements.txt"]}

|

||||||

|

|

||||||

[tool.setuptools.packages.find]

|

[tool.setuptools.packages.find]

|

||||||

where = ["."]

|

where = ["src"]

|

||||||

include = ["qurator"]

|

|

||||||

|

|

||||||

[tool.setuptools.package-data]

|

[tool.setuptools.package-data]

|

||||||

"*" = ["*.json", '*.yml', '*.xml', '*.xsd']

|

"*" = ["*.json", '*.yml', '*.xml', '*.xsd']

|

||||||

|

|

|

||||||

|

|

@ -1 +0,0 @@

|

||||||

__import__("pkg_resources").declare_namespace(__name__)

|

|

||||||

|

|

@ -1 +0,0 @@

|

||||||

|

|

||||||

|

|

@ -1,8 +1,9 @@

|

||||||

import sys

|

import sys

|

||||||

import click

|

import click

|

||||||

from ocrd_utils import initLogging, setOverrideLogLevel

|

from ocrd_utils import initLogging, setOverrideLogLevel

|

||||||

from qurator.eynollah.eynollah import Eynollah

|

from eynollah.eynollah import Eynollah

|

||||||

from qurator.eynollah.sbb_binarize import SbbBinarizer

|

from eynollah.eynollah import Eynollah

|

||||||

|

from eynollah.sbb_binarize import SbbBinarizer

|

||||||

|

|

||||||

@click.group()

|

@click.group()

|

||||||

def main():

|

def main():

|

||||||

|

|

@ -146,6 +147,12 @@ def binarization(patches, model_dir, input_image, output_image, dir_in, dir_out)

|

||||||

is_flag=True,

|

is_flag=True,

|

||||||

help="If set, will plot intermediary files and images",

|

help="If set, will plot intermediary files and images",

|

||||||

)

|

)

|

||||||

|

@click.option(

|

||||||

|

"--extract_only_images/--disable-extracting_only_images",

|

||||||

|

"-eoi/-noeoi",

|

||||||

|

is_flag=True,

|

||||||

|

help="If a directory is given, only images in documents will be cropped and saved there and the other processing will not be done",

|

||||||

|

)

|

||||||

@click.option(

|

@click.option(

|

||||||

"--allow-enhancement/--no-allow-enhancement",

|

"--allow-enhancement/--no-allow-enhancement",

|

||||||

"-ae/-noae",

|

"-ae/-noae",

|

||||||

|

|

@ -247,7 +254,7 @@ def binarization(patches, model_dir, input_image, output_image, dir_in, dir_out)

|

||||||

help="Override log level globally to this",

|

help="Override log level globally to this",

|

||||||

)

|

)

|

||||||

|

|

||||||

def layout(image, out, dir_in, model, save_images, save_layout, save_deskewed, save_all, save_page, enable_plotting, allow_enhancement, curved_line, textline_light, full_layout, tables, right2left, input_binary, allow_scaling, headers_off, light_version, reading_order_machine_based, do_ocr, num_col_upper, num_col_lower, skip_layout_and_reading_order, ignore_page_extraction, log_level):

|

def layout(image, out, dir_in, model, save_images, save_layout, save_deskewed, save_all, extract_only_images, save_page, enable_plotting, allow_enhancement, curved_line, textline_light, full_layout, tables, right2left, input_binary, allow_scaling, headers_off, light_version, reading_order_machine_based, do_ocr, num_col_upper, num_col_lower, skip_layout_and_reading_order, ignore_page_extraction, log_level):

|

||||||

if log_level:

|

if log_level:

|

||||||

setOverrideLogLevel(log_level)

|

setOverrideLogLevel(log_level)

|

||||||

initLogging()

|

initLogging()

|

||||||

|

|

@ -260,6 +267,9 @@ def layout(image, out, dir_in, model, save_images, save_layout, save_deskewed, s

|

||||||

if textline_light and not light_version:

|

if textline_light and not light_version:

|

||||||

print('Error: You used -tll to enable light textline detection but -light is not enabled')

|

print('Error: You used -tll to enable light textline detection but -light is not enabled')

|

||||||

sys.exit(1)

|

sys.exit(1)

|

||||||

|

|

||||||

|

if extract_only_images and (allow_enhancement or allow_scaling or light_version or curved_line or textline_light or full_layout or tables or right2left or headers_off) :

|

||||||

|

print('Error: You used -eoi which can not be enabled alongside light_version -light or allow_scaling -as or allow_enhancement -ae or curved_line -cl or textline_light -tll or full_layout -fl or tables -tab or right2left -r2l or headers_off -ho')

|

||||||

if light_version and not textline_light:

|

if light_version and not textline_light:

|

||||||

print('Error: You used -light without -tll. Light version need light textline to be enabled.')

|

print('Error: You used -light without -tll. Light version need light textline to be enabled.')

|

||||||

sys.exit(1)

|

sys.exit(1)

|

||||||
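The guard added in this hunk rejects `-eoi` when any other processing flag is enabled. A minimal standalone sketch of that check follows. It is an illustration only, not the project's code: `conflicting_flags` is a hypothetical helper, and the flag names passed to it are taken from the `layout` signature shown above. As shown in this hunk, the new check prints its message without a following `sys.exit(1)`, so the sketch leaves the decision to abort to the caller.

```python
def conflicting_flags(extract_only_images, **other_flags):
    """Return the names of enabled flags that cannot be combined with -eoi.

    Hypothetical helper for illustration; not part of eynollah.
    """
    if not extract_only_images:
        # Without -eoi there is nothing to conflict with.
        return []
    # Collect every flag that is switched on, sorted for a stable message.
    return sorted(name for name, enabled in other_flags.items() if enabled)


conflicts = conflicting_flags(
    True,
    allow_enhancement=False,
    full_layout=True,
    tables=True,
)
if conflicts:
    print("Error: -eoi cannot be combined with: " + ", ".join(conflicts))
```

Returning the list of offending names, rather than a boolean, lets the error message name exactly the flags the user has to drop.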

|

|

@ -269,6 +279,7 @@ def layout(image, out, dir_in, model, save_images, save_layout, save_deskewed, s

|

||||||

dir_in=dir_in,

|

dir_in=dir_in,

|

||||||

dir_models=model,

|

dir_models=model,

|

||||||

dir_of_cropped_images=save_images,

|

dir_of_cropped_images=save_images,

|

||||||

|

extract_only_images=extract_only_images,

|

||||||

dir_of_layout=save_layout,

|

dir_of_layout=save_layout,

|

||||||

dir_of_deskewed=save_deskewed,

|

dir_of_deskewed=save_deskewed,

|

||||||

dir_of_all=save_all,

|

dir_of_all=save_all,

|

||||||

File diff suppressed because it is too large

|

|

@ -1,5 +1,5 @@

|

||||||

{

|

{

|

||||||

"version": "0.3.0",

|

"version": "0.3.1",

|

||||||

"git_url": "https://github.com/qurator-spk/eynollah",

|

"git_url": "https://github.com/qurator-spk/eynollah",

|

||||||

"tools": {

|

"tools": {

|

||||||

"ocrd-eynollah-segment": {

|

"ocrd-eynollah-segment": {

|

||||||

|

|

@ -52,10 +52,10 @@

|

||||||

},

|

},

|

||||||

"resources": [

|

"resources": [

|

||||||

{

|

{

|

||||||

"description": "models for eynollah (TensorFlow format)",

|

"description": "models for eynollah (TensorFlow SavedModel format)",

|

||||||

"url": "https://github.com/qurator-spk/eynollah/releases/download/v0.3.0/models_eynollah.tar.gz",

|

"url": "https://github.com/qurator-spk/eynollah/releases/download/v0.3.1/models_eynollah.tar.gz",

|

||||||

"name": "default",

|

"name": "default",

|

||||||

"size": 1761991295,

|

"size": 1894627041,

|

||||||

"type": "archive",

|

"type": "archive",

|

||||||

"path_in_archive": "models_eynollah"

|

"path_in_archive": "models_eynollah"

|

||||||

}

|

}

|

||||||

|

|

@ -42,7 +42,7 @@ class EynollahProcessor(Processor):

|

||||||

page = pcgts.get_Page()

|

page = pcgts.get_Page()

|

||||||

# XXX loses DPI information

|

# XXX loses DPI information

|

||||||

# page_image, _, _ = self.workspace.image_from_page(page, page_id, feature_filter='binarized')

|

# page_image, _, _ = self.workspace.image_from_page(page, page_id, feature_filter='binarized')

|

||||||

image_filename = self.workspace.download_file(next(self.workspace.mets.find_files(url=page.imageFilename))).local_filename

|

image_filename = self.workspace.download_file(next(self.workspace.mets.find_files(local_filename=page.imageFilename))).local_filename

|

||||||

eynollah_kwargs = {

|

eynollah_kwargs = {

|

||||||

'dir_models': self.resolve_resource(self.parameter['models']),

|

'dir_models': self.resolve_resource(self.parameter['models']),

|

||||||

'allow_enhancement': False,

|

'allow_enhancement': False,

|

||||||

|

|

@ -202,10 +202,18 @@ class EynollahXmlWriter():

|

||||||

page.add_ImageRegion(img_region)

|

page.add_ImageRegion(img_region)

|

||||||

points_co = ''

|

points_co = ''

|

||||||

for lmm in range(len(found_polygons_text_region_img[mm])):

|

for lmm in range(len(found_polygons_text_region_img[mm])):

|

||||||

points_co += str(int((found_polygons_text_region_img[mm][lmm,0,0] + page_coord[2]) / self.scale_x))

|

try:

|

||||||

points_co += ','

|

points_co += str(int((found_polygons_text_region_img[mm][lmm,0,0] + page_coord[2]) / self.scale_x))

|

||||||

points_co += str(int((found_polygons_text_region_img[mm][lmm,0,1] + page_coord[0]) / self.scale_y))

|

points_co += ','

|

||||||

points_co += ' '

|

points_co += str(int((found_polygons_text_region_img[mm][lmm,0,1] + page_coord[0]) / self.scale_y))

|

||||||

|

points_co += ' '

|

||||||

|

except:

|

||||||

|

|

||||||

|

points_co += str(int((found_polygons_text_region_img[mm][lmm][0] + page_coord[2])/ self.scale_x ))

|

||||||

|

points_co += ','

|

||||||

|

points_co += str(int((found_polygons_text_region_img[mm][lmm][1] + page_coord[0])/ self.scale_y ))

|

||||||

|

points_co += ' '

|

||||||

|

|

||||||

img_region.get_Coords().set_points(points_co[:-1])

|

img_region.get_Coords().set_points(points_co[:-1])

|

||||||

|

|

||||||

for mm in range(len(polygons_lines_to_be_written_in_xml)):

|

for mm in range(len(polygons_lines_to_be_written_in_xml)):

|

||||||
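The `try`/`except` added in this hunk handles two polygon layouts: points wrapped in an extra dimension (indexed as `[lmm,0,0]`) and flat points (indexed as `[lmm][0]`). A minimal sketch of the same coordinate formatting with an explicit shape check instead of a bare `except` is given below. It is an assumption-laden illustration, not the writer's implementation: `format_points` and its parameter names are hypothetical, and plain nested lists stand in for the arrays used in the original.

```python
def format_points(polygon, x_offset, y_offset, scale_x, scale_y):
    """Build a PAGE-XML style points string such as "15,25 35,45".

    Hypothetical helper for illustration. Accepts points given either
    as [[x, y]] (one extra wrapping level) or as [x, y].
    """
    parts = []
    for point in polygon:
        # Unwrap the extra level if the point is stored as [[x, y]].
        if len(point) == 1:
            point = point[0]
        x, y = point[0], point[1]
        # Shift by the page offset, undo the scaling, truncate to int.
        px = int((x + x_offset) / scale_x)
        py = int((y + y_offset) / scale_y)
        parts.append(f"{px},{py}")
    # Joining with spaces avoids trimming a trailing separator afterwards.
    return " ".join(parts)


wrapped = [[[10, 20]], [[30, 40]]]
flat = [[10, 20], [30, 40]]
print(format_points(wrapped, 5, 5, 1.0, 1.0))
print(format_points(flat, 5, 5, 1.0, 1.0))
```

Checking the point layout explicitly keeps unrelated errors (for example a missing scale factor) from being silently routed into the fallback branch.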

|

|

@ -1,5 +1,5 @@

|

||||||

from tests.base import main

|

from tests.base import main

|

||||||

from qurator.eynollah.utils.counter import EynollahIdCounter

|

from eynollah.utils.counter import EynollahIdCounter

|

||||||

|

|

||||||

def test_counter_string():

|

def test_counter_string():

|

||||||

c = EynollahIdCounter()

|

c = EynollahIdCounter()

|

||||||

|

|

|

||||||

|

|

@ -1,6 +1,6 @@

|

||||||

import cv2

|

import cv2

|

||||||

from pathlib import Path

|

from pathlib import Path

|

||||||

from qurator.eynollah.utils.pil_cv2 import check_dpi

|

from eynollah.utils.pil_cv2 import check_dpi

|

||||||

from tests.base import main

|

from tests.base import main

|

||||||

|

|

||||||

def test_dpi():

|

def test_dpi():

|

||||||

|

|

|

||||||

|

|

@ -2,7 +2,7 @@ from os import environ

|

||||||

from pathlib import Path

|

from pathlib import Path

|

||||||

from ocrd_utils import pushd_popd

|

from ocrd_utils import pushd_popd

|

||||||

from tests.base import CapturingTestCase as TestCase, main

|

from tests.base import CapturingTestCase as TestCase, main

|

||||||

from qurator.eynollah.cli import main as eynollah_cli

|

from eynollah.cli import main as eynollah_cli

|

||||||

|

|

||||||

testdir = Path(__file__).parent.resolve()

|

testdir = Path(__file__).parent.resolve()

|

||||||

|

|

||||||

|

|

|

||||||

|

|

@ -1,7 +1,7 @@

|

||||||

def test_utils_import():

|

def test_utils_import():

|

||||||

import qurator.eynollah.utils

|

import eynollah.utils

|

||||||

import qurator.eynollah.utils.contour

|

import eynollah.utils.contour

|

||||||

import qurator.eynollah.utils.drop_capitals

|

import eynollah.utils.drop_capitals

|

||||||

import qurator.eynollah.utils.drop_capitals

|

import eynollah.utils.drop_capitals

|

||||||

import qurator.eynollah.utils.is_nan

|

import eynollah.utils.is_nan

|

||||||

import qurator.eynollah.utils.rotate

|

import eynollah.utils.rotate

|

||||||

|

|

|

||||||

|

|

@ -1,5 +1,5 @@

|

||||||

from pytest import main

|

from pytest import main

|

||||||

from qurator.eynollah.utils.xml import create_page_xml

|

from eynollah.utils.xml import create_page_xml

|

||||||

from ocrd_models.ocrd_page import to_xml

|

from ocrd_models.ocrd_page import to_xml

|

||||||

|

|

||||||

PAGE_2019 = 'http://schema.primaresearch.org/PAGE/gts/pagecontent/2019-07-15'

|

PAGE_2019 = 'http://schema.primaresearch.org/PAGE/gts/pagecontent/2019-07-15'

|

||||||

|

|

|

||||||
