mirror of

https://github.com/qurator-spk/eynollah.git

synced 2026-05-26 07:39:22 +02:00

Merge pull request #86 from qurator-spk/eynollah_light

Eynollah light integration

commit

fd9431a678

10 changed files with 1361 additions and 580 deletions

|

|

@ -3,8 +3,9 @@ version: 2

|

||||||

jobs:

|

jobs:

|

||||||

|

|

||||||

build-python37:

|

build-python37:

|

||||||

docker:

|

machine:

|

||||||

- image: python:3.7

|

- image: ubuntu-2004:2023.02.1

|

||||||

|

|

||||||

steps:

|

steps:

|

||||||

- checkout

|

- checkout

|

||||||

- restore_cache:

|

- restore_cache:

|

||||||

|

|

@ -16,12 +17,15 @@ jobs:

|

||||||

paths:

|

paths:

|

||||||

models_eynollah.tar.gz

|

models_eynollah.tar.gz

|

||||||

models_eynollah

|

models_eynollah

|

||||||

|

- run:

|

||||||

|

name: "Set Python Version"

|

||||||

|

command: pyenv install -s 3.7.16 && pyenv global 3.7.16

|

||||||

- run: make install

|

- run: make install

|

||||||

- run: make smoke-test

|

- run: make smoke-test

|

||||||

|

|

||||||

build-python38:

|

build-python38:

|

||||||

docker:

|

machine:

|

||||||

- image: python:3.8

|

- image: ubuntu-2004:2023.02.1

|

||||||

steps:

|

steps:

|

||||||

- checkout

|

- checkout

|

||||||

- restore_cache:

|

- restore_cache:

|

||||||

|

|

@ -33,6 +37,9 @@ jobs:

|

||||||

paths:

|

paths:

|

||||||

models_eynollah.tar.gz

|

models_eynollah.tar.gz

|

||||||

models_eynollah

|

models_eynollah

|

||||||

|

- run:

|

||||||

|

name: "Set Python Version"

|

||||||

|

command: pyenv install -s 3.8.16 && pyenv global 3.8.16

|

||||||

- run: make install

|

- run: make install

|

||||||

- run: make smoke-test

|

- run: make smoke-test

|

||||||

|

|

||||||

|

|

@ -40,6 +47,5 @@ workflows:

|

||||||

version: 2

|

version: 2

|

||||||

build:

|

build:

|

||||||

jobs:

|

jobs:

|

||||||

#- build-python36

|

|

||||||

- build-python37

|

- build-python37

|

||||||

- build-python38

|

- build-python38

|

||||||

|

|

|

||||||

2

.github/workflows/test-eynollah.yml

vendored

|

|

@ -11,7 +11,7 @@ jobs:

|

||||||

runs-on: ubuntu-latest

|

runs-on: ubuntu-latest

|

||||||

strategy:

|

strategy:

|

||||||

matrix:

|

matrix:

|

||||||

python-version: ['3.7'] # '3.8'

|

python-version: ['3.7', '3.8']

|

||||||

|

|

||||||

steps:

|

steps:

|

||||||

- uses: actions/checkout@v2

|

- uses: actions/checkout@v2

|

||||||

|

|

|

||||||

8

Makefile

|

|

@ -22,10 +22,14 @@ help:

|

||||||

models: models_eynollah

|

models: models_eynollah

|

||||||

|

|

||||||

models_eynollah: models_eynollah.tar.gz

|

models_eynollah: models_eynollah.tar.gz

|

||||||

tar xf models_eynollah.tar.gz

|

# tar xf models_eynollah_renamed.tar.gz --transform 's/models_eynollah_renamed/models_eynollah/'

|

||||||

|

# tar xf models_eynollah_renamed.tar.gz

|

||||||

|

tar xf 2022-04-05.SavedModel.tar.gz --transform 's/models_eynollah_renamed/models_eynollah/'

|

||||||

|

|

||||||

models_eynollah.tar.gz:

|

models_eynollah.tar.gz:

|

||||||

wget 'https://qurator-data.de/eynollah/2021-04-25/models_eynollah.tar.gz'

|

# wget 'https://qurator-data.de/eynollah/2021-04-25/models_eynollah.tar.gz'

|

||||||

|

# wget 'https://qurator-data.de/eynollah/2022-04-05/models_eynollah_renamed.tar.gz'

|

||||||

|

wget 'https://ocr-d.kba.cloud/2022-04-05.SavedModel.tar.gz'

|

||||||

|

|

||||||

# Install with pip

|

# Install with pip

|

||||||

install:

|

install:

|

||||||

|

|

|

||||||

219

README.md

|

|

@ -1,186 +1,97 @@

|

||||||

# Eynollah

|

# Eynollah

|

||||||

> Perform document layout analysis (segmentation) from image data and return the results as [PAGE-XML](https://github.com/PRImA-Research-Lab/PAGE-XML).

|

> Document Layout Analysis (segmentation) using pre-trained models and heuristics

|

||||||

|

|

||||||

|

[](https://pypi.org/project/eynollah/)

|

||||||

|

[](https://circleci.com/gh/qurator-spk/eynollah)

|

||||||

|

[](https://github.com/qurator-spk/eynollah/actions/workflows/test-eynollah.yml)

|

||||||

|

[](https://opensource.org/license/apache-2-0/)

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

## Features

|

||||||

|

* Support for up to 10 segmentation classes:

|

||||||

|

* background, [page border](https://ocr-d.de/en/gt-guidelines/trans/lyRand.html), [text region](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_TextRegionType.html), [text line](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_TextLineType.html), [header](https://ocr-d.de/en/gt-guidelines/trans/lyUeberschrift.html), [image](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_ImageRegionType.html), [separator](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_SeparatorRegionType.html), [marginalia](https://ocr-d.de/en/gt-guidelines/trans/lyMarginalie.html), [initial](https://ocr-d.de/en/gt-guidelines/trans/lyInitiale.html), [table](https://ocr-d.de/en/gt-guidelines/trans/lyTabellen.html)

|

||||||

|

* Support for various image optimization operations:

|

||||||

|

* cropping (border detection), binarization, deskewing, dewarping, scaling, enhancing, resizing

|

||||||

|

* Text line segmentation to bounding boxes or polygons (contours) including for curved lines and vertical text

|

||||||

|

* Detection of reading order

|

||||||

|

* Output in [PAGE-XML](https://github.com/PRImA-Research-Lab/PAGE-XML)

|

||||||

|

* [OCR-D](https://github.com/qurator-spk/eynollah#use-as-ocr-d-processor) interface

|

||||||

|

|

||||||

## Installation

|

## Installation

|

||||||

`pip install .` or

|

Python versions `3.7-3.10` with Tensorflow `>=2.4` are currently supported.

|

||||||

|

|

||||||

`pip install -e .` for editable installation

|

For (limited) GPU support the [matching](https://www.tensorflow.org/install/source#gpu) CUDA toolkit `>=10.1` needs to be installed.

|

||||||

|

|

||||||

Alternatively, you can also use `make` with these targets:

|

You can either install via

|

||||||

|

|

||||||

`make install` or

|

```

|

||||||

|

pip install eynollah

|

||||||

|

```

|

||||||

|

|

||||||

`make install-dev` for editable installation

|

or clone the repository, enter it and install (editable) with

|

||||||

|

|

||||||

The current version of Eynollah runs on Python `>=3.6` with Tensorflow `>=2.4`.

|

```

|

||||||

|

git clone git@github.com:qurator-spk/eynollah.git

|

||||||

|

cd eynollah; pip install -e .

|

||||||

|

```

|

||||||

|

|

||||||

In order to use a GPU for inference, the CUDA toolkit version 10.x needs to be installed.

|

Alternatively, you can run `make install` or `make install-dev` for editable installation.

|

||||||

|

|

||||||

### Models

|

|

||||||

|

|

||||||

In order to run this tool you need trained models. You can download our pretrained models from [qurator-data.de](https://qurator-data.de/eynollah/).

|

|

||||||

|

|

||||||

Alternatively, running `make models` will download and extract models to `$(PWD)/models_eynollah`.

|

|

||||||

|

|

||||||

### Training

|

|

||||||

|

|

||||||

In case you want to train your own model to use with Eynollah, have a look at [sbb_pixelwise_segmentation](https://github.com/qurator-spk/sbb_pixelwise_segmentation).

|

|

||||||

|

|

||||||

## Usage

|

## Usage

|

||||||

|

|

||||||

The command-line interface can be called like this:

|

The command-line interface can be called like this:

|

||||||

|

|

||||||

```sh

|

```sh

|

||||||

eynollah \

|

eynollah \

|

||||||

-i <image file name> \

|

-i <image file> \

|

||||||

-o <directory to write output xml or enhanced image> \

|

-o <output directory> \

|

||||||

-m <directory of models> \

|

-m <path to directory containing model files> \

|

||||||

-fl <if true, the tool will perform full layout analysis> \

|

[OPTIONS]

|

||||||

-ae <if true, the tool will resize and enhance the image and produce the resulting image as output. The rescaled and enhanced image will be saved in output directory> \

|

|

||||||

-as <if true, the tool will check whether the document needs rescaling or not> \

|

|

||||||

-cl <if true, the tool will extract the contours of curved textlines instead of rectangle bounding boxes> \

|

|

||||||

-si <if a directory is given here, the tool will output image regions inside documents there> \

|

|

||||||

-sd <if a directory is given, deskewed image will be saved there> \

|

|

||||||

-sa <if a directory is given, all plots needed for documentation will be saved there> \

|

|

||||||

-tab <if true, this tool will try to detect tables> \

|

|

||||||

-ib <in general, eynollah uses RGB as input but if the input document is strongly dark, bright or for any other reason you can turn binarized input on. This option does not mean that you have to provide a binary image, otherwise this means that the tool itself will binarized the RGB input document> \

|

|

||||||

-ho <if true, this tool would ignore headers role in reading order detection> \

|

|

||||||

-sl <if a directory is given, plot of layout will be saved there> \

|

|

||||||

-ep <if true, the tool will be enabled to save desired plot. This should be true alongside with -sl, -sd, -sa , -si or -ae options>

|

|

||||||

|

|

||||||

```

|

```

|

||||||

|

|

||||||

The tool performs better with RGB images than greyscale/binarized images.

|

The following options can be used to further configure the processing:

|

||||||

|

|

||||||

## Documentation

|

| option | description |

|

||||||

|

|----------|:-------------|

|

||||||

|

| `-fl` | full layout analysis including all steps and segmentation classes |

|

||||||

|

| `-light` | lighter and faster but simpler method for main region detection and deskewing |

|

||||||

|

| `-tab` | apply table detection |

|

||||||

|

| `-ae` | apply enhancement (the resulting image is saved to the output directory) |

|

||||||

|

| `-as` | apply scaling |

|

||||||

|

| `-cl` | apply contour detection for curved text lines instead of bounding boxes |

|

||||||

|

| `-ib` | apply binarization (the resulting image is saved to the output directory) |

|

||||||

|

| `-ep` | enable plotting (MUST always be used with `-sl`, `-sd`, `-sa`, `-si` or `-ae`) |

|

||||||

|

| `-ho` | ignore headers for reading order detection |

|

||||||

|

| `-di <directory>` | process all images in a directory in batch mode |

|

||||||

|

| `-si <directory>` | save image regions detected to this directory |

|

||||||

|

| `-sd <directory>` | save deskewed image to this directory |

|

||||||

|

| `-sl <directory>` | save layout prediction as plot to this directory |

|

||||||

|

| `-sp <directory>` | save cropped page image to this directory |

|

||||||

|

| `-sa <directory>` | save all (plot, enhanced/binary image, layout) to this directory |

|

||||||

|

|

||||||

<details>

|

If no option is set, the tool will perform layout detection of main regions (background, text, images, separators and marginals).
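As an illustration of the invocation pattern and option table above, the argument list can be assembled programmatically. This is only a sketch: `build_eynollah_command` is a hypothetical helper, not part of Eynollah, and the file and directory names are placeholders.

```python
def build_eynollah_command(image, out_dir, model_dir, *options):
    """Assemble an argument list for the eynollah command-line interface.

    `options` are extra switches taken from the option table, e.g. "-fl".
    """
    return ["eynollah", "-i", image, "-o", out_dir, "-m", model_dir, *options]


# Placeholder paths; full layout analysis combined with the light method.
cmd = build_eynollah_command("page.png", "out", "models_eynollah", "-fl", "-light")
```

The resulting list can be handed to `subprocess.run(cmd, check=True)` once the tool and its models are installed.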

|

||||||

<summary>click to expand/collapse</summary>

|

|

||||||

|

|

||||||

### Region types

|

The tool produces better output from RGB images as input than greyscale or binarized images.

|

||||||

|

|

||||||

<details>

|

## Models

|

||||||

<summary>click to expand/collapse</summary><br/>

|

Pre-trained models can be downloaded from [qurator-data.de](https://qurator-data.de/eynollah/).

|

||||||

|

|

||||||

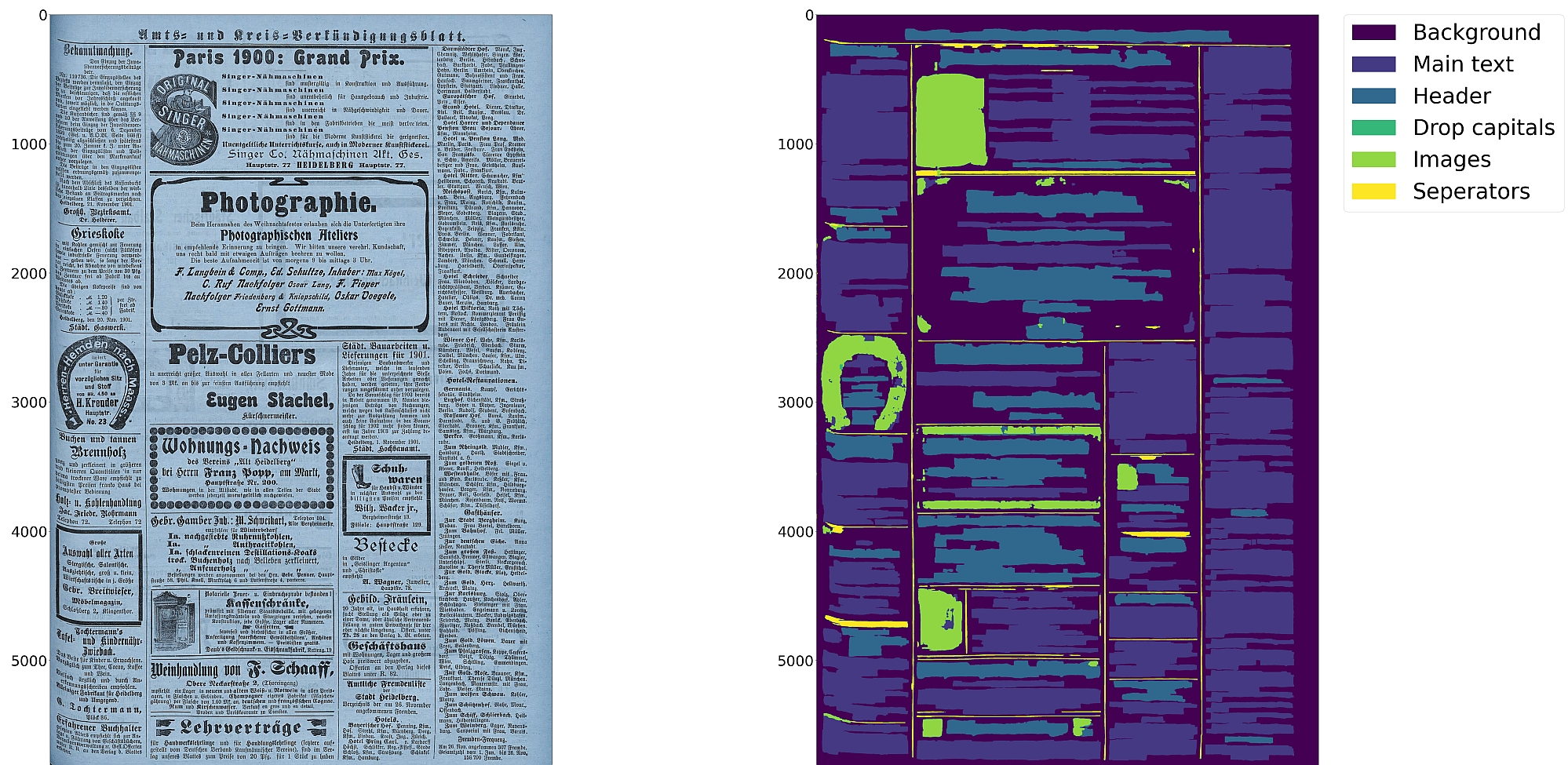

Eynollah can currently be used to detect the following region types/elements:

|

In case you want to train your own model to use with Eynollah, have a look at [sbb_pixelwise_segmentation](https://github.com/qurator-spk/sbb_pixelwise_segmentation).

|

||||||

* [Border](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_BorderType.html)

|

|

||||||

* [Textregion](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_TextRegionType.html)

|

|

||||||

* [Textline](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_TextLineType.html)

|

|

||||||

* [Image](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_ImageRegionType.html)

|

|

||||||

* [Separator](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_SeparatorRegionType.html)

|

|

||||||

* [Marginalia](https://ocr-d.de/en/gt-guidelines/trans/lyMarginalie.html)

|

|

||||||

* [Initial (Drop Capital)](https://ocr-d.de/en/gt-guidelines/trans/lyInitiale.html)

|

|

||||||

|

|

||||||

In addition, the tool can detect the [ReadingOrder](https://ocr-d.de/en/gt-guidelines/trans/lyLeserichtung.html) of regions. The final goal is to feed the output to an OCR model.

|

|

||||||

|

|

||||||

</details>

|

|

||||||

|

|

||||||

### Method description

|

|

||||||

|

|

||||||

<details>

|

|

||||||

<summary>click to expand/collapse</summary><br/>

|

|

||||||

|

|

||||||

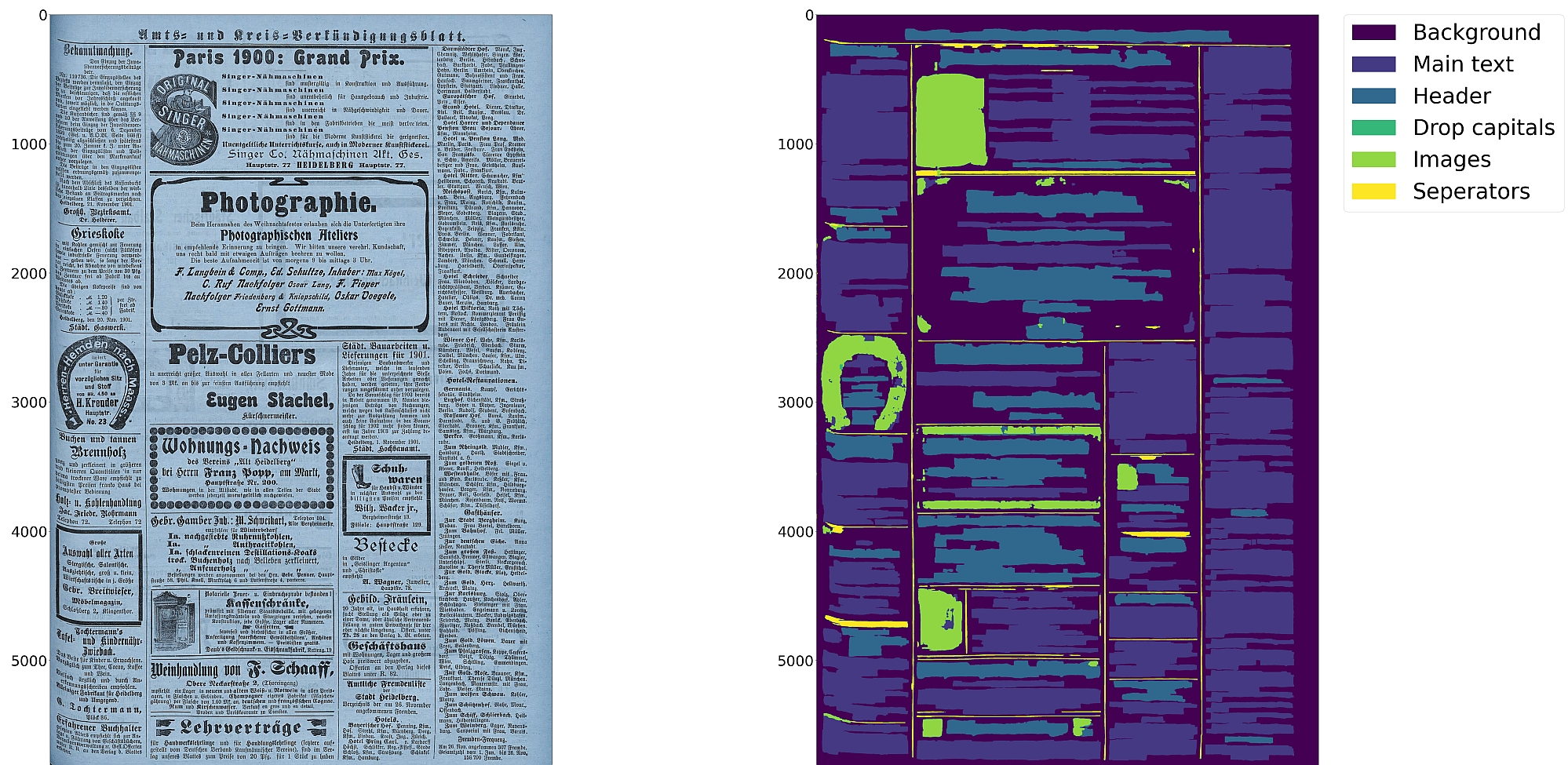

Eynollah uses a combination of various models and heuristics (see flowchart below for the different stages and how they interact):

|

|

||||||

* [Border detection](https://github.com/qurator-spk/eynollah#border-detection)

|

|

||||||

* [Layout detection](https://github.com/qurator-spk/eynollah#layout-detection)

|

|

||||||

* [Textline detection](https://github.com/qurator-spk/eynollah#textline-detection)

|

|

||||||

* [Image enhancement](https://github.com/qurator-spk/eynollah#Image_enhancement)

|

|

||||||

* [Scale classification](https://github.com/qurator-spk/eynollah#Scale_classification)

|

|

||||||

* [Heuristic methods](https://https://github.com/qurator-spk/eynollah#heuristic-methods)

|

|

||||||

|

|

||||||

The first three stages are based on [pixel-wise segmentation](https://github.com/qurator-spk/sbb_pixelwise_segmentation).

|

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

#### Border detection

|

|

||||||

For the purpose of text recognition (OCR) and in order to avoid noise being introduced from texts outside the printspace, one first needs to detect the border of the printed frame. This is done by a binary pixel-wise-segmentation model trained on a dataset of 2,000 documents where about 1,200 of them come from the [dhSegment](https://github.com/dhlab-epfl/dhSegment/) project (you can download the dataset from [here](https://github.com/dhlab-epfl/dhSegment/releases/download/v0.2/pages.zip)) and the remainder having been annotated in SBB. For border detection, the model needs to be fed with the whole image at once rather than separated in patches.

|

|

||||||

|

|

||||||

### Layout detection

|

|

||||||

As a next step, text regions need to be identified by means of layout detection. Again a pixel-wise segmentation model was trained on 131 labeled images from the SBB digital collections, including some data augmentation. Since the target of this tool are historical documents, we consider as main region types text regions, separators, images, tables and background - each with their own subclasses, e.g. in the case of text regions, subclasses like header/heading, drop capital, main body text etc. While it would be desirable to detect and classify each of these classes in a granular way, there are also limitations due to having a suitably large and balanced training set. Accordingly, the current version of this tool is focussed on the main region types background, text region, image and separator.

|

|

||||||

|

|

||||||

#### Textline detection

|

|

||||||

In a subsequent step, binary pixel-wise segmentation is used again to classify pixels in a document that constitute textlines. For textline segmentation, a model was initially trained on documents with only one column/block of text and some augmentation with regard to scaling. By fine-tuning the parameters also for multi-column documents, additional training data was produced that resulted in a much more robust textline detection model.

|

|

||||||

|

|

||||||

#### Image enhancement

|

|

||||||

This is an image to image model which input was low quality of an image and label was actually the original image. For this one we did not have any GT, so we decreased the quality of documents in SBB and then feed them into model.

|

|

||||||

|

|

||||||

#### Scale classification

|

|

||||||

This is simply an image classifier which classifies images based on their scales or better to say based on their number of columns.

|

|

||||||

|

|

||||||

### Heuristic methods

|

|

||||||

Some heuristic methods are also employed to further improve the model predictions:

|

|

||||||

* After border detection, the largest contour is determined by a bounding box, and the image cropped to these coordinates.

|

|

||||||

* For text region detection, the image is scaled up to make it easier for the model to detect background space between text regions.

|

|

||||||

* A minimum area is defined for text regions in relation to the overall image dimensions, so that very small regions that are noise can be filtered out.

|

|

||||||

* Deskewing is applied on the text region level (due to regions having different degrees of skew) in order to improve the textline segmentation result.

|

|

||||||

* After deskewing, a calculation of the pixel distribution on the X-axis allows the separation of textlines (foreground) and background pixels.

|

|

||||||

* Finally, using the derived coordinates, bounding boxes are determined for each textline.

|

|

||||||

|

|

||||||

</details>

|

|

||||||

|

|

||||||

### Model description

|

|

||||||

|

|

||||||

<details>

|

|

||||||

<summary>click to expand/collapse</summary><br/>

|

|

||||||

|

|

||||||

Coming soon

|

|

||||||

|

|

||||||

</details>

|

|

||||||

|

|

||||||

### How to use

|

|

||||||

|

|

||||||

<details>

|

|

||||||

<summary>click to expand/collapse</summary><br/>

|

|

||||||

|

|

||||||

First, this model makes use of up to 9 trained models which are responsible for different operations like size detection, column classification, image enhancement, page extraction, main layout detection, full layout detection and textline detection.That does not mean that all 9 models are always required for every document. Based on the document characteristics and parameters specified, different scenarios can be applied.

|

|

||||||

|

|

||||||

* If none of the parameters is set to `true`, the tool will perform a layout detection of main regions (background, text, images, separators and marginals). An advantage of this tool is that it tries to extract main text regions separately as much as possible.

|

|

||||||

|

|

||||||

* If you set `-ae` (**a**llow image **e**nhancement) parameter to `true`, the tool will first check the ppi (pixel-per-inch) of the image and when it is less than 300, the tool will resize it and only then image enhancement will occur. Image enhancement can also take place without this option, but by setting this option to `true`, the layout xml data (e.g. coordinates) will be based on the resized and enhanced image instead of the original image.

|

|

||||||

|

|

||||||

* For some documents, while the quality is good, their scale is very large, and the performance of tool decreases. In such cases you can set `-as` (**a**llow **s**caling) to `true`. With this option enabled, the tool will try to rescale the image and only then the layout detection process will begin.

|

|

||||||

|

|

||||||

* If you care about drop capitals (initials) and headings, you can set `-fl` (**f**ull **l**ayout) to `true`. With this setting, the tool can currently distinguish 7 document layout classes/elements.

|

|

||||||

|

|

||||||

* In cases where the document includes curved headers or curved lines, rectangular bounding boxes for textlines will not be a great option. In such cases it is strongly recommended setting the flag `-cl` (**c**urved **l**ines) to `true` to find contours of curved lines instead of rectangular bounding boxes. Be advised that enabling this option increases the processing time of the tool.

|

|

||||||

|

|

||||||

* To crop and save image regions inside the document, set the parameter `-si` (**s**ave **i**mages) to true and provide a directory path to store the extracted images.

|

|

||||||

|

|

||||||

* This tool is actively being developed. If problems occur, or the performance does not meet your expectations, we welcome your feedback via [issues](https://github.com/qurator-spk/eynollah/issues).

|

|

||||||

|

|

||||||

#### `--full-layout` vs `--no-full-layout`

|

|

||||||

|

|

||||||

Here are the difference in elements detected depending on the `--full-layout`/`--no-full-layout` command line flags:

|

|

||||||

|

|

||||||

| | `--full-layout` | `--no-full-layout` |

|

|

||||||

| --- | --- | --- |

|

|

||||||

| reading order | x | x |

|

|

||||||

| header regions | x | - |

|

|

||||||

| text regions | x | x |

|

|

||||||

| text regions / text line | x | x |

|

|

||||||

| drop-capitals | x | - |

|

|

||||||

| marginals | x | x |

|

|

||||||

| marginals / text line | x | x |

|

|

||||||

| image region | x | x |

|

|

||||||

|

|

||||||

#### Use as OCR-D processor

|

#### Use as OCR-D processor

|

||||||

|

|

||||||

Eynollah ships with a CLI interface to be used as [OCR-D](https://ocr-d.de) processor. In this case, the source image file group with (preferably) RGB images should be used as input like this:

|

Eynollah ships with a CLI interface to be used as [OCR-D](https://ocr-d.de) processor.

|

||||||

|

|

||||||

`ocrd-eynollah-segment -I OCR-D-IMG -O SEG-LINE -P models`

|

In this case, the source image file group with (preferably) RGB images should be used as input like this:

|

||||||

|

|

||||||

In fact, the image referenced by `@imageFilename` in PAGE-XML is passed on directly to Eynollah as a processor, so that e.g. calling

|

```

|

||||||

|

ocrd-eynollah-segment -I OCR-D-IMG -O SEG-LINE -P models

|

||||||

|

```

|

||||||

|

|

||||||

`ocrd-eynollah-segment -I OCR-D-IMG-BIN -O SEG-LINE -P models`

|

Any image referenced by `@imageFilename` in PAGE-XML is passed on directly to Eynollah as a processor, so that e.g. calling

|

||||||

|

|

||||||

would still use the original (RGB) image despite any binarization that may have occurred in previous OCR-D processing steps

|

```

|

||||||

|

ocrd-eynollah-segment -I OCR-D-IMG-BIN -O SEG-LINE -P models

|

||||||

|

```

|

||||||

|

|

||||||

#### Eynollah "light"

|

still uses the original (RGB) image despite any binarization that may have occurred in previous OCR-D processing steps

|

||||||

|

|

||||||

TODO

|

|

||||||

|

|

||||||

</details>

|

|

||||||

|

|

||||||

</details>

|

|

||||||

|

|

|

||||||

|

|

@ -10,7 +10,6 @@ from qurator.eynollah.eynollah import Eynollah

|

||||||

"-i",

|

"-i",

|

||||||

help="image filename",

|

help="image filename",

|

||||||

type=click.Path(exists=True, dir_okay=False),

|

type=click.Path(exists=True, dir_okay=False),

|

||||||

required=True,

|

|

||||||

)

|

)

|

||||||

@click.option(

|

@click.option(

|

||||||

"--out",

|

"--out",

|

||||||

|

|

@ -19,6 +18,12 @@ from qurator.eynollah.eynollah import Eynollah

|

||||||

type=click.Path(exists=True, file_okay=False),

|

type=click.Path(exists=True, file_okay=False),

|

||||||

required=True,

|

required=True,

|

||||||

)

|

)

|

||||||

|

@click.option(

|

||||||

|

"--dir_in",

|

||||||

|

"-di",

|

||||||

|

help="directory of images",

|

||||||

|

type=click.Path(exists=True, file_okay=False),

|

||||||

|

)

|

||||||

@click.option(

|

@click.option(

|

||||||

"--model",

|

"--model",

|

||||||

"-m",

|

"-m",

|

||||||

|

|

@ -50,6 +55,12 @@ from qurator.eynollah.eynollah import Eynollah

|

||||||

help="if a directory is given, all plots needed for documentation will be saved there",

|

help="if a directory is given, all plots needed for documentation will be saved there",

|

||||||

type=click.Path(exists=True, file_okay=False),

|

type=click.Path(exists=True, file_okay=False),

|

||||||

)

|

)

|

||||||

|

@click.option(

|

||||||

|

"--save_page",

|

||||||

|

"-sp",

|

||||||

|

help="if a directory is given, page crop of image will be saved there",

|

||||||

|

type=click.Path(exists=True, file_okay=False),

|

||||||

|

)

|

||||||

@click.option(

|

@click.option(

|

||||||

"--enable-plotting/--disable-plotting",

|

"--enable-plotting/--disable-plotting",

|

||||||

"-ep/-noep",

|

"-ep/-noep",

|

||||||

|

|

@ -66,7 +77,13 @@ from qurator.eynollah.eynollah import Eynollah

|

||||||

"--curved-line/--no-curvedline",

|

"--curved-line/--no-curvedline",

|

||||||

"-cl/-nocl",

|

"-cl/-nocl",

|

||||||

is_flag=True,

|

is_flag=True,

|

||||||

help="if this parameter set to true, this tool will try to return contoure of textlines instead of rectabgle bounding box of textline. This should be taken into account that with this option the tool need more time to do process.",

|

help="if this parameter set to true, this tool will try to return contoure of textlines instead of rectangle bounding box of textline. This should be taken into account that with this option the tool need more time to do process.",

|

||||||

|

)

|

||||||

|

@click.option(

|

||||||

|

"--textline_light/--no-textline_light",

|

||||||

|

"-tll/-notll",

|

||||||

|

is_flag=True,

|

||||||

|

help="if this parameter set to true, this tool will try to return contoure of textlines instead of rectangle bounding box of textline with a faster method.",

|

||||||

)

|

)

|

||||||

@click.option(

|

@click.option(

|

||||||

"--full-layout/--no-full-layout",

|

"--full-layout/--no-full-layout",

|

||||||

|

|

@ -93,11 +110,23 @@ from qurator.eynollah.eynollah import Eynollah

|

||||||

help="if this parameter set to true, this tool would check the scale and if needed it will scale it to perform better layout detection",

|

help="if this parameter set to true, this tool would check the scale and if needed it will scale it to perform better layout detection",

|

||||||

)

|

)

|

||||||

@click.option(

|

@click.option(

|

||||||

"--headers-off/--headers-on",

|

"--headers_off/--headers-on",

|

||||||

"-ho/-noho",

|

"-ho/-noho",

|

||||||

is_flag=True,

|

is_flag=True,

|

||||||

help="if this parameter set to true, this tool would ignore headers role in reading order",

|

help="if this parameter set to true, this tool would ignore headers role in reading order",

|

||||||

)

|

)

|

||||||

|

@click.option(

|

||||||

|

"--light_version/--original",

|

||||||

|

"-light/-org",

|

||||||

|

is_flag=True,

|

||||||

|

help="if this parameter set to true, this tool would use lighter version",

|

||||||

|

)

|

||||||

|

@click.option(

|

||||||

|

"--ignore_page_extraction/--extract_page_included",

|

||||||

|

"-ipe/-epi",

|

||||||

|

is_flag=True,

|

||||||

|

help="if this parameter set to true, this tool would ignore page extraction",

|

||||||

|

)

|

||||||

@click.option(

|

@click.option(

|

||||||

"--log-level",

|

"--log-level",

|

||||||

"-l",

|

"-l",

|

||||||

|

|

@ -107,49 +136,63 @@ from qurator.eynollah.eynollah import Eynollah

|

||||||

def main(

|

def main(

|

||||||

image,

|

image,

|

||||||

out,

|

out,

|

||||||

|

dir_in,

|

||||||

model,

|

model,

|

||||||

save_images,

|

save_images,

|

||||||

save_layout,

|

save_layout,

|

||||||

save_deskewed,

|

save_deskewed,

|

||||||

save_all,

|

save_all,

|

||||||

|

save_page,

|

||||||

enable_plotting,

|

enable_plotting,

|

||||||

allow_enhancement,

|

allow_enhancement,

|

||||||

curved_line,

|

curved_line,

|

||||||

|

textline_light,

|

||||||

full_layout,

|

full_layout,

|

||||||

tables,

|

tables,

|

||||||

input_binary,

|

input_binary,

|

||||||

allow_scaling,

|

allow_scaling,

|

||||||

headers_off,

|

headers_off,

|

||||||

|

light_version,

|

||||||

|

ignore_page_extraction,

|

||||||

log_level

|

log_level

|

||||||

):

|

):

|

||||||

if log_level:

|

if log_level:

|

||||||

setOverrideLogLevel(log_level)

|

setOverrideLogLevel(log_level)

|

||||||

initLogging()

|

initLogging()

|

||||||

if not enable_plotting and (save_layout or save_deskewed or save_all or save_images or allow_enhancement):

|

if not enable_plotting and (save_layout or save_deskewed or save_all or save_page or save_images or allow_enhancement):

|

||||||

print("Error: You used one of -sl, -sd, -sa, -si or -ae but did not enable plotting with -ep")

|

print("Error: You used one of -sl, -sd, -sa, -sp, -si or -ae but did not enable plotting with -ep")

|

||||||

sys.exit(1)

|

sys.exit(1)

|

||||||

elif enable_plotting and not (save_layout or save_deskewed or save_all or save_images or allow_enhancement):

|

elif enable_plotting and not (save_layout or save_deskewed or save_all or save_page or save_images or allow_enhancement):

|

||||||

print("Error: You used -ep to enable plotting but set none of -sl, -sd, -sa, -si or -ae")

|

print("Error: You used -ep to enable plotting but set none of -sl, -sd, -sa, -sp, -si or -ae")

|

||||||

|

sys.exit(1)

|

||||||

|

if textline_light and not light_version:

|

||||||

|

print('Error: You used -tll to enable light textline detection but -light is not enabled')

|

||||||

sys.exit(1)

|

sys.exit(1)

|

||||||

eynollah = Eynollah(

|

eynollah = Eynollah(

|

||||||

image_filename=image,

|

image_filename=image,

|

||||||

dir_out=out,

|

dir_out=out,

|

||||||

|

dir_in=dir_in,

|

||||||

dir_models=model,

|

dir_models=model,

|

||||||

dir_of_cropped_images=save_images,

|

dir_of_cropped_images=save_images,

|

||||||

dir_of_layout=save_layout,

|

dir_of_layout=save_layout,

|

||||||

dir_of_deskewed=save_deskewed,

|

dir_of_deskewed=save_deskewed,

|

||||||

dir_of_all=save_all,

|

dir_of_all=save_all,

|

||||||

|

dir_save_page=save_page,

|

||||||

enable_plotting=enable_plotting,

|

enable_plotting=enable_plotting,

|

||||||

allow_enhancement=allow_enhancement,

|

allow_enhancement=allow_enhancement,

|

||||||

curved_line=curved_line,

|

curved_line=curved_line,

|

||||||

|

textline_light=textline_light,

|

||||||

full_layout=full_layout,

|

full_layout=full_layout,

|

||||||

tables=tables,

|

tables=tables,

|

||||||

input_binary=input_binary,

|

input_binary=input_binary,

|

||||||

allow_scaling=allow_scaling,

|

allow_scaling=allow_scaling,

|

||||||

headers_off=headers_off,

|

headers_off=headers_off,

|

||||||

|

light_version=light_version,

|

||||||

|

ignore_page_extraction=ignore_page_extraction,

|

||||||

)

|

)

|

||||||

pcgts = eynollah.run()

|

eynollah.run()

|

||||||

eynollah.writer.write_pagexml(pcgts)

|

#pcgts = eynollah.run()

|

||||||

|

##eynollah.writer.write_pagexml(pcgts)

|

||||||

|

|

||||||

if __name__ == "__main__":

|

if __name__ == "__main__":

|

||||||

main()

|

main()
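The option checks on the new side of `main()` can be sketched in isolation as follows. This is a minimal illustration, not code from the commit: `check_options` is a hypothetical helper name, and it returns the error message instead of printing it and calling `sys.exit(1)`.

```python
from typing import Optional


def check_options(
    *,
    enable_plotting=False,
    save_layout=None,
    save_deskewed=None,
    save_all=None,
    save_page=None,
    save_images=None,
    allow_enhancement=False,
    textline_light=False,
    light_version=False,
) -> Optional[str]:
    """Return an error message for an inconsistent option set, else None."""
    # Any of these options asks for extra output that depends on plotting.
    wants_output = bool(
        save_layout or save_deskewed or save_all or save_page
        or save_images or allow_enhancement
    )
    if wants_output and not enable_plotting:
        return "one of -sl, -sd, -sa, -sp, -si or -ae was used without -ep"
    if enable_plotting and not wants_output:
        return "-ep was used but none of -sl, -sd, -sa, -sp, -si or -ae is set"
    # Light textline detection is only accepted together with the light version.
    if textline_light and not light_version:
        return "-tll was used but -light is not enabled"
    return None
```

Returning the message keeps the rules testable without terminating the process; the CLI in the diff prints and exits instead.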

|

||||||

|

|

|

||||||

File diff suppressed because it is too large


|

|

@ -19,6 +19,7 @@ class EynollahPlotter():

|

||||||

*,

|

*,

|

||||||

dir_out,

|

dir_out,

|

||||||

dir_of_all,

|

dir_of_all,

|

||||||

|

dir_save_page,

|

||||||

dir_of_deskewed,

|

dir_of_deskewed,

|

||||||

dir_of_layout,

|

dir_of_layout,

|

||||||

dir_of_cropped_images,

|

dir_of_cropped_images,

|

||||||

|

|

@ -29,6 +30,7 @@ class EynollahPlotter():

|

||||||

):

|

):

|

||||||

self.dir_out = dir_out

|

self.dir_out = dir_out

|

||||||

self.dir_of_all = dir_of_all

|

self.dir_of_all = dir_of_all

|

||||||

|

self.dir_save_page = dir_save_page

|

||||||

self.dir_of_layout = dir_of_layout

|

self.dir_of_layout = dir_of_layout

|

||||||

self.dir_of_cropped_images = dir_of_cropped_images

|

self.dir_of_cropped_images = dir_of_cropped_images

|

||||||

self.dir_of_deskewed = dir_of_deskewed

|

self.dir_of_deskewed = dir_of_deskewed

|

||||||

|

|

@ -74,8 +76,8 @@ class EynollahPlotter():

|

||||||

if self.dir_of_layout is not None:

|

if self.dir_of_layout is not None:

|

||||||

values = np.unique(text_regions_p[:, :])

|

values = np.unique(text_regions_p[:, :])

|

||||||

# pixels=['Background' , 'Main text' , 'Heading' , 'Marginalia' ,'Drop capitals' , 'Images' , 'Seperators' , 'Tables', 'Graphics']

|

# pixels=['Background' , 'Main text' , 'Heading' , 'Marginalia' ,'Drop capitals' , 'Images' , 'Seperators' , 'Tables', 'Graphics']

|

||||||

pixels = ["Background", "Main text", "Header", "Marginalia", "Drop capital", "Image", "Separator"]

|

pixels = ["Background", "Main text", "Header", "Marginalia", "Drop capital", "Image", "Separator", "Tables"]

|

||||||

values_indexes = [0, 1, 2, 8, 4, 5, 6]

|

values_indexes = [0, 1, 2, 8, 4, 5, 6, 10]

|

||||||

plt.figure(figsize=(40, 40))

|

plt.figure(figsize=(40, 40))

|

||||||

plt.rcParams["font.size"] = "40"

|

plt.rcParams["font.size"] = "40"

|

||||||

im = plt.imshow(text_regions_p[:, :])

|

im = plt.imshow(text_regions_p[:, :])

|

||||||

|

|

@ -88,8 +90,8 @@ class EynollahPlotter():

|

||||||

if self.dir_of_all is not None:

|

if self.dir_of_all is not None:

|

||||||

values = np.unique(text_regions_p[:, :])

|

values = np.unique(text_regions_p[:, :])

|

||||||

# pixels=['Background' , 'Main text' , 'Heading' , 'Marginalia' ,'Drop capitals' , 'Images' , 'Seperators' , 'Tables', 'Graphics']

|

# pixels=['Background' , 'Main text' , 'Heading' , 'Marginalia' ,'Drop capitals' , 'Images' , 'Seperators' , 'Tables', 'Graphics']

|

||||||

pixels = ["Background", "Main text", "Header", "Marginalia", "Drop capital", "Image", "Separator"]

|

pixels = ["Background", "Main text", "Header", "Marginalia", "Drop capital", "Image", "Separator", "Tables"]

|

||||||

values_indexes = [0, 1, 2, 8, 4, 5, 6]

|

values_indexes = [0, 1, 2, 8, 4, 5, 6, 10]

|

||||||

plt.figure(figsize=(80, 40))

|

plt.figure(figsize=(80, 40))

|

||||||

plt.rcParams["font.size"] = "40"

|

plt.rcParams["font.size"] = "40"

|

||||||

plt.subplot(1, 2, 1)

|

plt.subplot(1, 2, 1)

|

||||||

|

|

@ -127,6 +129,8 @@ class EynollahPlotter():

|

||||||

def save_page_image(self, image_page):

|

def save_page_image(self, image_page):

|

||||||

if self.dir_of_all is not None:

|

if self.dir_of_all is not None:

|

||||||

cv2.imwrite(os.path.join(self.dir_of_all, self.image_filename_stem + "_page.png"), image_page)

|

cv2.imwrite(os.path.join(self.dir_of_all, self.image_filename_stem + "_page.png"), image_page)

|

||||||

|

if self.dir_save_page is not None:

|

||||||

|

cv2.imwrite(os.path.join(self.dir_save_page, self.image_filename_stem + "_page.png"), image_page)

|

||||||

def save_enhanced_image(self, img_res):

|

def save_enhanced_image(self, img_res):

|

||||||

cv2.imwrite(os.path.join(self.dir_out, self.image_filename_stem + "_enhanced.png"), img_res)

|

cv2.imwrite(os.path.join(self.dir_out, self.image_filename_stem + "_enhanced.png"), img_res)

|

||||||

|

|

||||||

|

|

|

||||||

|

|
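Review note: in the two plotter hunks above, `pixels` and `values_indexes` appear to be parallel lists, so adding the "Tables" label requires adding its pixel value (10) at the same position. The snippet below is a minimal sketch of that pairing, using only the values from the new side of the diff. The helper name `legend_for` is hypothetical and not part of eynollah.

```python
# Labels and pixel values copied from the new side of the hunk.
pixels = ["Background", "Main text", "Header", "Marginalia",
          "Drop capital", "Image", "Separator", "Tables"]
values_indexes = [0, 1, 2, 8, 4, 5, 6, 10]


def legend_for(values):
    """Hypothetical helper: map pixel values found in a layout to legend labels.

    Values without a known label are skipped rather than raising.
    """
    label_by_value = dict(zip(values_indexes, pixels))
    return [label_by_value[v] for v in values if v in label_by_value]
```

If the two lists ever drift out of sync in length, `zip` silently drops the extra entries, so a length check next to the definitions may be worth considering.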

@ -797,6 +797,76 @@ def putt_bb_of_drop_capitals_of_model_in_patches_in_layout(layout_in_patch):

|

||||||

return layout_in_patch

|

return layout_in_patch

|

||||||

|

|

||||||

def check_any_text_region_in_model_one_is_main_or_header(regions_model_1,regions_model_full,contours_only_text_parent,all_box_coord,all_found_texline_polygons,slopes,contours_only_text_parent_d_ordered):

|

def check_any_text_region_in_model_one_is_main_or_header(regions_model_1,regions_model_full,contours_only_text_parent,all_box_coord,all_found_texline_polygons,slopes,contours_only_text_parent_d_ordered):

|

||||||

|

|

||||||

|

cx_main,cy_main ,x_min_main , x_max_main, y_min_main ,y_max_main,y_corr_x_min_from_argmin=find_new_features_of_contours(contours_only_text_parent)

|

||||||

|

|

||||||

|

length_con=x_max_main-x_min_main

|

||||||

|

height_con=y_max_main-y_min_main

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

all_found_texline_polygons_main=[]

|

||||||

|

all_found_texline_polygons_head=[]

|

||||||

|

|

||||||

|

all_box_coord_main=[]

|

||||||

|

all_box_coord_head=[]

|

||||||

|

|

||||||

|

slopes_main=[]

|

||||||

|

slopes_head=[]

|

||||||

|

|

||||||

|

contours_only_text_parent_main=[]

|

||||||

|

contours_only_text_parent_head=[]

|

||||||

|

|

||||||

|

contours_only_text_parent_main_d=[]

|

||||||

|

contours_only_text_parent_head_d=[]

|

||||||

|

|

||||||

|

for ii in range(len(contours_only_text_parent)):

|

||||||

|

con=contours_only_text_parent[ii]

|

||||||

|

img=np.zeros((regions_model_1.shape[0],regions_model_1.shape[1],3))

|

||||||

|

img = cv2.fillPoly(img, pts=[con], color=(255, 255, 255))

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

|

all_pixels=((img[:,:,0]==255)*1).sum()

|

||||||

|

|

||||||

|

pixels_header=( ( (img[:,:,0]==255) & (regions_model_full[:,:,0]==2) )*1 ).sum()

|

||||||

|

pixels_main=all_pixels-pixels_header

|

||||||

|

|

||||||

|

|

||||||

|

if (pixels_header>=pixels_main) and ( (length_con[ii]/float(height_con[ii]) )>=1.3 ):

|

||||||

|

regions_model_1[:,:][(regions_model_1[:,:]==1) & (img[:,:,0]==255) ]=2

|

||||||

|

contours_only_text_parent_head.append(con)

|

||||||

|

if contours_only_text_parent_d_ordered is not None:

|

||||||

|

contours_only_text_parent_head_d.append(contours_only_text_parent_d_ordered[ii])

|

||||||

|

all_box_coord_head.append(all_box_coord[ii])

|

||||||

|

slopes_head.append(slopes[ii])

|

||||||

|

all_found_texline_polygons_head.append(all_found_texline_polygons[ii])

|

||||||

|

else:

|

||||||

|

regions_model_1[:,:][(regions_model_1[:,:]==1) & (img[:,:,0]==255) ]=1

|

||||||

|

contours_only_text_parent_main.append(con)

|

||||||

|

if contours_only_text_parent_d_ordered is not None:

|

||||||

|

contours_only_text_parent_main_d.append(contours_only_text_parent_d_ordered[ii])

|

||||||

|

all_box_coord_main.append(all_box_coord[ii])

|

||||||

|

slopes_main.append(slopes[ii])

|

||||||

|

all_found_texline_polygons_main.append(all_found_texline_polygons[ii])

|

||||||

|

|

||||||

|

#print(all_pixels,pixels_main,pixels_header)

|

||||||

|

|

||||||

|

return regions_model_1,contours_only_text_parent_main,contours_only_text_parent_head,all_box_coord_main,all_box_coord_head,all_found_texline_polygons_main,all_found_texline_polygons_head,slopes_main,slopes_head,contours_only_text_parent_main_d,contours_only_text_parent_head_d

|

||||||

|

|

||||||

|

|
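Review note: the decision rule in `check_any_text_region_in_model_one_is_main_or_header` relabels a text region as a header when at least half of its pixels are header pixels in the full-layout model and its bounding box is at least 1.3 times wider than tall. The snippet below is a minimal sketch of that rule in isolation. The function name `is_header` and its parameters are hypothetical; only the threshold and the comparison come from the diff.

```python
def is_header(pixels_header, all_pixels, width, height, min_aspect=1.3):
    """Hypothetical stand-alone version of the header test shown in the hunk.

    pixels_header: region pixels labelled 2 (header) by the full-layout model
    all_pixels:    total pixels covered by the region contour
    width, height: extent of the contour bounding box (height must be non-zero)
    """
    pixels_main = all_pixels - pixels_header
    return pixels_header >= pixels_main and (width / float(height)) >= min_aspect
```

For example, `is_header(60, 100, 200, 100)` is true, while a square region with the same pixel counts is not treated as a header.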

||||||

|

def check_any_text_region_in_model_one_is_main_or_header_light(regions_model_1,regions_model_full,contours_only_text_parent,all_box_coord,all_found_texline_polygons,slopes,contours_only_text_parent_d_ordered):

|

||||||

|

|

||||||

|

### to make it faster

|

||||||

|

h_o = regions_model_1.shape[0]

|

||||||

|

w_o = regions_model_1.shape[1]

|

||||||

|

|

||||||

|

regions_model_1 = cv2.resize(regions_model_1, (int(regions_model_1.shape[1]/3.), int(regions_model_1.shape[0]/3.)), interpolation=cv2.INTER_NEAREST)

|

||||||

|

regions_model_full = cv2.resize(regions_model_full, (int(regions_model_full.shape[1]/3.), int(regions_model_full.shape[0]/3.)), interpolation=cv2.INTER_NEAREST)

|

||||||

|

contours_only_text_parent = [ (i/3.).astype(np.int32) for i in contours_only_text_parent]

|

||||||

|

|

||||||

|

###

|

||||||

|

|

||||||

cx_main,cy_main ,x_min_main , x_max_main, y_min_main ,y_max_main,y_corr_x_min_from_argmin=find_new_features_of_contours(contours_only_text_parent)

|

cx_main,cy_main ,x_min_main , x_max_main, y_min_main ,y_max_main,y_corr_x_min_from_argmin=find_new_features_of_contours(contours_only_text_parent)

|

||||||

|

|

||||||

length_con=x_max_main-x_min_main

|

length_con=x_max_main-x_min_main

|

||||||

|

|

@ -853,8 +923,14 @@ def check_any_text_region_in_model_one_is_main_or_header(regions_model_1,regions

|

||||||

|

|

||||||

|

|

||||||

|

|

||||||

#plt.imshow(img[:,:,0])

|

### to make it faster

|

||||||

#plt.show()

|

|

||||||

|

regions_model_1 = cv2.resize(regions_model_1, (w_o, h_o), interpolation=cv2.INTER_NEAREST)

|

||||||

|

#regions_model_full = cv2.resize(img, (int(regions_model_full.shape[1]/3.), int(regions_model_full.shape[0]/3.)), interpolation=cv2.INTER_NEAREST)

|

||||||

|

contours_only_text_parent_head = [ (i*3.).astype(np.int32) for i in contours_only_text_parent_head]

|

||||||

|

contours_only_text_parent_main = [ (i*3.).astype(np.int32) for i in contours_only_text_parent_main]

|

||||||

|

###

|

||||||

|

|

||||||

return regions_model_1,contours_only_text_parent_main,contours_only_text_parent_head,all_box_coord_main,all_box_coord_head,all_found_texline_polygons_main,all_found_texline_polygons_head,slopes_main,slopes_head,contours_only_text_parent_main_d,contours_only_text_parent_head_d

|

return regions_model_1,contours_only_text_parent_main,contours_only_text_parent_head,all_box_coord_main,all_box_coord_head,all_found_texline_polygons_main,all_found_texline_polygons_head,slopes_main,slopes_head,contours_only_text_parent_main_d,contours_only_text_parent_head_d

|

||||||

|

|

||||||

def small_textlines_to_parent_adherence2(textlines_con, textline_iamge, num_col):

|

def small_textlines_to_parent_adherence2(textlines_con, textline_iamge, num_col):

|

||||||

|

|

|

||||||

|

|
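Review note: the `_light` variant above speeds things up by resizing the label maps to one third of their size and dividing the contour coordinates by 3 (truncated to integers), then multiplying the resulting contours by 3 again. The snippet below is a minimal pure-Python sketch of what that round trip does to a coordinate. The helpers `downscale` and `upscale` are hypothetical, and plain tuples stand in for the NumPy contour arrays used in the diff.

```python
def downscale(contour, factor=3):
    """Divide each coordinate by `factor` and truncate toward zero."""
    return [(int(x / factor), int(y / factor)) for x, y in contour]


def upscale(contour, factor=3):
    """Multiply each coordinate by `factor` to return to the original scale."""
    return [(x * factor, y * factor) for x, y in contour]


# Coordinates that are not multiples of 3 do not survive the round trip exactly.
original = [(10, 20), (9, 30)]
restored = upscale(downscale(original))
```

With a factor of 3, each restored coordinate can be up to 2 pixels smaller than the original, which seems to be the accuracy traded for speed here.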

@ -3,7 +3,8 @@ import numpy as np

|

||||||

from shapely import geometry

|

from shapely import geometry

|

||||||

|

|

||||||

from .rotate import rotate_image, rotation_image_new

|

from .rotate import rotate_image, rotation_image_new

|

||||||

|

from multiprocessing import Process, Queue, cpu_count

|

||||||

|

from multiprocessing import Pool

|

||||||

def contours_in_same_horizon(cy_main_hor):

|

def contours_in_same_horizon(cy_main_hor):

|

||||||

X1 = np.zeros((len(cy_main_hor), len(cy_main_hor)))

|

X1 = np.zeros((len(cy_main_hor), len(cy_main_hor)))

|

||||||

X2 = np.zeros((len(cy_main_hor), len(cy_main_hor)))

|

X2 = np.zeros((len(cy_main_hor), len(cy_main_hor)))

|

||||||

|

|

@ -147,6 +148,96 @@ def return_contours_of_interested_region(region_pre_p, pixel, min_area=0.0002):

|

||||||

|

|

||||||

return contours_imgs

|

return contours_imgs

|

||||||

|

|

||||||

|

def do_work_of_contours_in_image(queue_of_all_params, contours_per_process, indexes_r_con_per_pro, img, slope_first):

|

||||||

|

cnts_org_per_each_subprocess = []

|

||||||

|

index_by_text_region_contours = []

|

||||||

|

for mv in range(len(contours_per_process)):

|

||||||

|

index_by_text_region_contours.append(indexes_r_con_per_pro[mv])

|

||||||

|

|

||||||

|

img_copy = np.zeros(img.shape)

|

||||||

|

img_copy = cv2.fillPoly(img_copy, pts=[contours_per_process[mv]], color=(1, 1, 1))

|

||||||

|

|

||||||

|

img_copy = rotation_image_new(img_copy, -slope_first)

|

||||||

|

|

||||||

|

img_copy = img_copy.astype(np.uint8)

|

||||||

|

imgray = cv2.cvtColor(img_copy, cv2.COLOR_BGR2GRAY)

|

||||||

|

ret, thresh = cv2.threshold(imgray, 0, 255, 0)

|

||||||

|

|

||||||

|

cont_int, _ = cv2.findContours(thresh, cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)

|

||||||

|

|

||||||

|

cont_int[0][:, 0, 0] = cont_int[0][:, 0, 0] + np.abs(img_copy.shape[1] - img.shape[1])

|

||||||

|

cont_int[0][:, 0, 1] = cont_int[0][:, 0, 1] + np.abs(img_copy.shape[0] - img.shape[0])

|

||||||

|

|

||||||

|

|

||||||

|

cnts_org_per_each_subprocess.append(cont_int[0])

|

||||||

|

|

||||||

|

queue_of_all_params.put([ cnts_org_per_each_subprocess, index_by_text_region_contours])

|

||||||

|

|

||||||

|

|

||||||

|

def get_textregion_contours_in_org_image_multi(cnts, img, slope_first):

|

||||||

|

|

||||||

|

num_cores = cpu_count()

|

||||||

|

queue_of_all_params = Queue()

|

||||||

|

|

||||||

|

processes = []

|

||||||

|

nh = np.linspace(0, len(cnts), num_cores + 1)

|

||||||

|

indexes_by_text_con = np.array(range(len(cnts)))

|

||||||

|

for i in range(num_cores):

|

||||||

|

contours_per_process = cnts[int(nh[i]) : int(nh[i + 1])]

|

||||||

|

indexes_text_con_per_process = indexes_by_text_con[int(nh[i]) : int(nh[i + 1])]

|

||||||

|

|

||||||

|

processes.append(Process(target=do_work_of_contours_in_image, args=(queue_of_all_params, contours_per_process, indexes_text_con_per_process, img,slope_first )))

|

||||||

|

for i in range(num_cores):

|

||||||

|

processes[i].start()

|

||||||

|

cnts_org = []

|

||||||

|

all_index_text_con = []

|

||||||

|

for i in range(num_cores):

|

||||||

|

list_all_par = queue_of_all_params.get(True)

|

||||||

|

contours_for_sub_process = list_all_par[0]

|

||||||

|

indexes_for_sub_process = list_all_par[1]

|

||||||

|

for j in range(len(contours_for_sub_process)):

|

||||||

|

cnts_org.append(contours_for_sub_process[j])

|

||||||

|

all_index_text_con.append(indexes_for_sub_process[j])

|

||||||

|

for i in range(num_cores):

|

||||||

|

processes[i].join()

|

||||||

|

|

||||||

|

print(all_index_text_con)

|

||||||

|

return cnts_org

|

||||||

|

def loop_contour_image(index_l, cnts,img, slope_first):

|

||||||

|

img_copy = np.zeros(img.shape)

|

||||||

|

img_copy = cv2.fillPoly(img_copy, pts=[cnts[index_l]], color=(1, 1, 1))

|

||||||

|

|

||||||

|

# plt.imshow(img_copy)

|

||||||

|

# plt.show()

|

||||||

|

|

||||||

|

# print(img.shape,'img')

|

||||||

|

img_copy = rotation_image_new(img_copy, -slope_first)

|

||||||

|

##print(img_copy.shape,'img_copy')

|

||||||

|

# plt.imshow(img_copy)

|

||||||

|

# plt.show()

|

||||||

|

|

||||||

|

img_copy = img_copy.astype(np.uint8)

|

||||||

|

imgray = cv2.cvtColor(img_copy, cv2.COLOR_BGR2GRAY)

|

||||||

|

ret, thresh = cv2.threshold(imgray, 0, 255, 0)

|

||||||

|

|

||||||

|

cont_int, _ = cv2.findContours(thresh, cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)

|

||||||

|

|

||||||

|

cont_int[0][:, 0, 0] = cont_int[0][:, 0, 0] + np.abs(img_copy.shape[1] - img.shape[1])

|

||||||

|

cont_int[0][:, 0, 1] = cont_int[0][:, 0, 1] + np.abs(img_copy.shape[0] - img.shape[0])

|

||||||

|

# print(np.shape(cont_int[0]))

|

||||||

|

return cont_int[0]

|

||||||

|

|

||||||

|

def get_textregion_contours_in_org_image_multi2(cnts, img, slope_first):

|

||||||

|

|

||||||

|

cnts_org = []

|

||||||

|

# print(cnts,'cnts')

|

||||||

|

with Pool(cpu_count()) as p:

|

||||||

|

cnts_org = p.starmap(loop_contour_image, [(index_l,cnts, img,slope_first) for index_l in range(len(cnts))])

|

||||||

|

|

||||||

|

print(len(cnts_org),'lendiha')

|

||||||

|

|

||||||

|

return cnts_org

|

||||||

|

|

||||||

def get_textregion_contours_in_org_image(cnts, img, slope_first):

|

def get_textregion_contours_in_org_image(cnts, img, slope_first):

|

||||||

|

|

||||||

cnts_org = []

|

cnts_org = []

|

||||||

|

|

@ -175,11 +266,43 @@ def get_textregion_contours_in_org_image(cnts, img, slope_first):

|

||||||

# print(np.shape(cont_int[0]))

|

# print(np.shape(cont_int[0]))

|

||||||

cnts_org.append(cont_int[0])

|

cnts_org.append(cont_int[0])

|

||||||

|

|

||||||

# print(cnts_org,'cnts_org')

|

return cnts_org

|

||||||

|

|

||||||

|

def get_textregion_contours_in_org_image_light(cnts, img, slope_first):

|

||||||

|

|

||||||

|

h_o = img.shape[0]

|

||||||

|

w_o = img.shape[1]

|

||||||

|

|

||||||

|

img = cv2.resize(img, (int(img.shape[1]/3.), int(img.shape[0]/3.)), interpolation=cv2.INTER_NEAREST)

|

||||||

|

##cnts = list( (np.array(cnts)/2).astype(np.int16) )

|

||||||

|

#cnts = cnts/2

|

||||||

|

cnts = [(i/ 3).astype(np.int32) for i in cnts]

|

||||||

|

cnts_org = []

|

||||||

|

#print(cnts,'cnts')

|

||||||

|

for i in range(len(cnts)):

|

||||||

|

img_copy = np.zeros(img.shape)

|

||||||

|

img_copy = cv2.fillPoly(img_copy, pts=[cnts[i]], color=(1, 1, 1))

|

||||||

|

|

||||||

|

# plt.imshow(img_copy)

|

||||||

|

# plt.show()

|

||||||

|

|

||||||

|

# print(img.shape,'img')

|

||||||

|

img_copy = rotation_image_new(img_copy, -slope_first)

|

||||||

|

##print(img_copy.shape,'img_copy')

|

||||||

|

# plt.imshow(img_copy)

|

||||||

|

# plt.show()

|

||||||

|

|

||||||

|

img_copy = img_copy.astype(np.uint8)

|

||||||

|

imgray = cv2.cvtColor(img_copy, cv2.COLOR_BGR2GRAY)

|

||||||

|

ret, thresh = cv2.threshold(imgray, 0, 255, 0)

|

||||||

|

|

||||||

|

cont_int, _ = cv2.findContours(thresh, cv2.RETR_TREE, cv2.CHAIN_APPROX_SIMPLE)

|

||||||

|

|

||||||

|

cont_int[0][:, 0, 0] = cont_int[0][:, 0, 0] + np.abs(img_copy.shape[1] - img.shape[1])

|

||||||

|

cont_int[0][:, 0, 1] = cont_int[0][:, 0, 1] + np.abs(img_copy.shape[0] - img.shape[0])

|

||||||

|

# print(np.shape(cont_int[0]))

|

||||||

|

cnts_org.append(cont_int[0]*3)

|

||||||

|

|

||||||

# sys.exit()

|

|

||||||

# self.y_shift = np.abs(img_copy.shape[0] - img.shape[0])

|

|

||||||

# self.x_shift = np.abs(img_copy.shape[1] - img.shape[1])

|

|

||||||

return cnts_org

|

return cnts_org

|

||||||

|

|

||||||

def return_contours_of_interested_textline(region_pre_p, pixel):

|

def return_contours_of_interested_textline(region_pre_p, pixel):

|

||||||

|

|

|

||||||

|

|
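Review note: in `get_textregion_contours_in_org_image_multi`, worker results are read from the queue in whatever order the processes finish. As far as this hunk shows, `all_index_text_con` is collected and printed but not used to reorder `cnts_org`. If callers rely on the output matching the order of `cnts`, something like the following sketch could restore it. The function `merge_in_input_order` is hypothetical and not part of eynollah.

```python
def merge_in_input_order(chunks):
    """Hypothetical helper: flatten per-process results back into input order.

    chunks: iterable of (results, indexes) pairs, one per worker, in arbitrary
            arrival order; indexes[i] is the input position of results[i].
    """
    indexed = []
    for results, indexes in chunks:
        indexed.extend(zip(indexes, results))
    # Sort on the original position only, so results never need to be comparable.
    indexed.sort(key=lambda pair: pair[0])
    return [result for _, result in indexed]
```

The `Pool.starmap` variant (`get_textregion_contours_in_org_image_multi2`) should not need this, since `starmap` returns results in the order of its input iterable.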

@ -22,12 +22,13 @@ import numpy as np

|

||||||

|

|

||||||

class EynollahXmlWriter():

|

class EynollahXmlWriter():

|

||||||

|

|

||||||

def __init__(self, *, dir_out, image_filename, curved_line, pcgts=None):

|

def __init__(self, *, dir_out, image_filename, curved_line,textline_light, pcgts=None):

|

||||||

self.logger = getLogger('eynollah.writer')

|

self.logger = getLogger('eynollah.writer')

|

||||||

self.counter = EynollahIdCounter()

|

self.counter = EynollahIdCounter()

|

||||||

self.dir_out = dir_out

|

self.dir_out = dir_out

|

||||||

self.image_filename = image_filename

|

self.image_filename = image_filename

|

||||||

self.curved_line = curved_line

|

self.curved_line = curved_line

|

||||||

|

self.textline_light = textline_light

|

||||||

self.pcgts = pcgts

|

self.pcgts = pcgts

|

||||||

self.scale_x = None # XXX set outside __init__

|

self.scale_x = None # XXX set outside __init__

|

||||||

self.scale_y = None # XXX set outside __init__

|

self.scale_y = None # XXX set outside __init__

|

||||||

|

|

@ -60,7 +61,7 @@ class EynollahXmlWriter():

|

||||||

marginal_region.add_TextLine(textline)

|

marginal_region.add_TextLine(textline)

|

||||||

points_co = ''

|

points_co = ''

|

||||||

for l in range(len(all_found_texline_polygons_marginals[marginal_idx][j])):

|

for l in range(len(all_found_texline_polygons_marginals[marginal_idx][j])):

|

||||||

if not self.curved_line:

|

if not (self.curved_line or self.textline_light):

|

||||||

if len(all_found_texline_polygons_marginals[marginal_idx][j][l]) == 2:

|

if len(all_found_texline_polygons_marginals[marginal_idx][j][l]) == 2:

|

||||||

textline_x_coord = max(0, int((all_found_texline_polygons_marginals[marginal_idx][j][l][0] + all_box_coord_marginals[marginal_idx][2] + page_coord[2]) / self.scale_x) )

|

textline_x_coord = max(0, int((all_found_texline_polygons_marginals[marginal_idx][j][l][0] + all_box_coord_marginals[marginal_idx][2] + page_coord[2]) / self.scale_x) )

|

||||||

textline_y_coord = max(0, int((all_found_texline_polygons_marginals[marginal_idx][j][l][1] + all_box_coord_marginals[marginal_idx][0] + page_coord[0]) / self.scale_y) )

|

textline_y_coord = max(0, int((all_found_texline_polygons_marginals[marginal_idx][j][l][1] + all_box_coord_marginals[marginal_idx][0] + page_coord[0]) / self.scale_y) )

|

||||||

|

|

@ -70,7 +71,7 @@ class EynollahXmlWriter():

|

||||||

points_co += str(textline_x_coord)

|

points_co += str(textline_x_coord)

|

||||||

points_co += ','

|

points_co += ','

|

||||||

points_co += str(textline_y_coord)

|

points_co += str(textline_y_coord)

|

||||||

if self.curved_line and np.abs(slopes_marginals[marginal_idx]) <= 45:

|

if (self.curved_line or self.textline_light) and np.abs(slopes_marginals[marginal_idx]) <= 45:

|

||||||

if len(all_found_texline_polygons_marginals[marginal_idx][j][l]) == 2:

|

if len(all_found_texline_polygons_marginals[marginal_idx][j][l]) == 2:

|

||||||

points_co += str(int((all_found_texline_polygons_marginals[marginal_idx][j][l][0] + page_coord[2]) / self.scale_x))

|

points_co += str(int((all_found_texline_polygons_marginals[marginal_idx][j][l][0] + page_coord[2]) / self.scale_x))

|

||||||

points_co += ','

|

points_co += ','

|

||||||

|

|

@ -80,7 +81,7 @@ class EynollahXmlWriter():

|

||||||

points_co += ','

|

points_co += ','

|

||||||

points_co += str(int((all_found_texline_polygons_marginals[marginal_idx][j][l][0][1] + page_coord[0]) / self.scale_y))

|

points_co += str(int((all_found_texline_polygons_marginals[marginal_idx][j][l][0][1] + page_coord[0]) / self.scale_y))

|

||||||

|

|

||||||

elif self.curved_line and np.abs(slopes_marginals[marginal_idx]) > 45:

|

elif (self.curved_line or self.textline_light) and np.abs(slopes_marginals[marginal_idx]) > 45:

|

||||||

if len(all_found_texline_polygons_marginals[marginal_idx][j][l]) == 2:

|

if len(all_found_texline_polygons_marginals[marginal_idx][j][l]) == 2:

|

||||||

points_co += str(int((all_found_texline_polygons_marginals[marginal_idx][j][l][0] + all_box_coord_marginals[marginal_idx][2] + page_coord[2]) / self.scale_x))

|

points_co += str(int((all_found_texline_polygons_marginals[marginal_idx][j][l][0] + all_box_coord_marginals[marginal_idx][2] + page_coord[2]) / self.scale_x))

|

||||||

points_co += ','

|

points_co += ','

|

||||||

|

|

@ -101,7 +102,7 @@ class EynollahXmlWriter():

|

||||||

region_bboxes = all_box_coord[region_idx]

|

region_bboxes = all_box_coord[region_idx]

|

||||||

points_co = ''

|

points_co = ''

|

||||||

for idx_contour_textline, contour_textline in enumerate(all_found_texline_polygons[region_idx][j]):

|

for idx_contour_textline, contour_textline in enumerate(all_found_texline_polygons[region_idx][j]):

|

||||||

if not self.curved_line:

|

if not (self.curved_line or self.textline_light):

|

||||||

if len(contour_textline) == 2:

|

if len(contour_textline) == 2:

|

||||||

textline_x_coord = max(0, int((contour_textline[0] + region_bboxes[2] + page_coord[2]) / self.scale_x))

|

textline_x_coord = max(0, int((contour_textline[0] + region_bboxes[2] + page_coord[2]) / self.scale_x))

|

||||||

textline_y_coord = max(0, int((contour_textline[1] + region_bboxes[0] + page_coord[0]) / self.scale_y))

|

textline_y_coord = max(0, int((contour_textline[1] + region_bboxes[0] + page_coord[0]) / self.scale_y))

|

||||||

|

|

@ -112,7 +113,7 @@ class EynollahXmlWriter():

|

||||||

points_co += ','

|

points_co += ','

|

||||||

points_co += str(textline_y_coord)

|

points_co += str(textline_y_coord)

|

||||||

|

|

||||||

if self.curved_line and np.abs(slopes[region_idx]) <= 45:

|

if (self.curved_line or self.textline_light) and np.abs(slopes[region_idx]) <= 45:

|

||||||

if len(contour_textline) == 2:

|

if len(contour_textline) == 2:

|

||||||

points_co += str(int((contour_textline[0] + page_coord[2]) / self.scale_x))

|

points_co += str(int((contour_textline[0] + page_coord[2]) / self.scale_x))

|

||||||

points_co += ','

|

points_co += ','

|

||||||

|

|

@ -121,7 +122,7 @@ class EynollahXmlWriter():

|

||||||

points_co += str(int((contour_textline[0][0] + page_coord[2]) / self.scale_x))

|

points_co += str(int((contour_textline[0][0] + page_coord[2]) / self.scale_x))

|

||||||

points_co += ','

|

points_co += ','

|

||||||

points_co += str(int((contour_textline[0][1] + page_coord[0])/self.scale_y))

|

points_co += str(int((contour_textline[0][1] + page_coord[0])/self.scale_y))

|

||||||

elif self.curved_line and np.abs(slopes[region_idx]) > 45:

|

elif (self.curved_line or self.textline_light) and np.abs(slopes[region_idx]) > 45:

|

||||||

if len(contour_textline)==2:

|

if len(contour_textline)==2:

|

||||||

points_co += str(int((contour_textline[0] + region_bboxes[2] + page_coord[2])/self.scale_x))

|

points_co += str(int((contour_textline[0] + region_bboxes[2] + page_coord[2])/self.scale_x))

|

||||||

points_co += ','

|

points_co += ','

|

||||||

|

|

|

||||||
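Review note: after this change the writer takes the "straight line" branch only when neither `curved_line` nor `textline_light` is set. In that branch each text line point is shifted by the region box offset and the page offset, divided by the scale, and clamped at zero. The snippet below is a minimal sketch of that arithmetic. The function name `to_page_coords` is hypothetical, and the meaning of the indices (`[2]` for the x offset, `[0]` for the y offset) is inferred from how the diff uses them.

```python
def to_page_coords(point, region_bbox, page_coord, scale_x, scale_y):
    """Hypothetical helper mirroring the non-curved, non-light branch.

    point:       (x, y) of a text line polygon point, relative to its region box
    region_bbox: region box; index 2 is used as x offset, index 0 as y offset
    page_coord:  page crop box; index 2 is used as x offset, index 0 as y offset
    """
    x = max(0, int((point[0] + region_bbox[2] + page_coord[2]) / scale_x))
    y = max(0, int((point[1] + region_bbox[0] + page_coord[0]) / scale_y))
    return "{},{}".format(x, y)
```

In the curved or light branches with a slope of at most 45 degrees, the diff adds only the page offset, which suggests those polygons are already expressed in page-crop coordinates.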
