mirror of

https://github.com/qurator-spk/eynollah.git

synced 2026-03-20 14:12:08 +01:00

Compare commits

No commits in common. "main" and "v0.0.8" have entirely different histories.

115 changed files with 5053 additions and 24796 deletions

28

.circleci/config.yml

Normal file


@ -0,0 +1,28 @
version: 2

jobs:

  build-python36:
    docker:
      - image: python:3.6
    steps:
      - checkout
      - restore_cache:
          keys:
            - model-cache
      - run: make models
      - save_cache:
          key: model-cache
          paths:
            models_eynollah.tar.gz
            models_eynollah
      - run: make install
      - run: make smoke-test

workflows:
  version: 2
  build:
    jobs:
      - build-python36
      #- build-python37
      #- build-python38 # no tensorflow for python 3.8

@ -1,6 +0,0 @
tests
dist
build
env*
*.egg-info
models_eynollah*

44

.github/workflows/build-docker.yml

vendored


@ -1,44 +0,0 @
name: CD

on:
  push:
    branches: [ "main" ]
  workflow_dispatch: # run manually

jobs:

  build:
    runs-on: ubuntu-latest
    permissions:
      packages: write
      contents: read
    steps:
      - name: Checkout
        uses: actions/checkout@v4
        with:
          # we need tags for docker version tagging
          fetch-tags: true
          fetch-depth: 0
      - # Activate cache export feature to reduce build time of images
        name: Set up Docker Buildx
        uses: docker/setup-buildx-action@v3
      - name: Login to GitHub Container Registry
        uses: docker/login-action@v3
        with:
          registry: ghcr.io
          username: ${{ github.actor }}
          password: ${{ secrets.GITHUB_TOKEN }}
      - name: Log in to Docker Hub
        uses: docker/login-action@v3
        with:
          username: ${{ secrets.DOCKERIO_USERNAME }}
          password: ${{ secrets.DOCKERIO_PASSWORD }}
      - name: Build the Docker image
        # build both tags at the same time
        run: make docker DOCKER_TAG="docker.io/ocrd/eynollah -t ghcr.io/qurator-spk/eynollah"
      - name: Test the Docker image
        run: docker run --rm ocrd/eynollah ocrd-eynollah-segment -h
      - name: Push to Dockerhub
        run: docker push docker.io/ocrd/eynollah
      - name: Push to Github Container Registry
        run: docker push ghcr.io/qurator-spk/eynollah

24

.github/workflows/pypi.yml

vendored


@ -1,24 +0,0 @
name: PyPI CD

on:
  release:
    types: [published]
  workflow_dispatch:

jobs:
  pypi-publish:
    name: upload release to PyPI
    runs-on: ubuntu-latest
    permissions:
      # IMPORTANT: this permission is mandatory for Trusted Publishing
      id-token: write
    steps:
      - uses: actions/checkout@v4
      - name: Set up Python
        uses: actions/setup-python@v5
      - name: Build package
        run: make build
      - name: Publish package distributions to PyPI
        uses: pypa/gh-action-pypi-publish@release/v1
        with:
          verbose: true

90

.github/workflows/test-eynollah.yml

vendored


|

|

@ -1,9 +1,9 @@

|

|||

# This workflow will install Python dependencies, run tests and lint with a variety of Python versions

|

||||

# For more information see: https://help.github.com/actions/language-and-framework-guides/using-python-with-github-actions

|

||||

|

||||

name: Test

|

||||

name: Python package

|

||||

|

||||

on: [push]

|

||||

on: [push, pull_request]

|

||||

|

||||

jobs:

|

||||

build:

|

||||

|

|

@ -11,90 +11,26 @@ jobs:

|

|||

runs-on: ubuntu-latest

|

||||

strategy:

|

||||

matrix:

|

||||

python-version: ['3.8', '3.9', '3.10', '3.11']

|

||||

python-version: ['3.6'] # '3.7'

|

||||

|

||||

steps:

|

||||

- name: clean up

|

||||

run: |

|

||||

df -h

|

||||

sudo rm -rf /usr/share/dotnet

|

||||

sudo rm -rf /usr/local/lib/android

|

||||

sudo rm -rf /opt/ghc

|

||||

sudo rm -rf "/usr/local/share/boost"

|

||||

sudo rm -rf "$AGENT_TOOLSDIRECTORY"

|

||||

df -h

|

||||

- uses: actions/checkout@v4

|

||||

|

||||

# - name: Lint with ruff

|

||||

# uses: astral-sh/ruff-action@v3

|

||||

# with:

|

||||

# src: "./src"

|

||||

|

||||

- name: Try to restore models_eynollah

|

||||

uses: actions/cache/restore@v4

|

||||

id: all_model_cache

|

||||

- uses: actions/checkout@v2

|

||||

- uses: actions/cache@v2

|

||||

id: model_cache

|

||||

with:

|

||||

path: models_eynollah

|

||||

key: models_eynollah-${{ hashFiles('src/eynollah/model_zoo/default_specs.py') }}

|

||||

|

||||

key: ${{ runner.os }}-models

|

||||

- name: Download models

|

||||

if: steps.all_model_cache.outputs.cache-hit != 'true'

|

||||

run: |

|

||||

make models

|

||||

ls -la models_eynollah

|

||||

|

||||

- uses: actions/cache/save@v4

|

||||

if: steps.all_model_cache.outputs.cache-hit != 'true'

|

||||

with:

|

||||

path: models_eynollah

|

||||

key: models_eynollah-${{ hashFiles('src/eynollah/model_zoo/default_specs.py') }}

|

||||

|

||||

if: steps.model_cache.outputs.cache-hit != 'true'

|

||||

run: make models

|

||||

- name: Set up Python ${{ matrix.python-version }}

|

||||

uses: actions/setup-python@v5

|

||||

uses: actions/setup-python@v2

|

||||

with:

|

||||

python-version: ${{ matrix.python-version }}

|

||||

|

||||

# - uses: actions/cache@v4

|

||||

# with:

|

||||

# path: |

|

||||

# path/to/dependencies

|

||||

# some/other/dependencies

|

||||

# key: ${{ runner.os }}-${{ hashFiles('**/lockfiles') }}

|

||||

|

||||

- name: Install dependencies

|

||||

run: |

|

||||

python -m pip install --upgrade pip

|

||||

make install-dev EXTRAS=OCR,plotting

|

||||

make deps-test EXTRAS=OCR,plotting

|

||||

|

||||

pip install .

|

||||

pip install -r requirements-test.txt

|

||||

- name: Test with pytest

|

||||

run: make coverage PYTEST_ARGS="-vv --junitxml=pytest.xml"

|

||||

|

||||

- name: Get coverage results

|

||||

run: |

|

||||

coverage report --format=markdown >> $GITHUB_STEP_SUMMARY

|

||||

coverage html

|

||||

coverage json

|

||||

coverage xml

|

||||

|

||||

- name: Store coverage results

|

||||

uses: actions/upload-artifact@v4

|

||||

with:

|

||||

name: coverage-report_${{ matrix.python-version }}

|

||||

path: |

|

||||

htmlcov

|

||||

pytest.xml

|

||||

coverage.xml

|

||||

coverage.json

|

||||

|

||||

- name: Upload coverage results

|

||||

uses: codecov/codecov-action@v4

|

||||

with:

|

||||

files: coverage.xml

|

||||

fail_ci_if_error: false

|

||||

|

||||

- name: Test standalone CLI

|

||||

run: make smoke-test

|

||||

|

||||

- name: Test OCR-D CLI

|

||||

run: make ocrd-test

|

||||

run: make test

|

||||

|

|

|

|||

7

.gitignore

vendored


@ -2,13 +2,6 @
__pycache__
sbb_newspapers_org_image/pylint.log
models_eynollah*
models_ocr*
models_layout*
default-2021-03-09
output.html
/build
/dist
*.tif
*.sw?
TAGS
uv.lock

283

CHANGELOG.md


|

|

@ -5,275 +5,7 @@ Versioned according to [Semantic Versioning](http://semver.org/).

|

|||

|

||||

## Unreleased

|

||||

|

||||

Added:

|

||||

|

||||

* "Model zoo", central place to describe and load models, #207

|

||||

* Training code for the CNN/RNN OCR model

|

||||

|

||||

Changed:

|

||||

|

||||

* Lint training code, #204

|

||||

* Update documentation: README, pyproject.toml metadata, guides in `docs/`, #209

|

||||

|

||||

|

||||

## [0.6.0] - 2025-10-17

|

||||

|

||||

Added:

|

||||

|

||||

* `eynollah-training` CLI and docs for training the models, #187, #193, https://github.com/qurator-spk/sbb_pixelwise_segmentation/tree/unifying-training-models

|

||||

|

||||

Fixed:

|

||||

|

||||

* `join_polygons` always returning Polygon, not MultiPolygon, #203

|

||||

|

||||

## [0.6.0rc2] - 2025-10-14

|

||||

|

||||

Fixed:

|

||||

|

||||

* Prevent OOM GPU error by avoiding loading the `region_fl` model, #199

|

||||

* XML output: encoding should be `utf-8`, not `utf8`, #196, #197
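
The `utf-8` versus `utf8` distinction in the entry above matters because the label is copied verbatim into the XML declaration. A minimal sketch using Python's standard `xml.etree.ElementTree` (an assumption for illustration; the changelog does not say which serializer Eynollah uses, and `serialize_page` is a hypothetical helper):

```python
import xml.etree.ElementTree as ET


def serialize_page(root: ET.Element, encoding: str = "utf-8") -> bytes:
    """Serialize an element tree with an XML declaration.

    The encoding label is written into the declaration exactly as given,
    so "utf8" and "utf-8" yield different declarations.
    """
    return ET.tostring(root, encoding=encoding, xml_declaration=True)


if __name__ == "__main__":
    page = ET.Element("PcGts")  # hypothetical root element name
    print(serialize_page(page, "utf-8").decode("utf-8"))
    print(serialize_page(page, "utf8").decode("utf-8"))
```

Both labels select the same codec in Python, but only `utf-8` is the registered charset name, so it is the safer label for the declaration.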

|

||||

|

||||

## [0.6.0rc1] - 2025-10-10

|

||||

|

||||

Fixed:

|

||||

|

||||

* continue processing when no columns detected but text regions exist

|

||||

* convert marginalia to main text if no main text is present

|

||||

* reset deskewing angle to 0° when text covers <30% image area and detected angle >45°

|

||||

* :fire: polygons: avoid invalid paths (use `Polygon.buffer()` instead of dilation etc.)

|

||||

* `return_boxes_of_images_by_order_of_reading_new`: avoid Numpy.dtype mismatch, simplify

|

||||

* `return_boxes_of_images_by_order_of_reading_new`: log any exceptions instead of ignoring

|

||||

* `filter_contours_without_textline_inside`: avoid removing from duplicate lists twice

|

||||

* `get_marginals`: exit early if no peaks found to avoid spurious overlap mask

|

||||

* `get_smallest_skew`: after shifting search range of rotation angle, use overall best result

|

||||

* Dockerfile: fix CUDA installation (cuDNN contested between Torch and TF due to extra OCR)

|

||||

* OCR: re-instate missing methods and fix `utils_ocr` function calls

|

||||

* mbreorder/enhancement CLIs: missing imports

|

||||

* :fire: writer: `SeparatorRegion` needs `SeparatorRegionType` (not `ImageRegionType`), f458e3e

|

||||

* tests: switch from `pytest-subtests` to `parametrize` so we can use `pytest-isolate`

|

||||

(so CUDA memory gets freed between tests if running on GPU)
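
The deskewing rule in the list above (reset to 0° when text covers less than 30% of the image area and the detected angle exceeds 45°) can be sketched as below. The function name, its parameters, and the use of the absolute angle are assumptions made for illustration, not Eynollah's actual code.

```python
def effective_skew_angle(
    detected_angle_deg: float,
    text_area: float,
    image_area: float,
    min_text_ratio: float = 0.30,  # threshold taken from the changelog entry
    max_angle_deg: float = 45.0,   # threshold taken from the changelog entry
) -> float:
    """Return the angle to deskew by, discarding implausible estimates.

    A large angle detected on a page with little text is treated as
    unreliable and replaced by 0 degrees.
    """
    if image_area <= 0:
        raise ValueError("image_area must be positive")
    text_ratio = text_area / image_area
    # Assumption: the sign of the angle does not matter for the check.
    if text_ratio < min_text_ratio and abs(detected_angle_deg) > max_angle_deg:
        return 0.0
    return detected_angle_deg
```

Both conditions must hold for the reset, so a sparse page with a small angle, or a dense page with a large angle, keeps its detected value.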

|

||||

|

||||

Added:

|

||||

* :fire: `layout` CLI: new option `--model_version` to override default choices

|

||||

* test coverage for OCR options in `layout`

|

||||

* test coverage for table detection in `layout`

|

||||

* CI linting with ruff

|

||||

|

||||

Changed:

|

||||

|

||||

* polygons: slightly widen for regions and lines, increase for separators

|

||||

* various refactorings, some code style and identifier improvements

|

||||

* deskewing/multiprocessing: switch back to ProcessPoolExecutor (faster),

|

||||

but use shared memory if necessary, and switch back from `loky` to stdlib,

|

||||

and shutdown in `del()` instead of `atexit`

|

||||

* :fire: OCR: switch CNN-RNN model to `20250930` version compatible with TF 2.12 on CPU, too

|

||||

* OCR: allow running `-tr` without `-fl`, too

|

||||

* :fire: writer: use `@type='heading'` instead of `'header'` for headings

|

||||

* :fire: performance gains via refactoring (simplification, less copy-code, vectorization,

|

||||

avoiding unused calculations, avoiding unnecessary 3-channel image operations)

|

||||

* :fire: heuristic reading order detection: many improvements

|

||||

- contour vs splitter box matching:

|

||||

* contour must be contained in box exactly instead of heuristics

|

||||

* make fallback center matching, center must be contained in box

|

||||

- original vs deskewed contour matching:

|

||||

* same min-area filter on both sides

|

||||

* similar area score in addition to center proximity

|

||||

* avoid duplicate and missing mappings by allowing N:M

|

||||

matches and splitting+joining where necessary

|

||||

* CI: update+improve model caching
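
The "contour vs splitter box matching" change listed above (exact containment first, center containment as fallback) might look roughly like this. It is a sketch under assumptions: boxes are axis-aligned `(x_min, y_min, x_max, y_max)` tuples, the center is approximated by the mean of the contour vertices, and all names are hypothetical.

```python
from typing import Optional, Sequence, Tuple

Point = Tuple[float, float]
Box = Tuple[float, float, float, float]  # (x_min, y_min, x_max, y_max)


def box_contains_point(box: Box, point: Point) -> bool:
    """True if the point lies inside the box (borders included)."""
    x_min, y_min, x_max, y_max = box
    x, y = point
    return x_min <= x <= x_max and y_min <= y <= y_max


def match_contour_to_box(
    contour: Sequence[Point], boxes: Sequence[Box]
) -> Optional[int]:
    """Return the index of the box a contour belongs to, or None."""
    if not contour:
        return None
    # First pass: the whole contour must be contained in the box.
    for index, box in enumerate(boxes):
        if all(box_contains_point(box, p) for p in contour):
            return index
    # Fallback: only the contour's center must be contained in the box.
    center = (
        sum(x for x, _ in contour) / len(contour),
        sum(y for _, y in contour) / len(contour),
    )
    for index, box in enumerate(boxes):
        if box_contains_point(box, center):
            return index
    return None
```

A contour that straddles two boxes fails the first pass and is then assigned to whichever box holds its center.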

|

||||

|

||||

|

||||

## [0.5.0] - 2025-09-26

|

||||

|

||||

Fixed:

|

||||

|

||||

* restoring the contour in the original image caused an error due to an empty tuple, #154

|

||||

* removed NumPy warnings when calculating sigma and mean, #158

|

||||

* fixed bug in `separate_lines.py`, #124

|

||||

* Drop capitals are now handled separately from their corresponding textline

|

||||

* Marginals are now divided into left and right. Their reading order is written first for left marginals, then for right marginals, and within each side from top to bottom

|

||||

* Added a new page extraction model. Instead of bounding boxes, it outputs page contours in the XML file, improving results for skewed pages

|

||||

* Improved reading order for cases where a textline is segmented into multiple smaller textlines
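
The marginalia ordering described above (left marginals first, then right, each side top to bottom) can be illustrated with a short sketch. How Eynollah decides which side a marginal is on is not stated here; splitting at the page middle is an assumption, as are the `Marginal` fields and the function name.

```python
from typing import List, NamedTuple, Sequence


class Marginal(NamedTuple):
    region_id: str
    x_center: float  # horizontal center of the region
    y_top: float     # top edge of the region


def order_marginals(
    marginals: Sequence[Marginal], page_width: float
) -> List[Marginal]:
    """Order marginals: left side first, then right, each top to bottom."""
    middle = page_width / 2  # assumption: the page middle separates the sides
    left = [m for m in marginals if m.x_center < middle]
    right = [m for m in marginals if m.x_center >= middle]
    return (
        sorted(left, key=lambda m: m.y_top)
        + sorted(right, key=lambda m: m.y_top)
    )
```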

|

||||

|

||||

Changed:

|

||||

|

||||

* CLIs: read only allowed filename suffixes (image or XML) with `--dir_in`

|

||||

* CLIs: make all output options required, and `-i` / `-di` required but mutually exclusive

|

||||

* ocr CLI: drop redundant `-brb` in favour of just `-dib`

|

||||

* APIs: move all input/output path options from class (kwarg and attribute) to `run` kwarg

|

||||

* layout textlines: polygonal also without `-cl`

|

||||

|

||||

Added:

|

||||

|

||||

* `eynollah machine-based-reading-order` CLI to run reading order detection, #175

|

||||

* `eynollah enhancement` CLI to run image enhancement, #175

|

||||

* Improved models for page extraction and reading order detection, #175

|

||||

* For the lightweight version (layout and textline detection), thresholds are now assigned to the artificial class. Users can apply these thresholds to improve detection of isolated textlines and regions. To counteract the drawback of thresholding, the skeleton of the artificial class is used to keep lines as thin as possible (resolved issues #163 and #161)

|

||||

* Added and integrated trained CNN-RNN OCR models

|

||||

* Added and integrated a trained TrOCR model

|

||||

* Improved OCR detection to support vertical and curved textlines

|

||||

* Introduced a new machine-based reading order model with rotation augmentation

|

||||

* Optimized reading order speed by clustering text regions that belong to the same block, maintaining top-to-bottom order

|

||||

* Implemented text merging across textlines based on hyphenation when a line ends with a hyphen

|

||||

* Integrated image enhancement as a separate use case

|

||||

* Added reading order functionality on the layout level as a separate use case

|

||||

* CNN-RNN OCR models provide confidence scores for predictions

|

||||

* Added OCR visualization: predicted OCR can be overlaid on an image of the same size as the input

|

||||

* Introduced a threshold value for CNN-RNN OCR models, allowing users to filter out low-confidence textline predictions

|

||||

* For OCR, users can specify a single model by name instead of always using the default model

|

||||

* Under the OCR use case, if Ground Truth XMLs and images are available, textline image and corresponding text extraction can now be performed
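
The hyphenation-based text merging mentioned in the list above can be sketched as follows. This is a simplified illustration that treats only a trailing ASCII hyphen as a line-break hyphen; the function name and the exact rule are assumptions, not Eynollah's implementation.

```python
from typing import Iterable


def merge_textlines(lines: Iterable[str]) -> str:
    """Join textline strings into running text.

    A line ending with a hyphen is joined to the next line directly,
    dropping the hyphen; other lines are joined with a single space.
    """
    merged = ""
    for line in lines:
        text = line.strip()
        if not text:
            continue  # skip empty textlines
        if merged.endswith("-"):
            # The previous line ended mid-word: drop the hyphen and glue.
            merged = merged[:-1] + text
        elif merged:
            merged += " " + text
        else:
            merged = text
    return merged
```

A hyphen on the very last line is kept, since there is no following line to merge with.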

|

||||

|

||||

Merged PRs:

|

||||

|

||||

* better machine based reading order + layout and textline + ocr by @vahidrezanezhad in https://github.com/qurator-spk/eynollah/pull/175

|

||||

* CI: pypi by @kba in https://github.com/qurator-spk/eynollah/pull/154

|

||||

* CI: Use most recent actions/setup-python@v5 by @kba in https://github.com/qurator-spk/eynollah/pull/157

|

||||

* update docker by @bertsky in https://github.com/qurator-spk/eynollah/pull/159

|

||||

* Ocrd fixes by @kba in https://github.com/qurator-spk/eynollah/pull/167

|

||||

* Updating readme for eynollah use cases cli by @kba in https://github.com/qurator-spk/eynollah/pull/166

|

||||

* OCR-D processor: expose reading_order_machine_based by @bertsky in https://github.com/qurator-spk/eynollah/pull/171

|

||||

* prepare release v0.5.0: fix logging by @bertsky in https://github.com/qurator-spk/eynollah/pull/180

|

||||

* mb_ro_on_layout: remove copy-pasta code not actually used by @kba in https://github.com/qurator-spk/eynollah/pull/181

|

||||

* prepare release v0.5.0: improve CLI docstring, refactor I/O path options from class to run kwargs, increase test coverage by @bertsky in https://github.com/qurator-spk/eynollah/pull/182

|

||||

* prepare release v0.5.0: fix for OCR doit subtest by @bertsky in https://github.com/qurator-spk/eynollah/pull/183

|

||||

* Prepare release v0.5.0 by @kba in https://github.com/qurator-spk/eynollah/pull/178

|

||||

* updating eynollah README, how to use it for use cases by @vahidrezanezhad in https://github.com/qurator-spk/eynollah/pull/156

|

||||

* add feedback to command line interface by @michalbubula in https://github.com/qurator-spk/eynollah/pull/170

|

||||

|

||||

## [0.4.0] - 2025-04-07

|

||||

|

||||

Fixed:

|

||||

|

||||

* allow empty imports for optional dependencies

|

||||

* avoid Numpy warnings (empty slices etc.)

|

||||

* remove deprecated Numpy types

|

||||

* binarization CLI: make `dir_in` usable again

|

||||

|

||||

Added:

|

||||

|

||||

* Continuous Deployment via Dockerhub and GHCR

|

||||

* CI: also test CLIs and OCR-D

|

||||

* CI: measure code coverage, annotate+upload reports

|

||||

* smoke-test: also check results

|

||||

* smoke-test: also test sbb-binarize

|

||||

* ocrd-test: analog for OCR-D CLI (segment and binarize)

|

||||

* pytest: add asserts, extend coverage, use subtests for various options

|

||||

* pytest: also add binarization

|

||||

* pytest: add `dir_in` mode (segment and binarize)

|

||||

* make install: control optional dependencies via `EXTRAS` variable

|

||||

* OCR-D: expose and describe recently added parameters:

|

||||

- `ignore_page_extraction`

|

||||

- `allow_enhancement`

|

||||

- `textline_light`

|

||||

- `right_to_left`

|

||||

* OCR-D: :fire: integrate ocrd-sbb-binarize

|

||||

* add detection confidence in `TextRegion/Coords/@conf`

|

||||

(but only in light version and not for marginalia)
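
The `EXTRAS` variable mentioned above is turned into a pip requirement by the Makefile expression `.$(and $(EXTRAS),[$(EXTRAS)])`: the bracketed suffix is appended only when `EXTRAS` is non-empty. The same logic, expressed as a small sketch with a hypothetical helper name:

```python
from typing import List


def pip_install_command(extras: str = "", editable: bool = False) -> List[str]:
    """Build the pip command that the install targets would run.

    `extras` is a comma-separated list such as "OCR,plotting"; an empty
    string means a plain install of the current directory.
    """
    target = "." + (f"[{extras}]" if extras else "")
    command = ["pip", "install"]
    if editable:
        command.append("-e")  # corresponds to the install-dev target
    command.append(target)
    return command
```

For example, `make install EXTRAS=OCR,plotting` corresponds to `pip install .[OCR,plotting]`, while a bare `make install` runs `pip install .`.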

|

||||

|

||||

Changed:

|

||||

|

||||

* Docker build: simplify, w/ `OCR`, conform to OCR-D spec

|

||||

* OCR-D: :fire: migrate to core v3

|

||||

- initialize+setup only once

|

||||

- restrict number of parallel page workers to 1

|

||||

(conflicts with existing multiprocessing; TF parts not mp-compatible)

|

||||

- do query maximally annotated page image

|

||||

(but filtering existing binarization/cropping/deskewing),

|

||||

rebase (as new `@imageFilename`) if necessary

|

||||

- add behavioural docstring

|

||||

|

||||

* :fire: refactor `Eynollah` API:

|

||||

- no more data (kw)args at init,

|

||||

but kwargs `dir_in` / `image_filename` for `run()`

|

||||

- no more data attributes, but function kwargs

|

||||

(`pcgts`, `image_filename`, `image_pil`, `dir_in`, `override_dpi`)

|

||||

- remove redundant TF session/model loaders

|

||||

(only load once during init)

|

||||

- factor `run_single()` out of `run()` (loop body),

|

||||

expose for independent calls (like OCR-D)

|

||||

- expose `cache_images()`, add `dpi` kwarg, set `self._imgs`

|

||||

- single-image mode writes PAGE file result

|

||||

(just as directory mode does)

|

||||

|

||||

* CLI: assertions (instead of print+exit) for options checks

|

||||

* light mode: fine-tune ratio to better detect a region as header

|

||||

|

||||

## [0.3.1] - 2024-08-27

|

||||

|

||||

Fixed:

|

||||

|

||||

* regression in OCR-D processor, #106

|

||||

* Expected Ptr<cv::UMat> for argument 'contour', #110

|

||||

* Memory usage explosion with very narrow images (e.g. book spine), #67

|

||||

|

||||

## [0.3.0] - 2023-05-13

|

||||

|

||||

Changed:

|

||||

|

||||

* Eynollah light integration, #86

|

||||

* use PEP420 style qurator namespace, #97

|

||||

* set_memory_growth to all GPU devices alike, #100

|

||||

|

||||

Fixed:

|

||||

|

||||

* PAGE-XML coordinates can have self-intersections, #20

|

||||

* reading order representation (XML order vs index), #22

|

||||

* allow cropping separately, #26

|

||||

* Order of regions, #51

|

||||

* error while running inference, #75

|

||||

* Eynollah crashes while processing image, #77

|

||||

* ValueError: bad marshal data, #87

|

||||

* contour extraction: inhomogeneous shape, #92

|

||||

* Confusing model dir variables, #93

|

||||

* New release?, #96

|

||||

|

||||

## [0.2.0] - 2023-03-24

|

||||

|

||||

Changed:

|

||||

|

||||

* Convert default model from HDFS to TF SavedModel, #91

|

||||

|

||||

Added:

|

||||

|

||||

* parameter `tables` to toggle table detection, #91

|

||||

* default model described in ocrd-tool.json, #91

|

||||

|

||||

## [0.1.0] - 2023-03-22

|

||||

|

||||

Fixed:

|

||||

|

||||

* Do not produce spurious `TextEquiv`, #68

|

||||

* Less spammy logging, #64, #65, #71

|

||||

|

||||

Changed:

|

||||

|

||||

* Upgrade to tensorflow 2.4.0, #74

|

||||

* Improved README

|

||||

* CI: test for python 3.7+, #90

|

||||

|

||||

## [0.0.11] - 2022-02-02

|

||||

|

||||

Fixed:

|

||||

|

||||

* `models` parameter should have `content-type`, #61, OCR-D/core#777

|

||||

|

||||

## [0.0.10] - 2021-09-27

|

||||

|

||||

Fixed:

|

||||

|

||||

* call to `build_pagexml_no_full_layout` for empty pages, #52

|

||||

|

||||

## [0.0.9] - 2021-08-16

|

||||

|

||||

Added:

|

||||

|

||||

* Table detection, #48

|

||||

|

||||

Fixed:

|

||||

|

||||

* Catch exception, #47

|

||||

|

||||

## [0.0.8] - 2021-07-27

|

||||

## [0.0.7] - 2021-07-27

|

||||

|

||||

Fixed:

|

||||

|

||||

|

|

@ -318,19 +50,6 @@ Fixed:

|

|||

Initial release

|

||||

|

||||

<!-- link-labels -->

|

||||

[0.7.0]: ../../compare/v0.7.0...v0.6.0

|

||||

[0.6.0]: ../../compare/v0.6.0...v0.6.0rc2

|

||||

[0.6.0rc2]: ../../compare/v0.6.0rc2...v0.6.0rc1

|

||||

[0.6.0rc1]: ../../compare/v0.6.0rc1...v0.5.0

|

||||

[0.5.0]: ../../compare/v0.5.0...v0.4.0

|

||||

[0.4.0]: ../../compare/v0.4.0...v0.3.1

|

||||

[0.3.1]: ../../compare/v0.3.1...v0.3.0

|

||||

[0.3.0]: ../../compare/v0.3.0...v0.2.0

|

||||

[0.2.0]: ../../compare/v0.2.0...v0.1.0

|

||||

[0.1.0]: ../../compare/v0.1.0...v0.0.11

|

||||

[0.0.11]: ../../compare/v0.0.11...v0.0.10

|

||||

[0.0.10]: ../../compare/v0.0.10...v0.0.9

|

||||

[0.0.9]: ../../compare/v0.0.9...v0.0.8

|

||||

[0.0.8]: ../../compare/v0.0.8...v0.0.7

|

||||

[0.0.7]: ../../compare/v0.0.7...v0.0.6

|

||||

[0.0.6]: ../../compare/v0.0.6...v0.0.5

|

||||

|

|

|

|||

49

Dockerfile


@ -1,49 +0,0 @
ARG DOCKER_BASE_IMAGE
FROM $DOCKER_BASE_IMAGE

ARG VCS_REF
ARG BUILD_DATE
LABEL \
    maintainer="https://ocr-d.de/en/contact" \
    org.label-schema.vcs-ref=$VCS_REF \
    org.label-schema.vcs-url="https://github.com/qurator-spk/eynollah" \
    org.label-schema.build-date=$BUILD_DATE \
    org.opencontainers.image.vendor="DFG-Funded Initiative for Optical Character Recognition Development" \
    org.opencontainers.image.title="Eynollah" \
    org.opencontainers.image.description="" \
    org.opencontainers.image.source="https://github.com/qurator-spk/eynollah" \
    org.opencontainers.image.documentation="https://github.com/qurator-spk/eynollah/blob/${VCS_REF}/README.md" \
    org.opencontainers.image.revision=$VCS_REF \
    org.opencontainers.image.created=$BUILD_DATE \
    org.opencontainers.image.base.name=ocrd/core-cuda-tf2

ENV DEBIAN_FRONTEND=noninteractive
# set proper locales
ENV PYTHONIOENCODING=utf8
ENV LANG=C.UTF-8
ENV LC_ALL=C.UTF-8

# avoid HOME/.local/share (hard to predict USER here)
# so let XDG_DATA_HOME coincide with fixed system location
# (can still be overridden by derived stages)
ENV XDG_DATA_HOME /usr/local/share
# avoid the need for an extra volume for persistent resource user db
# (i.e. XDG_CONFIG_HOME/ocrd/resources.yml)
ENV XDG_CONFIG_HOME /usr/local/share/ocrd-resources

WORKDIR /build/eynollah
COPY . .
COPY ocrd-tool.json .
# prepackage ocrd-tool.json as ocrd-all-tool.json
RUN ocrd ocrd-tool ocrd-tool.json dump-tools > $(dirname $(ocrd bashlib filename))/ocrd-all-tool.json
# prepackage ocrd-all-module-dir.json
RUN ocrd ocrd-tool ocrd-tool.json dump-module-dirs > $(dirname $(ocrd bashlib filename))/ocrd-all-module-dir.json
# install everything and reduce image size
RUN make install EXTRAS=OCR && rm -rf /build/eynollah
# fixup for broken cuDNN installation (Torch pulls in 8.5.0, which is incompatible with Tensorflow)
RUN pip install nvidia-cudnn-cu11==8.6.0.163
# smoke test
RUN eynollah --help

WORKDIR /data
VOLUME /data

118

Makefile


|

|

@ -1,24 +1,5 @@

|

|||

PYTHON ?= python3

|

||||

PIP ?= pip3

|

||||

EXTRAS ?=

|

||||

|

||||

# DOCKER_BASE_IMAGE = artefakt.dev.sbb.berlin:5000/sbb/ocrd_core:v2.68.0

|

||||

DOCKER_BASE_IMAGE ?= docker.io/ocrd/core-cuda-tf2:latest

|

||||

DOCKER_TAG ?= ocrd/eynollah

|

||||

DOCKER ?= docker

|

||||

WGET = wget -O

|

||||

|

||||

#SEG_MODEL := https://qurator-data.de/eynollah/2021-04-25/models_eynollah.tar.gz

|

||||

#SEG_MODEL := https://qurator-data.de/eynollah/2022-04-05/models_eynollah_renamed.tar.gz

|

||||

# SEG_MODEL := https://qurator-data.de/eynollah/2022-04-05/models_eynollah.tar.gz

|

||||

#SEG_MODEL := https://github.com/qurator-spk/eynollah/releases/download/v0.3.0/models_eynollah.tar.gz

|

||||

#SEG_MODEL := https://github.com/qurator-spk/eynollah/releases/download/v0.3.1/models_eynollah.tar.gz

|

||||

#SEG_MODEL := https://zenodo.org/records/17194824/files/models_layout_v0_5_0.tar.gz?download=1

|

||||

EYNOLLAH_MODELS_URL := https://zenodo.org/records/17580627/files/models_all_v0_7_0.zip

|

||||

EYNOLLAH_MODELS_ZIP = $(notdir $(EYNOLLAH_MODELS_URL))

|

||||

EYNOLLAH_MODELS_DIR = $(EYNOLLAH_MODELS_ZIP:%.zip=%)

|

||||

|

||||

PYTEST_ARGS ?= -vv --isolate

|

||||

EYNOLLAH_MODELS ?= $(PWD)/models_eynollah

|

||||

export EYNOLLAH_MODELS

|

||||

|

||||

# BEGIN-EVAL makefile-parser --make-help Makefile

|

||||

|

||||

|

|

@ -26,106 +7,37 @@ help:

|

|||

@echo ""

|

||||

@echo " Targets"

|

||||

@echo ""

|

||||

@echo " docker Build Docker image"

|

||||

@echo " build Build Python source and binary distribution"

|

||||

@echo " install Install package with pip"

|

||||

@echo " models Download and extract models to $(PWD)/models_eynollah"

|

||||

@echo " install Install with pip"

|

||||

@echo " install-dev Install editable with pip"

|

||||

@echo " deps-test Install test dependencies with pip"

|

||||

@echo " models Download and extract models to $(CURDIR):"

|

||||

@echo " $(EYNOLLAH_MODELS_DIR)"

|

||||

@echo " smoke-test Run simple CLI check"

|

||||

@echo " ocrd-test Run OCR-D CLI check"

|

||||

@echo " test Run unit tests"

|

||||

@echo ""

|

||||

@echo " Variables"

|

||||

@echo " EXTRAS comma-separated list of features (like 'OCR,plotting') for 'install' [$(EXTRAS)]"

|

||||

@echo " DOCKER_TAG Docker image tag for 'docker' [$(DOCKER_TAG)]"

|

||||

@echo " PYTEST_ARGS pytest args for 'test' (Set to '-s' to see log output during test execution, '-vv' to see individual tests. [$(PYTEST_ARGS)]"

|

||||

@echo " ALL_MODELS URL of archive of all models [$(ALL_MODELS)]"

|

||||

@echo ""

|

||||

|

||||

# END-EVAL

|

||||

|

||||

# Download and extract models to $(PWD)/models_layout_v0_6_0

|

||||

models: $(EYNOLLAH_MODELS_DIR)

|

||||

|

||||

# do not download these files if we already have the directories

|

||||

.INTERMEDIATE: $(EYNOLLAH_MODELS_ZIP)

|

||||

# Download and extract models to $(PWD)/models_eynollah

|

||||

models: models_eynollah

|

||||

|

||||

$(EYNOLLAH_MODELS_ZIP):

|

||||

$(WGET) $@ $(EYNOLLAH_MODELS_URL)

|

||||

models_eynollah: models_eynollah.tar.gz

|

||||

tar xf models_eynollah.tar.gz

|

||||

|

||||

$(EYNOLLAH_MODELS_DIR): $(EYNOLLAH_MODELS_ZIP)

|

||||

unzip $<

|

||||

|

||||

build:

|

||||

$(PIP) install build

|

||||

$(PYTHON) -m build .

|

||||

models_eynollah.tar.gz:

|

||||

wget 'https://qurator-data.de/eynollah/models_eynollah.tar.gz'

|

||||

|

||||

# Install with pip

|

||||

install:

|

||||

$(PIP) install .$(and $(EXTRAS),[$(EXTRAS)])

|

||||

pip install .

|

||||

|

||||

# Install editable with pip

|

||||

install-dev:

|

||||

$(PIP) install -e .$(and $(EXTRAS),[$(EXTRAS)])

|

||||

pip install -e .

|

||||

|

||||

deps-test:

|

||||

$(PIP) install -r requirements-test.txt

|

||||

|

||||

smoke-test: TMPDIR != mktemp -d

|

||||

smoke-test: tests/resources/2files/kant_aufklaerung_1784_0020.tif

|

||||

# layout analysis:

|

||||

eynollah -m $(CURDIR) layout -i $< -o $(TMPDIR)

|

||||

fgrep -q http://schema.primaresearch.org/PAGE/gts/pagecontent/2019-07-15 $(TMPDIR)/$(basename $(<F)).xml

|

||||

fgrep -c -e TextRegion -e ImageRegion -e SeparatorRegion $(TMPDIR)/$(basename $(<F)).xml

|

||||

# layout, directory mode (skip one, add one):

|

||||

eynollah -m $(CURDIR) layout -di $(<D) -o $(TMPDIR)

|

||||

test -s $(TMPDIR)/euler_rechenkunst01_1738_0025.xml

|

||||

# mbreorder, directory mode (overwrite):

|

||||

eynollah -m $(CURDIR) machine-based-reading-order -di $(<D) -o $(TMPDIR)

|

||||

fgrep -q http://schema.primaresearch.org/PAGE/gts/pagecontent/2019-07-15 $(TMPDIR)/$(basename $(<F)).xml

|

||||

fgrep -c -e RegionRefIndexed $(TMPDIR)/$(basename $(<F)).xml

|

||||

# binarize:

|

||||

eynollah -m $(CURDIR) binarization -i $< -o $(TMPDIR)/$(<F)

|

||||

test -s $(TMPDIR)/$(<F)

|

||||

@set -x; test "$$(identify -format '%w %h' $<)" = "$$(identify -format '%w %h' $(TMPDIR)/$(<F))"

|

||||

# enhance:

|

||||

eynollah -m $(CURDIR) enhancement -sos -i $< -o $(TMPDIR) -O

|

||||

test -s $(TMPDIR)/$(<F)

|

||||

@set -x; test "$$(identify -format '%w %h' $<)" = "$$(identify -format '%w %h' $(TMPDIR)/$(<F))"

|

||||

$(RM) -r $(TMPDIR)

|

||||

|

||||

ocrd-test: export OCRD_MISSING_OUTPUT := ABORT

|

||||

ocrd-test: TMPDIR != mktemp -d

|

||||

ocrd-test: tests/resources/2files/kant_aufklaerung_1784_0020.tif

|

||||

cp $< $(TMPDIR)

|

||||

ocrd workspace -d $(TMPDIR) init

|

||||

ocrd workspace -d $(TMPDIR) add -G OCR-D-IMG -g PHYS_0020 -i OCR-D-IMG_0020 $(<F)

|

||||

ocrd-eynollah-segment -w $(TMPDIR) -I OCR-D-IMG -O OCR-D-SEG -P models $(CURDIR)

|

||||

result=$$(ocrd workspace -d $(TMPDIR) find -G OCR-D-SEG); \

|

||||

fgrep -q http://schema.primaresearch.org/PAGE/gts/pagecontent/2019-07-15 $(TMPDIR)/$$result && \

|

||||

fgrep -c -e TextRegion -e ImageRegion -e SeparatorRegion $(TMPDIR)/$$result

|

||||

ocrd-sbb-binarize -w $(TMPDIR) -I OCR-D-IMG -O OCR-D-BIN -P model $(CURDIR)

|

||||

ocrd-sbb-binarize -w $(TMPDIR) -I OCR-D-SEG -O OCR-D-SEG-BIN -P model $(CURDIR) -P operation_level region

|

||||

$(RM) -r $(TMPDIR)

|

||||

smoke-test:

|

||||

eynollah -i tests/resources/kant_aufklaerung_1784_0020.tif -o . -m $(PWD)/models_eynollah

|

||||

|

||||

# Run unit tests

|

||||

test: export EYNOLLAH_MODELS_DIR := $(CURDIR)

|

||||

test:

|

||||

$(PYTHON) -m pytest tests --durations=0 --continue-on-collection-errors $(PYTEST_ARGS)

|

||||

|

||||

coverage:

|

||||

coverage erase

|

||||

$(MAKE) test PYTHON="coverage run"

|

||||

coverage report -m

|

||||

|

||||

# Build docker image

|

||||

docker:

|

||||

$(DOCKER) build \

|

||||

--build-arg DOCKER_BASE_IMAGE=$(DOCKER_BASE_IMAGE) \

|

||||

--build-arg VCS_REF=$$(git rev-parse --short HEAD) \

|

||||

--build-arg BUILD_DATE=$$(date -u +"%Y-%m-%dT%H:%M:%SZ") \

|

||||

-t $(DOCKER_TAG) .

|

||||

|

||||

.PHONY: models build install install-dev test smoke-test ocrd-test coverage docker help

|

||||

pytest tests

|

||||

|

|

|

|||

268

README.md


|

|

@ -1,212 +1,122 @@

|

|||

# Eynollah

|

||||

|

||||

> Document Layout Analysis, Binarization and OCR with Deep Learning and Heuristics

|

||||

|

||||

[](https://pypi.python.org/pypi/eynollah)

|

||||

[](https://pypi.org/project/eynollah/)

|

||||

[](https://github.com/qurator-spk/eynollah/actions/workflows/test-eynollah.yml)

|

||||

[](https://github.com/qurator-spk/eynollah/actions/workflows/build-docker.yml)

|

||||

[](https://opensource.org/license/apache-2-0/)

|

||||

[](https://doi.org/10.1145/3604951.3605513)

|

||||

> Document Layout Analysis

|

||||

|

||||

|

||||

|

||||

## Features

|

||||

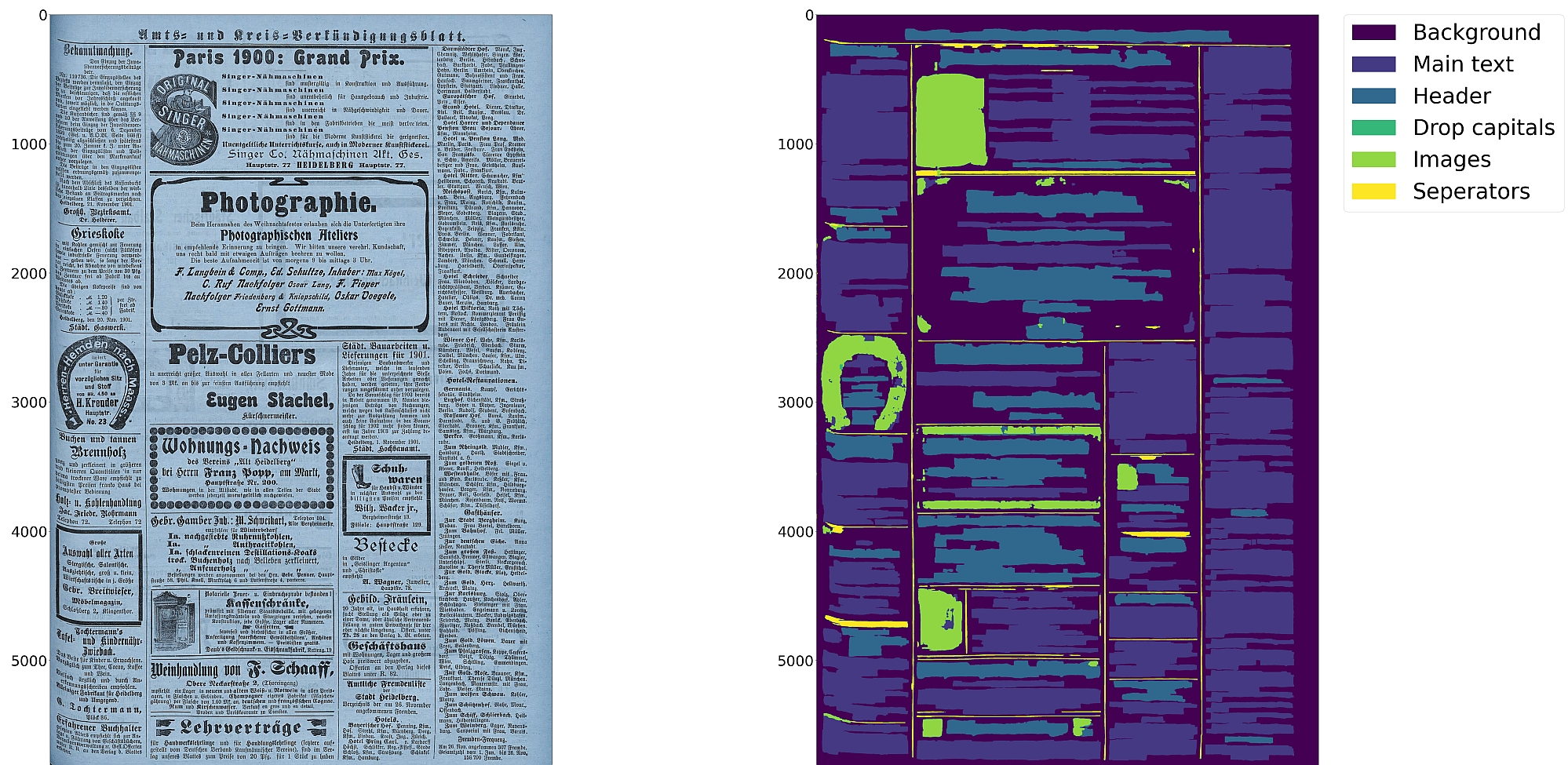

* Document layout analysis using pixelwise segmentation models with support for 10 segmentation classes:

|

||||

* background, [page border](https://ocr-d.de/en/gt-guidelines/trans/lyRand.html), [text region](https://ocr-d.de/en/gt-guidelines/trans/lytextregion.html#textregionen__textregion_), [text line](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_TextLineType.html), [header](https://ocr-d.de/en/gt-guidelines/trans/lyUeberschrift.html), [image](https://ocr-d.de/en/gt-guidelines/trans/lyBildbereiche.html), [separator](https://ocr-d.de/en/gt-guidelines/trans/lySeparatoren.html), [marginalia](https://ocr-d.de/en/gt-guidelines/trans/lyMarginalie.html), [initial](https://ocr-d.de/en/gt-guidelines/trans/lyInitiale.html), [table](https://ocr-d.de/en/gt-guidelines/trans/lyTabellen.html)

|

||||

* Textline segmentation to bounding boxes or polygons (contours) including for curved lines and vertical text

|

||||

* Document image binarization with pixelwise segmentation or hybrid CNN-Transformer models

|

||||

* Text recognition (OCR) with CNN-RNN or TrOCR models

|

||||

* Detection of reading order (left-to-right or right-to-left) using heuristics or trainable models

|

||||

* Output in [PAGE-XML](https://github.com/PRImA-Research-Lab/PAGE-XML)

|

||||

* [OCR-D](https://github.com/qurator-spk/eynollah#use-as-ocr-d-processor) interface

|

||||

## Introduction

|

||||

This tool performs document layout analysis (segmentation) from image data and returns the results as [PAGE-XML](https://github.com/PRImA-Research-Lab/PAGE-XML).

|

||||

|

||||

:warning: Development is focused on achieving the best quality of results for a wide variety of historical

|

||||

documents using a combination of multiple deep learning models and heuristics; therefore processing can be slow.

|

||||

It can currently detect the following layout classes/elements:

|

||||

* [Border](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_BorderType.html)

|

||||

* [Textregion](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_TextRegionType.html)

|

||||

* [Textline](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_TextLineType.html)

|

||||

* [Image](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_ImageRegionType.html)

|

||||

* [Separator](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_SeparatorRegionType.html)

|

||||

* [Marginalia](https://ocr-d.de/en/gt-guidelines/trans/lyMarginalie.html)

|

||||

* [Initial (Drop Capital)](https://ocr-d.de/en/gt-guidelines/trans/lyInitiale.html)

|

||||

|

||||

In addition, the tool can be used to detect the _[ReadingOrder](https://ocr-d.de/en/gt-guidelines/trans/lyLeserichtung.html)_ of regions. The final goal is to feed the output to an OCR model.

|

||||

|

||||

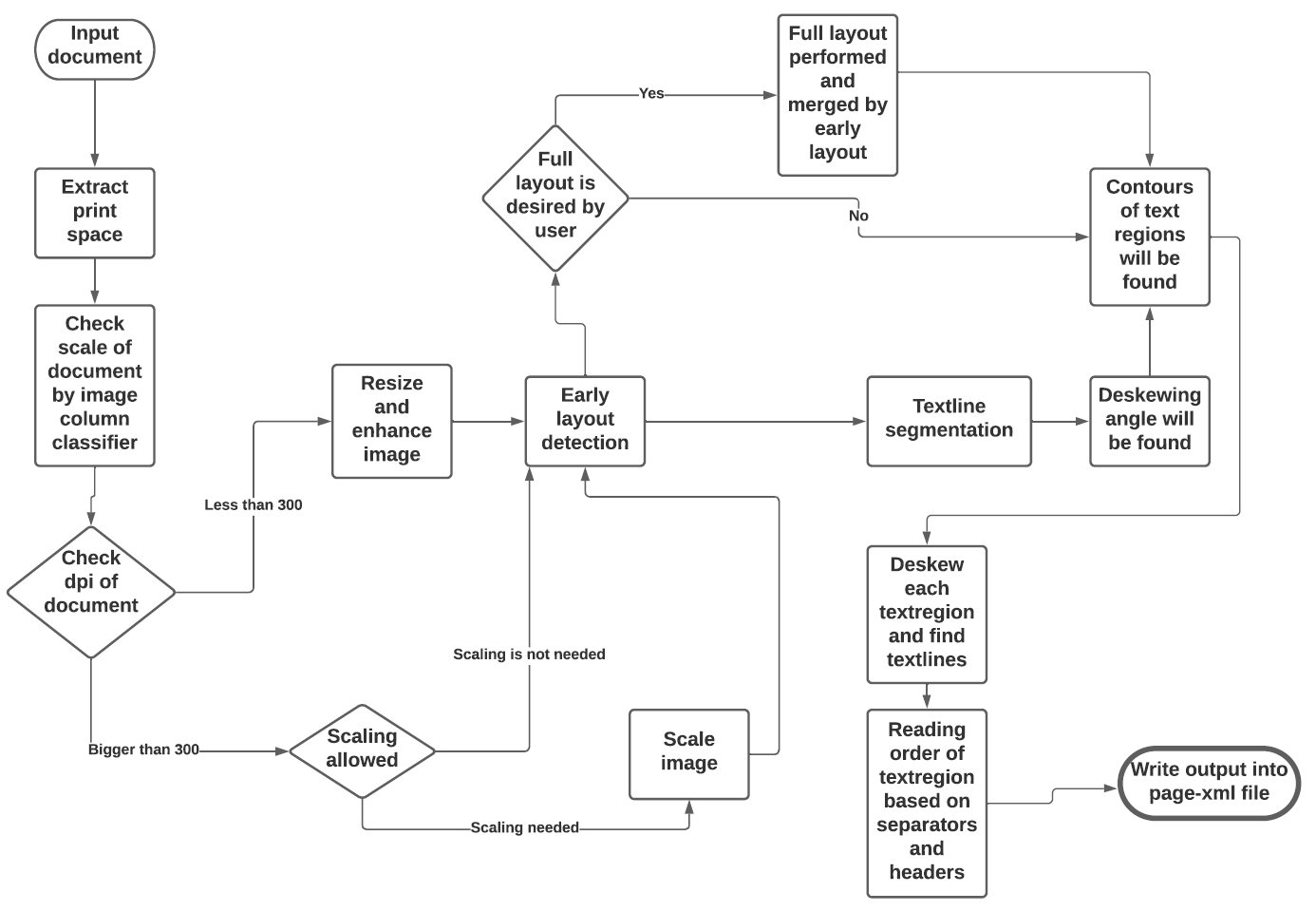

The tool uses a combination of various models and heuristics (see flowchart below for the different stages and how they interact):

|

||||

* [Border detection](https://github.com/qurator-spk/eynollah#border-detection)

|

||||

* [Layout detection](https://github.com/qurator-spk/eynollah#layout-detection)

|

||||

* [Textline detection](https://github.com/qurator-spk/eynollah#textline-detection)

|

||||

* [Image enhancement](https://github.com/qurator-spk/eynollah#image-enhancement)

|

||||

* [Scale classification](https://github.com/qurator-spk/eynollah#scale-classification)

|

||||

* [Heuristic methods](https://github.com/qurator-spk/eynollah#heuristic-methods)

|

||||

|

||||

The first three stages are based on [pixel-wise segmentation](https://github.com/qurator-spk/sbb_pixelwise_segmentation).

|

||||

|

||||

|

||||

|

||||

## Border detection

|

||||

For the purpose of text recognition (OCR) and in order to avoid noise being introduced from texts outside the printspace, one first needs to detect the border of the printed frame. This is done by a binary pixel-wise-segmentation model trained on a dataset of 2,000 documents where about 1,200 of them come from the [dhSegment](https://github.com/dhlab-epfl/dhSegment/) project (you can download the dataset from [here](https://github.com/dhlab-epfl/dhSegment/releases/download/v0.2/pages.zip)) and the remainder having been annotated in SBB. For border detection, the model needs to be fed with the whole image at once rather than separated in patches.

|

||||

|

||||

## Layout detection

|

||||

As a next step, text regions need to be identified by means of layout detection. Again a pixel-wise segmentation model was trained on 131 labeled images from the SBB digital collections, including some data augmentation. Since this tool targets historical documents, we consider the main region types to be text regions, separators, images, tables and background, each with their own subclasses, e.g. in the case of text regions, subclasses like header/heading, drop capital, main body text etc. While it would be desirable to detect and classify each of these classes in a granular way, this is limited by the lack of a suitably large and balanced training set. Accordingly, the current version of this tool is focussed on the main region types background, text region, image and separator.

|

||||

|

||||

## Textline detection

|

||||

In a subsequent step, binary pixel-wise segmentation is used again to classify pixels in a document that constitute textlines. For textline segmentation, a model was initially trained on documents with only one column/block of text and some augmentation with regard to scaling. By fine-tuning the parameters also for multi-column documents, additional training data was produced that resulted in a much more robust textline detection model.

|

||||

|

||||

## Image enhancement

|

||||

This is an image-to-image model whose input is a low-quality version of an image and whose label is the original image. Since no ground truth (GT) was available for this task, we degraded the quality of documents from SBB and then fed them into the model.
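
The pairing of a degraded input with its original as label can be illustrated with a minimal sketch. This is not the project's training code: the degradation used here (block averaging followed by nearest-neighbour upscaling) and the function names `downscale`, `upscale` and `make_training_pair` are assumptions chosen only to show the idea on a small grayscale grid.

```python
def downscale(image, factor):
    """Average non-overlapping factor x factor blocks of a 2D grayscale image."""
    height, width = len(image), len(image[0])
    out = []
    for y in range(0, height - height % factor, factor):
        row = []
        for x in range(0, width - width % factor, factor):
            block = [image[y + dy][x + dx]
                     for dy in range(factor) for dx in range(factor)]
            row.append(sum(block) // len(block))
        out.append(row)
    return out


def upscale(image, factor):
    """Nearest-neighbour upscaling: repeat every pixel and every row."""
    return [[px for px in row for _ in range(factor)]
            for row in image for _ in range(factor)]


def make_training_pair(original, factor=2):
    """Return (degraded input, original label) for an image-to-image model."""
    degraded = upscale(downscale(original, factor), factor)
    return degraded, original


original = [
    [0, 0, 255, 255],
    [0, 0, 255, 255],
    [0, 255, 0, 255],
    [255, 0, 255, 0],
]
degraded, label = make_training_pair(original)
```

The degraded image keeps the size of the original, so input and label stay pixel-aligned, while fine detail (the checkerboard in the lower half) is lost.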

|

||||

|

||||

## Scale classification

|

||||

This is simply an image classifier that classifies images by their scale or, more precisely, by their number of columns.

|

||||

|

||||

## Heuristic methods

|

||||

Some heuristic methods are also employed to further improve the model predictions:

|

||||

* After border detection, the largest contour is determined by a bounding box, and the image cropped to these coordinates.

|

||||

* For text region detection, the image is scaled up to make it easier for the model to detect background space between text regions.

|

||||

* A minimum area is defined for text regions in relation to the overall image dimensions, so that very small regions that are noise can be filtered out.

|

||||

* Deskewing is applied on the text region level (due to regions having different degrees of skew) in order to improve the textline segmentation result.

|

||||

* After deskewing, a calculation of the pixel distribution on the X-axis allows the separation of textlines (foreground) and background pixels.

|

||||

* Finally, using the derived coordinates, bounding boxes are determined for each textline.
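
The last two heuristics can be sketched as follows. This is a simplified illustration, not the tool's implementation: it assumes a deskewed binary mask (1 = textline pixel, 0 = background) given as nested lists, and the names `row_profile`, `textline_bands` and `band_bounding_box` as well as the `min_pixels` parameter are hypothetical.

```python
def row_profile(mask):
    """Count foreground pixels in each row, i.e. sum along the X-axis."""
    return [sum(row) for row in mask]


def textline_bands(mask, min_pixels=1):
    """Return (top, bottom) row ranges, bottom exclusive, of consecutive
    rows that contain at least min_pixels foreground pixels."""
    bands = []
    start = None
    for y, count in enumerate(row_profile(mask)):
        if count >= min_pixels and start is None:
            start = y                      # a textline band begins
        elif count < min_pixels and start is not None:
            bands.append((start, y))       # background row ends the band
            start = None
    if start is not None:                  # band touching the bottom edge
        bands.append((start, len(mask)))
    return bands


def band_bounding_box(mask, top, bottom):
    """Tight box (x_min, y_min, x_max, y_max), inclusive, for one band."""
    xs = [x for row in mask[top:bottom] for x, value in enumerate(row) if value]
    return (min(xs), top, max(xs), bottom - 1)


mask = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 1, 1, 0, 0],
    [0, 0, 0, 0, 0],
    [0, 0, 1, 1, 1],
    [0, 0, 0, 0, 0],
]
bands = textline_bands(mask)
boxes = [band_bounding_box(mask, top, bottom) for top, bottom in bands]
```

Rows without foreground pixels act as separators between textlines, which is why deskewing beforehand matters: on a skewed region the rows of neighbouring lines overlap and the profile no longer drops to background between them.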

|

||||

|

||||

## Installation

|

||||

Python `3.8-3.11` with Tensorflow `<2.13` on Linux are currently supported.

|

||||

For (limited) GPU support the CUDA toolkit needs to be installed.

|

||||

A working config is CUDA `11.8` with cuDNN `8.6`.

|

||||

`pip install .` or

|

||||

|

||||

You can either install from PyPI

|

||||

`pip install -e .` for editable installation

|

||||

|

||||

```

|

||||

pip install eynollah

|

||||

```

|

||||

Alternatively, you can also use `make` with these targets:

|

||||

|

||||

or clone the repository, enter it and install (editable) with

|

||||

`make install` or

|

||||

|

||||

```

|

||||

git clone git@github.com:qurator-spk/eynollah.git

|

||||

cd eynollah; pip install -e .

|

||||

```

|

||||

`make install-dev` for editable installation

|

||||

|

||||

Alternatively, you can run `make install` or `make install-dev` for editable installation.

|

||||

### Models

|

||||

|

||||

To also install the dependencies for the OCR engines:

|

||||

In order to run this tool you also need trained models. You can download our pretrained models from [qurator-data.de](https://qurator-data.de/eynollah/).

|

||||

|

||||

```

|

||||

pip install "eynollah[OCR]"

|

||||

# or

|

||||

make install EXTRAS=OCR

|

||||

```

|

||||

|

||||

### Docker

|

||||

|

||||

Use

|

||||

|

||||

```

|

||||

docker pull ghcr.io/qurator-spk/eynollah:latest

|

||||

```

|

||||

|

||||

When using Eynollah with Docker, see [`docker.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/docker.md).

|

||||

|

||||

## Models

|

||||

|

||||

Pretrained models can be downloaded from [Zenodo](https://zenodo.org/records/17194824) or [Hugging Face](https://huggingface.co/SBB?search_models=eynollah).

|

||||

|

||||

For model documentation and model cards, see [`models.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/models.md).

|

||||

|

||||

## Training

|

||||

|

||||

To train your own model with Eynollah, see [`train.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/train.md) and use the tools in the [`train`](https://github.com/qurator-spk/eynollah/tree/main/train) folder.

|

||||

Alternatively, running `make models` will download and extract models to `$(PWD)/models_eynollah`.

|

||||

|

||||

## Usage

|

||||

|

||||

Eynollah supports five use cases:

|

||||

1. [layout analysis (segmentation)](#layout-analysis),

|

||||

2. [binarization](#binarization),

|

||||

3. [image enhancement](#image-enhancement),

|

||||

4. [text recognition (OCR)](#ocr), and

|

||||

5. [reading order detection](#reading-order-detection).

|

||||

|

||||

Some example outputs can be found in [`examples.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/examples.md).

|

||||

|

||||

### Layout Analysis

|

||||

|

||||

The layout analysis module is responsible for detecting layout elements, identifying text lines, and determining reading

|

||||

order using heuristic methods or a [pretrained model](https://github.com/qurator-spk/eynollah#machine-based-reading-order).

|

||||

|

||||

The command-line interface for layout analysis can be called like this:

|

||||

The basic command-line interface can be called like this:

|

||||

|

||||

```sh

|

||||

eynollah layout \

|

||||

-i <single image file> | -di <directory containing image files> \

|

||||

-o <output directory> \

|

||||

-m <directory containing model files> \

|

||||

[OPTIONS]

|

||||

eynollah \

|

||||

-i <image file name> \

|

||||

-o <directory to write output xml or enhanced image> \

|

||||

-m <directory of models> \

|

||||

-fl <if true, the tool will perform full layout analysis> \

|

||||

-ae <if true, the tool will resize and enhance the image and produce the resulting image as output> \

|

||||

-as <if true, the tool will check whether the document needs rescaling or not> \

|

||||

-cl <if true, the tool will extract the contours of curved textlines instead of rectangle bounding boxes> \

|

||||

-si <if a directory is given here, the tool will output image regions inside documents there>

|

||||

```

|

||||

|

||||

The following options can be used to further configure the processing:

|

||||

The tool accepts both original (RGB) and binarized images, but works better on original images.

|

||||

|

||||

| option | description |

|

||||

|-------------------|:--------------------------------------------------------------------------------------------|

|

||||

| `-fl` | full layout analysis including all steps and segmentation classes (recommended) |

|

||||

| `-tab` | apply table detection |

|

||||

| `-ae` | apply enhancement (the resulting image is saved to the output directory) |

|

||||

| `-as` | apply scaling |

|

||||

| `-cl` | apply contour detection for curved text lines instead of bounding boxes |

|

||||

| `-ib` | apply binarization (the resulting image is saved to the output directory) |

|

||||

| `-ep` | enable plotting (MUST always be used with `-sl`, `-sd`, `-sa`, `-si` or `-ae`) |

|

||||

| `-ho` | ignore headers for reading order detection |

|

||||

| `-si <directory>` | save image regions detected to this directory |

|

||||

| `-sd <directory>` | save deskewed image to this directory |

|

||||

| `-sl <directory>` | save layout prediction as plot to this directory |

|

||||

| `-sp <directory>` | save cropped page image to this directory |

|

||||

| `-sa <directory>` | save all (plot, enhanced/binary image, layout) to this directory |

|

||||

| `-thart` | threshold of artificial class in the case of textline detection. The default value is 0.1 |

|

||||

| `-tharl` | threshold of artificial class in the case of layout detection. The default value is 0.1 |

|

||||

| `-ncu` | upper limit of columns in document image |

|

||||

| `-ncl` | lower limit of columns in document image |

|

||||

| `-slro` | skip layout detection and reading order |

|

||||

| `-romb` | apply machine based reading order detection |

|

||||

| `-ipe` | ignore page extraction |

|

||||

### `--full-layout` vs `--no-full-layout`

|

||||

|

||||

Here are the differences in elements detected depending on the `--full-layout`/`--no-full-layout` command line flags:

|

||||

|

||||

If no further option is set, the tool performs layout detection of main regions (background, text, images, separators

|

||||

and marginals).

|

||||

The best output quality is achieved when RGB images are used as input rather than greyscale or binarized images.

|

||||

| | `--full-layout` | `--no-full-layout` |

|

||||

| --- | --- | --- |

|

||||

| reading order | x | x |

|

||||

| header regions | x | - |

|

||||

| text regions | x | x |

|

||||

| text regions / text line | x | x |

|

||||

| drop-capitals | x | - |

|

||||

| marginals | x | x |

|

||||

| marginals / text line | x | x |

|

||||

| image region | x | x |

|

||||

|

||||

Additional documentation can be found in [`usage.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/usage.md).

|

||||

### How to use

|

||||

|

||||

### Binarization

|

||||

First, this tool makes use of up to 9 trained models which are responsible for different operations like size detection, column classification, image enhancement, page extraction, main layout detection, full layout detection and textline detection. That does not mean that all 9 models are always required for every document. Based on the document characteristics and parameters specified, different scenarios can be applied.

|

||||

|

||||

The binarization module performs document image binarization using pretrained pixelwise segmentation models.

|

||||

* If none of the parameters is set to `true`, the tool will perform a layout detection of main regions (background, text, images, separators and marginals). An advantage of this tool is that it tries to extract main text regions separately as much as possible.

|

||||

|

||||

The command-line interface for binarization can be called like this:

|

||||

* If you set `-ae` (**a**llow image **e**nhancement) parameter to `true`, the tool will first check the ppi (pixel-per-inch) of the image and when it is less than 300, the tool will resize it and only then image enhancement will occur. Image enhancement can also take place without this option, but by setting this option to `true`, the layout xml data (e.g. coordinates) will be based on the resized and enhanced image instead of the original image.

|

||||

|

||||

```sh

|

||||

eynollah binarization \

|

||||

-i <single image file> | -di <directory containing image files> \

|

||||

-o <output directory> \

|

||||

-m <directory containing model files>

|

||||

```

|

||||

* For some documents, while the quality is good, their scale is very large, and the performance of the tool decreases. In such cases you can set `-as` (**a**llow **s**caling) to `true`. With this option enabled, the tool will try to rescale the image and only then the layout detection process will begin.

|

||||

|

||||

### Image Enhancement

|

||||

TODO

|

||||

* If you care about drop capitals (initials) and headings, you can set `-fl` (**f**ull **l**ayout) to `true`. With this setting, the tool can currently distinguish 7 document layout classes/elements.

|

||||

|

||||

### OCR

|

||||

* In cases where the document includes curved headers or curved lines, rectangular bounding boxes for textlines are not a good fit. In such cases it is strongly recommended to set the flag `-cl` (**c**urved **l**ines) to `true` to find contours of curved lines instead of rectangular bounding boxes. Be advised that enabling this option increases the processing time of the tool.

|

||||

|

||||

The OCR module performs text recognition using either a CNN-RNN model or a Transformer model.

|

||||

* To crop and save image regions inside the document, set the parameter `-si` (**s**ave **i**mages) to true and provide a directory path to store the extracted images.

|

||||

|

||||

The command-line interface for OCR can be called like this:

|

||||

|

||||

```sh

|

||||

eynollah ocr \

|

||||

-i <single image file> | -di <directory containing image files> \

|

||||

-dx <directory of xmls> \

|

||||

-o <output directory> \

|

||||

-m <directory containing model files> | --model_name <path to specific model>

|

||||

```

|

||||

|

||||

The following options can be used to further configure the ocr processing:

|

||||

|

||||

| option | description |

|

||||

|-------------------|:-------------------------------------------------------------------------------------------|

|

||||

| `-dib` | directory of binarized images (file type must be '.png'), prediction with both RGB and bin |

|

||||

| `-doit` | directory for output images rendered with the predicted text |

|

||||

| `--model_name` | file path to use specific model for OCR |

|

||||

| `-trocr` | use transformer ocr model (otherwise cnn_rnn model is used) |

|

||||

| `-etit` | export textline images and text in xml to output dir (OCR training data) |

|

||||

| `-nmtc` | cropped textline images will not be masked with textline contour |

|

||||

| `-bs` | ocr inference batch size. Default batch size is 2 for trocr and 8 for cnn_rnn models |

|

||||

| `-ds_pref` | add an abbreviation of dataset name to generated training data |

|

||||

| `-min_conf` | minimum OCR confidence value. OCR with textline conf lower than this will be ignored |

|

||||

|

||||

|

||||

### Reading Order Detection

|

||||

Reading order detection can be performed either as part of layout analysis based on image input, or, currently under

|

||||

development, based on pre-existing layout analysis data in PAGE-XML format as input.

|

||||

|

||||

The reading order detection module employs a pretrained model to identify the reading order from layouts represented in PAGE-XML files.

|

||||

|

||||

The command-line interface for machine based reading order can be called like this:

|

||||

|

||||

```sh

|

||||

eynollah machine-based-reading-order \

|

||||

-i <single image file> | -di <directory containing image files> \

|

||||

-xml <xml file name> | -dx <directory containing xml files> \

|

||||

-m <path to directory containing model files> \

|

||||

-o <output directory>

|

||||

```

|

||||

|

||||

## Use as OCR-D processor

|

||||

|

||||

See [`ocrd.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/ocrd.md).

|

||||

|

||||

## How to cite

|

||||

|

||||

```bibtex

|

||||

@inproceedings{hip23rezanezhad,

|

||||

title = {Document Layout Analysis with Deep Learning and Heuristics},

|

||||

author = {Rezanezhad, Vahid and Baierer, Konstantin and Gerber, Mike and Labusch, Kai and Neudecker, Clemens},

|

||||

booktitle = {Proceedings of the 7th International Workshop on Historical Document Imaging and Processing {HIP} 2023,

|

||||

San José, CA, USA, August 25-26, 2023},

|

||||

publisher = {Association for Computing Machinery},

|

||||

address = {New York, NY, USA},

|

||||

year = {2023},

|

||||

pages = {73--78},

|

||||

url = {https://doi.org/10.1145/3604951.3605513}

|

||||

}

|

||||

```

|

||||

* This tool is actively being developed. If problems occur, or the performance does not meet your expectations, we welcome your feedback via [issues](https://github.com/qurator-spk/eynollah/issues).

|

||||

|

|

|

|||

|

|

@ -1,43 +0,0 @@

|

|||

## Inference with Docker

|

||||

|

||||

docker pull ghcr.io/qurator-spk/eynollah:latest

|

||||

|

||||

### 1. ocrd resource manager

|

||||

(just once, to get the models and install them into a named volume for later re-use)

|

||||

|

||||

vol_models=ocrd-resources:/usr/local/share/ocrd-resources

|

||||

docker run --rm -v $vol_models ocrd/eynollah ocrd resmgr download ocrd-eynollah-segment default

|

||||

|

||||

Now, each time you want to use Eynollah, pass the same resources volume again.

|

||||

Also, bind-mount some data directory, e.g. current working directory $PWD (/data is default working directory in the container).

|

||||

|

||||

Either use standalone CLI (2) or OCR-D CLI (3):

|

||||

|

||||

### 2. standalone CLI

|

||||

(follow self-help, cf. readme)

|

||||

|

||||

docker run --rm -v $vol_models -v $PWD:/data ocrd/eynollah eynollah binarization --help

|

||||

docker run --rm -v $vol_models -v $PWD:/data ocrd/eynollah eynollah layout --help

|

||||

docker run --rm -v $vol_models -v $PWD:/data ocrd/eynollah eynollah ocr --help

|

||||

|

||||

### 3. OCR-D CLI

|

||||

(follow self-help, cf. readme and https://ocr-d.de/en/spec/cli)

|

||||

|

||||

docker run --rm -v $vol_models -v $PWD:/data ocrd/eynollah ocrd-eynollah-segment -h

|

||||

docker run --rm -v $vol_models -v $PWD:/data ocrd/eynollah ocrd-sbb-binarize -h

|

||||

|

||||

Alternatively, just "log in" to the container once and use the commands there:

|

||||

|

||||

docker run --rm -v $vol_models -v $PWD:/data -it ocrd/eynollah bash

|

||||

|

||||

## Training with Docker

|

||||

|

||||

Build the Docker training image

|

||||

|

||||

cd train

|

||||

docker build -t model-training .

|

||||

|

||||

Run the Docker training image

|

||||

|

||||

cd train

|

||||

docker run --gpus all -v $PWD:/entry_point_dir model-training

|

||||

|

|

@ -1,18 +0,0 @@

|

|||

# Examples

|

||||

|

||||

Example outputs of various Eynollah models

|

||||

|

||||

# Binarisation

|

||||

|

||||

<img src="https://user-images.githubusercontent.com/952378/63592437-e433e400-c5b1-11e9-9c2d-889c6e93d748.jpg" width="45%"><img src="https://user-images.githubusercontent.com/952378/63592435-e433e400-c5b1-11e9-88e4-3e441b61fa67.jpg" width="45%">

|

||||

<img src="https://user-images.githubusercontent.com/952378/63592440-e4cc7a80-c5b1-11e9-8964-2cd1b22c87be.jpg" width="45%"><img src="https://user-images.githubusercontent.com/952378/63592438-e4cc7a80-c5b1-11e9-86dc-a9e9f8555422.jpg" width="45%">

|

||||

|

||||

# Reading Order Detection

|

||||

|

||||

<img src="https://github.com/user-attachments/assets/42df2582-4579-415e-92f1-54858a02c830" alt="Input Image" width="45%">

|

||||

<img src="https://github.com/user-attachments/assets/77fc819e-6302-4fc9-967c-ee11d10d863e" alt="Output Image" width="45%">

|

||||

|

||||

# OCR

|

||||

|

||||

<img src="https://github.com/user-attachments/assets/71054636-51c6-4117-b3cf-361c5cda3528" alt="Input Image" width="45%"><img src="https://github.com/user-attachments/assets/cfb3ce38-007a-4037-b547-21324a7d56dd" alt="Output Image" width="45%">

|

||||

<img src="https://github.com/user-attachments/assets/343b2ed8-d818-4d4a-b301-f304cbbebfcd" alt="Input Image" width="45%"><img src="https://github.com/user-attachments/assets/accb5ba7-e37f-477e-84aa-92eafa0d136e" alt="Output Image" width="45%">

|

||||

226

docs/models.md


|

|

@ -1,226 +0,0 @@

|

|||

# Models documentation

|

||||

|

||||

This suite of 15 models presents a document layout analysis (DLA) system for historical documents implemented by

|

||||

pixel-wise segmentation using a combination of a ResNet50 encoder with various U-Net decoders. In addition, heuristic

|

||||

methods are applied to detect marginals and to determine the reading order of text regions.

|

||||

|

||||

The detection and classification of multiple classes of layout elements such as headings, images, tables etc. as part of

|

||||

DLA is required in order to extract and process them in subsequent steps. Altogether, the combination of image

|

||||

detection, classification and segmentation on the wide variety that can be found in over 400 years of printed cultural

|

||||

heritage makes this a very challenging task. Deep learning models are complemented with heuristics for the detection of

|

||||

text lines, marginals, and reading order. Furthermore, an optional image enhancement step was added in case of documents

|

||||

that either have insufficient pixel density and/or require scaling. Also, a column classifier for the analysis of

|

||||

multi-column documents was added. With these additions, DLA performance was improved, and a high accuracy in the

|

||||

prediction of the reading order is accomplished.

|

||||

|

||||

Two Arabic/Persian terms form the name of the model suite: عين الله, which can be transcribed as "ain'allah" or

|

||||

"eynollah"; it translates into English as "God's Eye" -- it sees (nearly) everything on the document image.

|

||||

|

||||

See the flowchart below for the different stages and how they interact:

|

||||

|

||||

<img width="810" height="691" alt="eynollah_flowchart" src="https://github.com/user-attachments/assets/42dd55bc-7b85-4b46-9afe-15ff712607f0" />

|

||||

|

||||

|

||||

|

||||

## Models

|

||||

|

||||

### Image enhancement

|

||||

|

||||

Model card: [Image Enhancement](https://huggingface.co/SBB/eynollah-enhancement)

|

||||

|

||||

This model addresses image resolution, specifically targeting documents with suboptimal resolution. In instances where

|

||||

the detection of document layout exhibits inadequate performance, the proposed enhancement aims to significantly improve

|

||||

the quality and clarity of the images, thus facilitating enhanced visual interpretation and analysis.

|

||||

|

||||

### Page extraction / border detection

|

||||

|

||||

Model card: [Page Extraction/Border Detection](https://huggingface.co/SBB/eynollah-page-extraction)

|

||||

|

||||

Black margins around a page, caused by document scanning, can negatively affect OCR. A deep learning

|

||||

model helps to crop to the page borders by using a pixel-wise segmentation method.

|

||||

|

||||

### Column classification

|

||||

|

||||

Model card: [Column Classification](https://huggingface.co/SBB/eynollah-column-classifier)

|

||||

|

||||

This model is a trained classifier that recognizes the number of columns in a document by use of a training set with

|

||||

manual classification of all documents into six classes with either one, two, three, four, five, or six and more columns

|

||||

respectively.
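
How a six-class output could be turned into a column count is sketched below. The ordering of the classes (index 0 for one column up to index 5 for six and more) and the names `COLUMN_LABELS` and `predicted_columns` are assumptions made for illustration; the model card should be consulted for the actual class mapping.

```python
# Hypothetical class order: index i stands for i + 1 columns,
# with the last class covering six and more columns.
COLUMN_LABELS = ["1", "2", "3", "4", "5", "6 or more"]


def predicted_columns(scores):
    """Return the label of the highest-scoring class.

    scores is a sequence with one classifier score per class.
    """
    if len(scores) != len(COLUMN_LABELS):
        raise ValueError("expected one score per column class")
    best = max(range(len(scores)), key=scores.__getitem__)
    return COLUMN_LABELS[best]


two_columns = predicted_columns([0.10, 0.70, 0.05, 0.05, 0.05, 0.05])
many_columns = predicted_columns([0.0, 0.0, 0.1, 0.1, 0.2, 0.6])
```

Folding everything from six columns upward into one class keeps the label set small and closed, at the cost of not distinguishing between very dense layouts.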

|

||||

|

||||

### Binarization

|

||||

|

||||

Model card: [Binarization](https://huggingface.co/SBB/eynollah-binarization)

|

||||

|

||||

This model is designed to tackle the intricate task of document image binarization, which involves segmentation of the

|

||||

image into white and black pixels. This process significantly contributes to the overall performance of the layout

|

||||