mirror of

https://github.com/qurator-spk/eynollah.git

synced 2025-10-26 15:24:12 +01:00

Compare commits

179 commits

| Author | SHA1 | Date | |

|---|---|---|---|

|

|

38c028c6b5 | ||

|

|

ca8edb35e3 | ||

|

|

50e8b2c266 | ||

|

|

46d25647f7 | ||

|

|

2ac01ecacc | ||

|

|

2e0fb64dcb | ||

|

|

76c13bcfd7 | ||

|

|

af5abb77fd | ||

|

|

d2f0a43088 | ||

|

|

3bd3faef68 | ||

|

|

1e66c85222 | ||

|

|

bd8c8bfeac | ||

|

|

948c8c3441 | ||

|

|

f485dd4181 | ||

|

|

c1f0158806 | ||

|

|

7daa0a1bd5 | ||

|

|

2febf53479 | ||

|

|

8299e7009a | ||

|

|

e8b7212f36 | ||

|

|

745cf3be48 | ||

|

|

8a9b4f8f55 | ||

|

|

f60e0543ab | ||

|

|

1c043c586a | ||

|

|

690d47444c | ||

|

|

2baf42e878 | ||

|

|

4f5cdf3140 | ||

|

|

f0ef2b5db2 | ||

|

|

95bb5908bb | ||

|

|

48266b1ee0 | ||

|

|

733af1e9a7 | ||

|

|

4514d417a7 | ||

|

|

e027bc038e | ||

|

|

91d2a74ac9 | ||

|

|

f2f93e0251 | ||

|

|

70af00182b | ||

|

|

1d0616eb69 | ||

|

|

9ce127eb51 | ||

|

|

558867eb24 | ||

|

|

070dafca75 | ||

|

|

53c1ca11fc | ||

|

|

9d8b858dfc | ||

|

|

2bcd20ebc7 | ||

|

|

ce02a3553b | ||

|

|

6d379782ab | ||

|

|

52a7c93319 | ||

|

|

ea05461dfe | ||

|

|

56c4b7af88 | ||

|

|

3b9548d0bd | ||

|

|

a65405bead | ||

|

|

530897c6c2 | ||

|

|

68a71be8bc | ||

|

|

cf4983da54 | ||

|

|

263da755ef | ||

|

|

6462ea5b33 | ||

|

|

1b222594d6 | ||

|

|

f5a1d1a255 | ||

|

|

0e7de52f5e | ||

|

|

eb91000490 | ||

|

|

25e3a2a99f | ||

|

|

f9390c71e7 | ||

|

|

25abc0fabc | ||

|

|

4a7728bb34 | ||

|

|

4ddc84dee8 | ||

|

|

3a9fc0efde | ||

|

|

6fa766d6a5 | ||

|

|

92954b1b7b | ||

|

|

5694d971c5 | ||

|

|

3b123b039c | ||

|

|

44d02687c6 | ||

|

|

4635dd219d | ||

|

|

dd21a3b33a | ||

|

|

825b2634f9 | ||

|

|

363c343b37 | ||

|

|

90a1b186f7 | ||

|

|

e9b860b275 | ||

|

|

238ea3bd8e | ||

|

|

7b4d14b19f | ||

|

|

fd14e656aa | ||

|

|

f09eed1197 | ||

|

|

a524f8b1a7 | ||

|

|

3f354e1c34 | ||

|

|

e3da494470 | ||

|

|

a57a31673d | ||

|

|

5bbd0980b2 | ||

|

|

61cdd2acb8 | ||

|

|

aeb2ee4e3e | ||

|

|

445c45cb87 | ||

|

|

5e1821a741 | ||

|

|

bf5837bf6e | ||

|

|

3b90347a94 | ||

|

|

2d83b8faad | ||

|

|

6fb28d6ce8 | ||

|

|

381976099f | ||

|

|

2c822dae4e | ||

|

|

840d7c2283 | ||

|

|

861f0b1ebd | ||

|

|

453d0fbf92 | ||

|

|

3bceec9c19 | ||

|

|

9260d2962a | ||

|

|

fe69b9c4a8 | ||

|

|

b3cd01de37 | ||

|

|

66022cf771 | ||

|

|

22d7359db2 | ||

|

|

95faf1a4c8 | ||

|

|

29da23da76 | ||

|

|

1921e6754f | ||

|

|

cc91e4b12c | ||

|

|

4c376289e9 | ||

|

|

0e4dd0b9ef | ||

|

|

5a5914e06c | ||

|

|

742e3c2aa2 | ||

|

|

13ebe71d13 | ||

|

|

3ef0dbdd42 | ||

|

|

47a1646451 | ||

|

|

09789619a8 | ||

|

|

06ed006193 | ||

|

|

4fb45a6711 | ||

|

|

cc7577d2c1 | ||

|

|

467bbb2884 | ||

|

|

ccf520d3c7 | ||

|

|

9638098ae7 | ||

|

|

d346b317fb | ||

|

|

61487bf782 | ||

|

|

a83d53c27d | ||

|

|

348d323c7c | ||

|

|

47c6bf6b97 | ||

|

|

f1c2913c03 | ||

|

|

b2085a1d01 | ||

|

|

faeac997e1 | ||

|

|

d6a057ba70 | ||

|

|

d277ec4b31 | ||

|

|

241cb907cb | ||

|

|

bc2ca71802 | ||

|

|

e1f62c2e98 | ||

|

|

c989f7ac61 | ||

|

|

ca63c097c3 | ||

|

|

6e06742e66 | ||

|

|

666a62622e | ||

|

|

39aa88669b | ||

|

|

d0b0395059 | ||

|

|

4565229497 | ||

|

|

ced1f851e2 | ||

|

|

57dae564b3 | ||

|

|

5282caa328 | ||

|

|

083f5ae881 | ||

|

|

bcc900be17 | ||

|

|

09c0d5e318 | ||

|

|

49853bb291 | ||

|

|

b1c8bdf106 | ||

|

|

310a709ac7 | ||

|

|

76c75d1365 | ||

|

|

491cdbf934 | ||

|

|

15407393e2 | ||

|

|

2d9ba85467 | ||

|

|

2e2b6eeafd | ||

|

|

8884b90f05 | ||

|

|

070c2e0462 | ||

|

|

b54285b196 | ||

|

|

4e216475dc | ||

|

|

325864eef1 | ||

|

|

66d7138343 | ||

|

|

ad1360b179 | ||

|

|

c07d16d843 | ||

|

|

df536d62c0 | ||

|

|

b5f9b9c54a | ||

|

|

943628e0b2 | ||

|

|

4229ad92d7 | ||

|

|

8084e136ba | ||

| 979b824aa8 | |||

|

|

350378af16 | ||

|

|

ac54266581 | ||

|

|

cf18aa7fbb | ||

|

|

7eb3dd26ad | ||

|

|

99a02a1bf5 | ||

|

|

e8afb370ba | ||

|

|

1882dd8f53 | ||

|

|

226330535d | ||

|

|

4601237427 | ||

|

|

95635d5b9c |

34 changed files with 6272 additions and 183 deletions

1

.gitignore

vendored

1

.gitignore

vendored

|

|

@ -9,4 +9,5 @@ output.html

|

|||

/build

|

||||

/dist

|

||||

*.tif

|

||||

*.sw?

|

||||

TAGS

|

||||

|

|

|

|||

24

CHANGELOG.md

24

CHANGELOG.md

|

|

@ -5,6 +5,23 @@ Versioned according to [Semantic Versioning](http://semver.org/).

|

|||

|

||||

## Unreleased

|

||||

|

||||

## [0.6.0] - 2025-10-17

|

||||

|

||||

Added:

|

||||

|

||||

* `eynollah-training` CLI and docs for training the models, #187, #193, https://github.com/qurator-spk/sbb_pixelwise_segmentation/tree/unifying-training-models

|

||||

|

||||

Fixed:

|

||||

|

||||

* `join_polygons` always returning Polygon, not MultiPolygon, #203

|

||||

|

||||

## [0.6.0rc2] - 2025-10-14

|

||||

|

||||

Fixed:

|

||||

|

||||

* Prevent OOM GPU error by avoiding loading the `region_fl` model, #199

|

||||

* XML output: encoding should be `utf-8`, not `utf8`, #196, #197

|

||||

|

||||

## [0.6.0rc1] - 2025-10-10

|

||||

|

||||

Fixed:

|

||||

|

|

@ -21,8 +38,7 @@ Fixed:

|

|||

* Dockerfile: fix CUDA installation (cuDNN contested between Torch and TF due to extra OCR)

|

||||

* OCR: re-instate missing methods and fix `utils_ocr` function calls

|

||||

* mbreorder/enhancement CLIs: missing imports

|

||||

* :fire: writer: `SeparatorRegion` needs `SeparatorRegionType` (not `ImageRegionType`)

|

||||

f458e3e

|

||||

* :fire: writer: `SeparatorRegion` needs `SeparatorRegionType` (not `ImageRegionType`), f458e3e

|

||||

* tests: switch from `pytest-subtests` to `parametrize` so we can use `pytest-isolate`

|

||||

(so CUDA memory gets freed between tests if running on GPU)

|

||||

|

||||

|

|

@ -118,7 +134,7 @@ Merged PRs:

|

|||

Fixed:

|

||||

|

||||

* allow empty imports for optional dependencies

|

||||

* avoid Numpy warnings (empty slices etc)

|

||||

* avoid Numpy warnings (empty slices etc.)

|

||||

* remove deprecated Numpy types

|

||||

* binarization CLI: make `dir_in` usable again

|

||||

|

||||

|

|

@ -291,6 +307,8 @@ Fixed:

|

|||

Initial release

|

||||

|

||||

<!-- link-labels -->

|

||||

[0.6.0]: ../../compare/v0.6.0...v0.6.0rc2

|

||||

[0.6.0rc2]: ../../compare/v0.6.0rc2...v0.6.0rc1

|

||||

[0.6.0rc1]: ../../compare/v0.6.0rc1...v0.5.0

|

||||

[0.5.0]: ../../compare/v0.5.0...v0.4.0

|

||||

[0.4.0]: ../../compare/v0.4.0...v0.3.1

|

||||

|

|

|

|||

55

README.md

55

README.md

|

|

@ -11,23 +11,24 @@

|

|||

|

||||

|

||||

## Features

|

||||

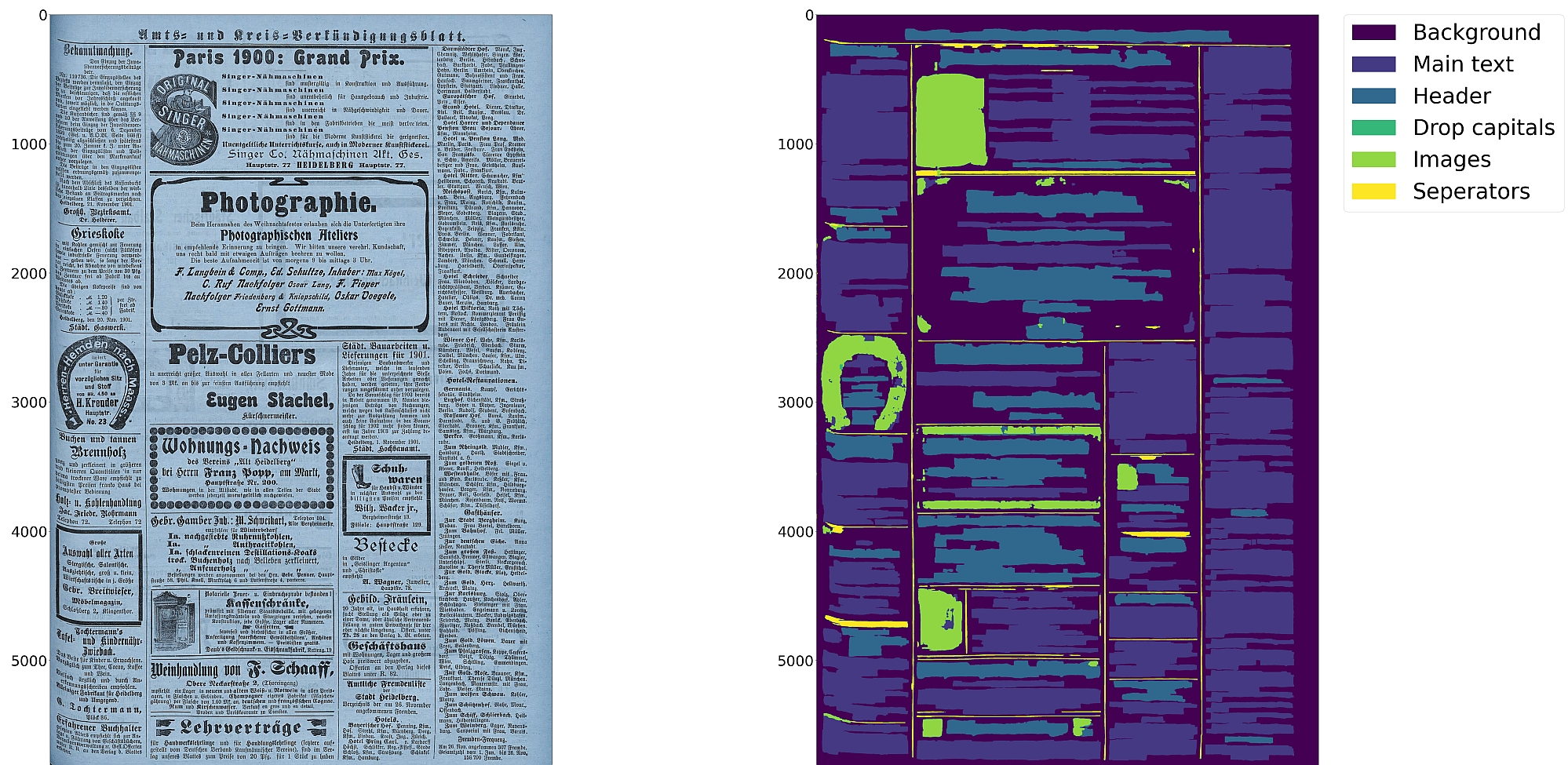

* Support for up to 10 segmentation classes:

|

||||

* Support for 10 distinct segmentation classes:

|

||||

* background, [page border](https://ocr-d.de/en/gt-guidelines/trans/lyRand.html), [text region](https://ocr-d.de/en/gt-guidelines/trans/lytextregion.html#textregionen__textregion_), [text line](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_TextLineType.html), [header](https://ocr-d.de/en/gt-guidelines/trans/lyUeberschrift.html), [image](https://ocr-d.de/en/gt-guidelines/trans/lyBildbereiche.html), [separator](https://ocr-d.de/en/gt-guidelines/trans/lySeparatoren.html), [marginalia](https://ocr-d.de/en/gt-guidelines/trans/lyMarginalie.html), [initial](https://ocr-d.de/en/gt-guidelines/trans/lyInitiale.html), [table](https://ocr-d.de/en/gt-guidelines/trans/lyTabellen.html)

|

||||

* Support for various image optimization operations:

|

||||

* cropping (border detection), binarization, deskewing, dewarping, scaling, enhancing, resizing

|

||||

* Text line segmentation to bounding boxes or polygons (contours) including for curved lines and vertical text

|

||||

* Detection of reading order (left-to-right or right-to-left)

|

||||

* Textline segmentation to bounding boxes or polygons (contours) including for curved lines and vertical text

|

||||

* Text recognition (OCR) using either CNN-RNN or Transformer models

|

||||

* Detection of reading order (left-to-right or right-to-left) using either heuristics or trainable models

|

||||

* Output in [PAGE-XML](https://github.com/PRImA-Research-Lab/PAGE-XML)

|

||||

* [OCR-D](https://github.com/qurator-spk/eynollah#use-as-ocr-d-processor) interface

|

||||

|

||||

:warning: Development is currently focused on achieving the best possible quality of results for a wide variety of

|

||||

historical documents and therefore processing can be very slow. We aim to improve this, but contributions are welcome.

|

||||

:warning: Development is focused on achieving the best quality of results for a wide variety of historical

|

||||

documents and therefore processing can be very slow. We aim to improve this, but contributions are welcome.

|

||||

|

||||

## Installation

|

||||

|

||||

Python `3.8-3.11` with Tensorflow `<2.13` on Linux are currently supported.

|

||||

|

||||

For (limited) GPU support the CUDA toolkit needs to be installed.

|

||||

For (limited) GPU support the CUDA toolkit needs to be installed. A known working config is CUDA `11` with cuDNN `8.6`.

|

||||

|

||||

You can either install from PyPI

|

||||

|

||||

|

|

@ -53,26 +54,30 @@ make install EXTRAS=OCR

|

|||

```

|

||||

|

||||

## Models

|

||||

|

||||

Pretrained models can be downloaded from [zenodo](https://zenodo.org/records/17194824) or [huggingface](https://huggingface.co/SBB?search_models=eynollah).

|

||||

|

||||

For documentation on methods and models, have a look at [`models.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/models.md).

|

||||

For documentation on models, have a look at [`models.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/models.md).

|

||||

Model cards are also provided for our trained models.

|

||||

|

||||

## Train

|

||||

## Training

|

||||

|

||||

In case you want to train your own model with Eynollah, have a look at [`train.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/train.md).

|

||||

In case you want to train your own model with Eynollah, see the

|

||||

documentation in [`train.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/train.md) and use the

|

||||

tools in the [`train` folder](https://github.com/qurator-spk/eynollah/tree/main/train).

|

||||

|

||||

## Usage

|

||||

|

||||

Eynollah supports five use cases: layout analysis (segmentation), binarization,

|

||||

image enhancement, text recognition (OCR), and (trainable) reading order detection.

|

||||

image enhancement, text recognition (OCR), and reading order detection.

|

||||

|

||||

### Layout Analysis

|

||||

|

||||

The layout analysis module is responsible for detecting layouts, identifying text lines, and determining reading order

|

||||

using both heuristic methods or a machine-based reading order detection model.

|

||||

The layout analysis module is responsible for detecting layout elements, identifying text lines, and determining reading

|

||||

order using either heuristic methods or a [pretrained reading order detection model](https://github.com/qurator-spk/eynollah#machine-based-reading-order).

|

||||

|

||||

Note that there are currently two supported ways for reading order detection: either as part of layout analysis based

|

||||

on image input, or, currently under development, for given layout analysis results based on PAGE-XML data as input.

|

||||

Reading order detection can be performed either as part of layout analysis based on image input, or, currently under

|

||||

development, based on pre-existing layout analysis results in PAGE-XML format as input.

|

||||

|

||||

The command-line interface for layout analysis can be called like this:

|

||||

|

||||

|

|

@ -105,15 +110,15 @@ The following options can be used to further configure the processing:

|

|||

| `-sp <directory>` | save cropped page image to this directory |

|

||||

| `-sa <directory>` | save all (plot, enhanced/binary image, layout) to this directory |

|

||||

|

||||

If no option is set, the tool performs layout detection of main regions (background, text, images, separators

|

||||

If no further option is set, the tool performs layout detection of main regions (background, text, images, separators

|

||||

and marginals).

|

||||

The best output quality is produced when RGB images are used as input rather than greyscale or binarized images.

|

||||

The best output quality is achieved when RGB images are used as input rather than greyscale or binarized images.

|

||||

|

||||

### Binarization

|

||||

|

||||

The binarization module performs document image binarization using pretrained pixelwise segmentation models.

|

||||

|

||||

The command-line interface for binarization of single image can be called like this:

|

||||

The command-line interface for binarization can be called like this:

|

||||

|

||||

```sh

|

||||

eynollah binarization \

|

||||

|

|

@ -124,16 +129,16 @@ eynollah binarization \

|

|||

|

||||

### OCR

|

||||

|

||||

The OCR module performs text recognition from images using two main families of pretrained models: CNN-RNN–based OCR and Transformer-based OCR.

|

||||

The OCR module performs text recognition using either a CNN-RNN model or a Transformer model.

|

||||

|

||||

The command-line interface for ocr can be called like this:

|

||||

The command-line interface for OCR can be called like this:

|

||||

|

||||

```sh

|

||||

eynollah ocr \

|

||||

-i <single image file> | -di <directory containing image files> \

|

||||

-dx <directory of xmls> \

|

||||

-o <output directory> \

|

||||

-m <path to directory containing model files> | --model_name <path to specific model> \

|

||||

-m <directory containing model files> | --model_name <path to specific model> \

|

||||

```

|

||||

|

||||

### Machine-based-reading-order

|

||||

|

|

@ -169,22 +174,20 @@ If the input file group is PAGE-XML (from a previous OCR-D workflow step), Eynol

|

|||

(because some other preprocessing step was in effect like `denoised`), then

|

||||

the output PAGE-XML will be based on that as new top-level (`@imageFilename`)

|

||||

|

||||

ocrd-eynollah-segment -I OCR-D-XYZ -O OCR-D-SEG -P models eynollah_layout_v0_5_0

|

||||

ocrd-eynollah-segment -I OCR-D-XYZ -O OCR-D-SEG -P models eynollah_layout_v0_5_0

|

||||

|

||||

Still, in general, it makes more sense to add other workflow steps **after** Eynollah.

|

||||

In general, it makes more sense to add other workflow steps **after** Eynollah.

|

||||

|

||||

There is also an OCR-D processor for the binarization:

|

||||

There is also an OCR-D processor for binarization:

|

||||

|

||||

ocrd-sbb-binarize -I OCR-D-IMG -O OCR-D-BIN -P models default-2021-03-09

|

||||

|

||||

#### Additional documentation

|

||||

|

||||

Please check the [wiki](https://github.com/qurator-spk/eynollah/wiki).

|

||||

Additional documentation is available in the [docs](https://github.com/qurator-spk/eynollah/tree/main/docs) directory.

|

||||

|

||||

## How to cite

|

||||

|

||||

If you find this tool useful in your work, please consider citing our paper:

|

||||

|

||||

```bibtex

|

||||

@inproceedings{hip23rezanezhad,

|

||||

title = {Document Layout Analysis with Deep Learning and Heuristics},

|

||||

|

|

|

|||

308

docs/train.md

308

docs/train.md

|

|

@ -1,24 +1,34 @@

|

|||

# Training documentation

|

||||

|

||||

This aims to assist users in preparing training datasets, training models, and performing inference with trained models.

|

||||

We cover various use cases including pixel-wise segmentation, image classification, image enhancement, and machine-based

|

||||

reading order detection. For each use case, we provide guidance on how to generate the corresponding training dataset.

|

||||

This document aims to assist users in preparing training datasets, training models, and

|

||||

performing inference with trained models. We cover various use cases including

|

||||

pixel-wise segmentation, image classification, image enhancement, and

|

||||

machine-based reading order detection. For each use case, we provide guidance

|

||||

on how to generate the corresponding training dataset.

|

||||

|

||||

The following three tasks can all be accomplished using the code in the

|

||||

[`train`](https://github.com/qurator-spk/sbb_pixelwise_segmentation/tree/unifying-training-models) directory:

|

||||

The following three tasks can all be accomplished using the code in the

|

||||

[`train`](https://github.com/qurator-spk/eynollah/tree/main/train) directory:

|

||||

|

||||

* generate training dataset

|

||||

* train a model

|

||||

* inference with the trained model

|

||||

|

||||

## Training, evaluation and output

|

||||

|

||||

The train and evaluation folders should contain subfolders of `images` and `labels`.

|

||||

|

||||

The output folder should be an empty folder where the output model will be written to.

|

||||

|

||||

## Generate training dataset

|

||||

|

||||

The script `generate_gt_for_training.py` is used for generating training datasets. As the results of the following

|

||||

command demonstrates, the dataset generator provides three different commands:

|

||||

The script `generate_gt_for_training.py` is used for generating training datasets. As the results of the following

|

||||

command demonstrates, the dataset generator provides several subcommands:

|

||||

|

||||

`python generate_gt_for_training.py --help`

|

||||

```sh

|

||||

eynollah-training generate-gt --help

|

||||

```

|

||||

|

||||

These three commands are:

|

||||

The three most important subcommands are:

|

||||

|

||||

* image-enhancement

|

||||

* machine-based-reading-order

|

||||

|

|

@ -26,20 +36,20 @@ These three commands are:

|

|||

|

||||

### image-enhancement

|

||||

|

||||

Generating a training dataset for image enhancement is quite straightforward. All that is needed is a set of

|

||||

Generating a training dataset for image enhancement is quite straightforward. All that is needed is a set of

|

||||

high-resolution images. The training dataset can then be generated using the following command:

|

||||

|

||||

```sh

|

||||

python generate_gt_for_training.py image-enhancement \

|

||||

eynollah-training image-enhancement \

|

||||

-dis "dir of high resolution images" \

|

||||

-dois "dir where degraded images will be written" \

|

||||

-dols "dir where the corresponding high resolution image will be written as label" \

|

||||

-scs "degrading scales json file"

|

||||

```

|

||||

|

||||

The scales JSON file is a dictionary with a key named `scales` and values representing scales smaller than 1. Images are

|

||||

downscaled based on these scales and then upscaled again to their original size. This process causes the images to lose

|

||||

resolution at different scales. The degraded images are used as input images, and the original high-resolution images

|

||||

The scales JSON file is a dictionary with a key named `scales` and values representing scales smaller than 1. Images are

|

||||

downscaled based on these scales and then upscaled again to their original size. This process causes the images to lose

|

||||

resolution at different scales. The degraded images are used as input images, and the original high-resolution images

|

||||

serve as labels. The enhancement model can be trained with this generated dataset. The scales JSON file looks like this:

|

||||

|

||||

```yaml

|

||||

|

|

@ -50,21 +60,21 @@ serve as labels. The enhancement model can be trained with this generated datase

|

|||

|

||||

### machine-based-reading-order

|

||||

|

||||

For machine-based reading order, we aim to determine the reading priority between two sets of text regions. The model's

|

||||

input is a three-channel image: the first and last channels contain information about each of the two text regions,

|

||||

while the middle channel encodes prominent layout elements necessary for reading order, such as separators and headers.

|

||||

To generate the training dataset, our script requires a page XML file that specifies the image layout with the correct

|

||||

For machine-based reading order, we aim to determine the reading priority between two sets of text regions. The model's

|

||||

input is a three-channel image: the first and last channels contain information about each of the two text regions,

|

||||

while the middle channel encodes prominent layout elements necessary for reading order, such as separators and headers.

|

||||

To generate the training dataset, our script requires a page XML file that specifies the image layout with the correct

|

||||

reading order.

|

||||

|

||||

For output images, it is necessary to specify the width and height. Additionally, a minimum text region size can be set

|

||||

to filter out regions smaller than this minimum size. This minimum size is defined as the ratio of the text region area

|

||||

For output images, it is necessary to specify the width and height. Additionally, a minimum text region size can be set

|

||||

to filter out regions smaller than this minimum size. This minimum size is defined as the ratio of the text region area

|

||||

to the image area, with a default value of zero. To run the dataset generator, use the following command:

|

||||

|

||||

```shell

|

||||

python generate_gt_for_training.py machine-based-reading-order \

|

||||

eynollah-training generate-gt machine-based-reading-order \

|

||||

-dx "dir of GT xml files" \

|

||||

-domi "dir where output images will be written" \

|

||||

-docl "dir where the labels will be written" \

|

||||

"" -docl "dir where the labels will be written" \

|

||||

-ih "height" \

|

||||

-iw "width" \

|

||||

-min "min area ratio"

|

||||

|

|

@ -74,15 +84,15 @@ python generate_gt_for_training.py machine-based-reading-order \

|

|||

|

||||

pagexml2label is designed to generate labels from GT page XML files for various pixel-wise segmentation use cases,

|

||||

including 'layout,' 'textline,' 'printspace,' 'glyph,' and 'word' segmentation.

|

||||

To train a pixel-wise segmentation model, we require images along with their corresponding labels. Our training script

|

||||

expects a PNG image where each pixel corresponds to a label, represented by an integer. The background is always labeled

|

||||

as zero, while other elements are assigned different integers. For instance, if we have ground truth data with four

|

||||

To train a pixel-wise segmentation model, we require images along with their corresponding labels. Our training script

|

||||

expects a PNG image where each pixel corresponds to a label, represented by an integer. The background is always labeled

|

||||

as zero, while other elements are assigned different integers. For instance, if we have ground truth data with four

|

||||

elements including the background, the classes would be labeled as 0, 1, 2, and 3 respectively.

|

||||

|

||||

In binary segmentation scenarios such as textline or page extraction, the background is encoded as 0, and the desired

|

||||

In binary segmentation scenarios such as textline or page extraction, the background is encoded as 0, and the desired

|

||||

element is automatically encoded as 1 in the PNG label.

|

||||

|

||||

To specify the desired use case and the elements to be extracted in the PNG labels, a custom JSON file can be passed.

|

||||

To specify the desired use case and the elements to be extracted in the PNG labels, a custom JSON file can be passed.

|

||||

For example, in the case of 'textline' detection, the JSON file would resemble this:

|

||||

|

||||

```yaml

|

||||

|

|

@ -116,35 +126,35 @@ A possible custom config json file for layout segmentation where the "printspace

|

|||

}

|

||||

```

|

||||

|

||||

For the layout use case, it is beneficial to first understand the structure of the page XML file and its elements.

|

||||

In a given image, the annotations of elements are recorded in a page XML file, including their contours and classes.

|

||||

For an image document, the known regions are 'textregion', 'separatorregion', 'imageregion', 'graphicregion',

|

||||

For the layout use case, it is beneficial to first understand the structure of the page XML file and its elements.

|

||||

In a given image, the annotations of elements are recorded in a page XML file, including their contours and classes.

|

||||

For an image document, the known regions are 'textregion', 'separatorregion', 'imageregion', 'graphicregion',

|

||||

'noiseregion', and 'tableregion'.

|

||||

|

||||

Text regions and graphic regions also have their own specific types. The known types for text regions are 'paragraph',

|

||||

'header', 'heading', 'marginalia', 'drop-capital', 'footnote', 'footnote-continued', 'signature-mark', 'page-number',

|

||||

and 'catch-word'. The known types for graphic regions are 'handwritten-annotation', 'decoration', 'stamp', and

|

||||

Text regions and graphic regions also have their own specific types. The known types for text regions are 'paragraph',

|

||||

'header', 'heading', 'marginalia', 'drop-capital', 'footnote', 'footnote-continued', 'signature-mark', 'page-number',

|

||||

and 'catch-word'. The known types for graphic regions are 'handwritten-annotation', 'decoration', 'stamp', and

|

||||

'signature'.

|

||||

Since we don't know all types of text and graphic regions, unknown cases can arise. To handle these, we have defined

|

||||

two additional types, "rest_as_paragraph" and "rest_as_decoration", to ensure that no unknown types are missed.

|

||||

Since we don't know all types of text and graphic regions, unknown cases can arise. To handle these, we have defined

|

||||

two additional types, "rest_as_paragraph" and "rest_as_decoration", to ensure that no unknown types are missed.

|

||||

This way, users can extract all known types from the labels and be confident that no unknown types are overlooked.

|

||||

|

||||

In the custom JSON file shown above, "header" and "heading" are extracted as the same class, while "marginalia" is shown

|

||||

as a different class. All other text region types, including "drop-capital," are grouped into the same class. For the

|

||||

graphic region, "stamp" has its own class, while all other types are classified together. "Image region" and "separator

|

||||

region" are also present in the label. However, other regions like "noise region" and "table region" will not be

|

||||

In the custom JSON file shown above, "header" and "heading" are extracted as the same class, while "marginalia" is shown

|

||||

as a different class. All other text region types, including "drop-capital," are grouped into the same class. For the

|

||||

graphic region, "stamp" has its own class, while all other types are classified together. "Image region" and "separator

|

||||

region" are also present in the label. However, other regions like "noise region" and "table region" will not be

|

||||

included in the label PNG file, even if they have information in the page XML files, as we chose not to include them.

|

||||

|

||||

```sh

|

||||

python generate_gt_for_training.py pagexml2label \

|

||||

eynollah-training generate-gt pagexml2label \

|

||||

-dx "dir of GT xml files" \

|

||||

-do "dir where output label png files will be written" \

|

||||

-cfg "custom config json file" \

|

||||

-to "output type which has 2d and 3d. 2d is used for training and 3d is just to visualise the labels"

|

||||

```

|

||||

|

||||

We have also defined an artificial class that can be added to the boundary of text region types or text lines. This key

|

||||

is called "artificial_class_on_boundary." If users want to apply this to certain text regions in the layout use case,

|

||||

We have also defined an artificial class that can be added to the boundary of text region types or text lines. This key

|

||||

is called "artificial_class_on_boundary." If users want to apply this to certain text regions in the layout use case,

|

||||

the example JSON config file should look like this:

|

||||

|

||||

```yaml

|

||||

|

|

@ -167,13 +177,13 @@ the example JSON config file should look like this:

|

|||

}

|

||||

```

|

||||

|

||||

This implies that the artificial class label, denoted by 7, will be present on PNG files and will only be added to the

|

||||

This implies that the artificial class label, denoted by 7, will be present on PNG files and will only be added to the

|

||||

elements labeled as "paragraph," "header," "heading," and "marginalia."

|

||||

|

||||

For "textline", "word", and "glyph", the artificial class on the boundaries will be activated only if the

|

||||

"artificial_class_label" key is specified in the config file. Its value should be set as 2 since these elements

|

||||

represent binary cases. For example, if the background and textline are denoted as 0 and 1 respectively, then the

|

||||

artificial class should be assigned the value 2. The example JSON config file should look like this for "textline" use

|

||||

For "textline", "word", and "glyph", the artificial class on the boundaries will be activated only if the

|

||||

"artificial_class_label" key is specified in the config file. Its value should be set as 2 since these elements

|

||||

represent binary cases. For example, if the background and textline are denoted as 0 and 1 respectively, then the

|

||||

artificial class should be assigned the value 2. The example JSON config file should look like this for "textline" use

|

||||

case:

|

||||

|

||||

```yaml

|

||||

|

|

@ -183,14 +193,14 @@ case:

|

|||

}

|

||||

```

|

||||

|

||||

If the coordinates of "PrintSpace" or "Border" are present in the page XML ground truth files, and the user wishes to

|

||||

crop only the print space area, this can be achieved by activating the "-ps" argument. However, it should be noted that

|

||||

in this scenario, since cropping will be applied to the label files, the directory of the original images must be

|

||||

provided to ensure that they are cropped in sync with the labels. This ensures that the correct images and labels

|

||||

If the coordinates of "PrintSpace" or "Border" are present in the page XML ground truth files, and the user wishes to

|

||||

crop only the print space area, this can be achieved by activating the "-ps" argument. However, it should be noted that

|

||||

in this scenario, since cropping will be applied to the label files, the directory of the original images must be

|

||||

provided to ensure that they are cropped in sync with the labels. This ensures that the correct images and labels

|

||||

required for training are obtained. The command should resemble the following:

|

||||

|

||||

```sh

|

||||

python generate_gt_for_training.py pagexml2label \

|

||||

eynollah-training generate-gt pagexml2label \

|

||||

-dx "dir of GT xml files" \

|

||||

-do "dir where output label png files will be written" \

|

||||

-cfg "custom config json file" \

|

||||

|

|

@ -204,11 +214,11 @@ python generate_gt_for_training.py pagexml2label \

|

|||

|

||||

### classification

|

||||

|

||||

For the classification use case, we haven't provided a ground truth generator, as it's unnecessary. For classification,

|

||||

all we require is a training directory with subdirectories, each containing images of its respective classes. We need

|

||||

separate directories for training and evaluation, and the class names (subdirectories) must be consistent across both

|

||||

directories. Additionally, the class names should be specified in the config JSON file, as shown in the following

|

||||

example. If, for instance, we aim to classify "apple" and "orange," with a total of 2 classes, the

|

||||

For the classification use case, we haven't provided a ground truth generator, as it's unnecessary. For classification,

|

||||

all we require is a training directory with subdirectories, each containing images of its respective classes. We need

|

||||

separate directories for training and evaluation, and the class names (subdirectories) must be consistent across both

|

||||

directories. Additionally, the class names should be specified in the config JSON file, as shown in the following

|

||||

example. If, for instance, we aim to classify "apple" and "orange," with a total of 2 classes, the

|

||||

"classification_classes_name" key in the config file should appear as follows:

|

||||

|

||||

```yaml

|

||||

|

|

@ -233,7 +243,7 @@ example. If, for instance, we aim to classify "apple" and "orange," with a total

|

|||

|

||||

The "dir_train" should be like this:

|

||||

|

||||

```

|

||||

```

|

||||

.

|

||||

└── train # train directory

|

||||

├── apple # directory of images for apple class

|

||||

|

|

@ -242,7 +252,7 @@ The "dir_train" should be like this:

|

|||

|

||||

And the "dir_eval" the same structure as train directory:

|

||||

|

||||

```

|

||||

```

|

||||

.

|

||||

└── eval # evaluation directory

|

||||

├── apple # directory of images for apple class

|

||||

|

|

@ -253,12 +263,12 @@ And the "dir_eval" the same structure as train directory:

|

|||

The classification model can be trained using the following command line:

|

||||

|

||||

```sh

|

||||

python train.py with config_classification.json

|

||||

eynollah-training train with config_classification.json

|

||||

```

|

||||

|

||||

As evident in the example JSON file above, for classification, we utilize a "f1_threshold_classification" parameter.

|

||||

This parameter is employed to gather all models with an evaluation f1 score surpassing this threshold. Subsequently,

|

||||

an ensemble of these model weights is executed, and a model is saved in the output directory as "model_ens_avg".

|

||||

As evident in the example JSON file above, for classification, we utilize a "f1_threshold_classification" parameter.

|

||||

This parameter is employed to gather all models with an evaluation f1 score surpassing this threshold. Subsequently,

|

||||

an ensemble of these model weights is executed, and a model is saved in the output directory as "model_ens_avg".

|

||||

Additionally, the weight of the best model based on the evaluation f1 score is saved as "model_best".

|

||||

|

||||

### reading order

|

||||

|

|

@ -306,58 +316,63 @@ The classification model can be trained like the classification case command lin

|

|||

|

||||

#### Parameter configuration for segmentation or enhancement usecases

|

||||

|

||||

The following parameter configuration can be applied to all segmentation use cases and enhancements. The augmentation,

|

||||

its sub-parameters, and continued training are defined only for segmentation use cases and enhancements, not for

|

||||

The following parameter configuration can be applied to all segmentation use cases and enhancements. The augmentation,

|

||||

its sub-parameters, and continued training are defined only for segmentation use cases and enhancements, not for

|

||||

classification and machine-based reading order, as you can see in their example config files.

|

||||

|

||||

* backbone_type: For segmentation tasks (such as text line, binarization, and layout detection) and enhancement, we

|

||||

* offer two backbone options: a "nontransformer" and a "transformer" backbone. For the "transformer" backbone, we first

|

||||

* apply a CNN followed by a transformer. In contrast, the "nontransformer" backbone utilizes only a CNN ResNet-50.

|

||||

* task : The task parameter can have values such as "segmentation", "enhancement", "classification", and "reading_order".

|

||||

* patches: If you want to break input images into smaller patches (input size of the model) you need to set this

|

||||

* parameter to ``true``. In the case that the model should see the image once, like page extraction, patches should be

|

||||

* set to ``false``.

|

||||

* n_batch: Number of batches at each iteration.

|

||||

* n_classes: Number of classes. In the case of binary classification this should be 2. In the case of reading_order it

|

||||

* should set to 1. And for the case of layout detection just the unique number of classes should be given.

|

||||

* n_epochs: Number of epochs.

|

||||

* input_height: This indicates the height of model's input.

|

||||

* input_width: This indicates the width of model's input.

|

||||

* weight_decay: Weight decay of l2 regularization of model layers.

|

||||

* pretraining: Set to ``true`` to load pretrained weights of ResNet50 encoder. The downloaded weights should be saved

|

||||

* in a folder named "pretrained_model" in the same directory of "train.py" script.

|

||||

* augmentation: If you want to apply any kind of augmentation this parameter should first set to ``true``.

|

||||

* flip_aug: If ``true``, different types of filp will be applied on image. Type of flips is given with "flip_index" parameter.

|

||||

* blur_aug: If ``true``, different types of blurring will be applied on image. Type of blurrings is given with "blur_k" parameter.

|

||||

* scaling: If ``true``, scaling will be applied on image. Scale of scaling is given with "scales" parameter.

|

||||

* degrading: If ``true``, degrading will be applied to the image. The amount of degrading is defined with "degrade_scales" parameter.

|

||||

* brightening: If ``true``, brightening will be applied to the image. The amount of brightening is defined with "brightness" parameter.

|

||||

* rotation_not_90: If ``true``, rotation (not 90 degree) will be applied on image. Rotation angles are given with "thetha" parameter.

|

||||

* rotation: If ``true``, 90 degree rotation will be applied on image.

|

||||

* binarization: If ``true``,Otsu thresholding will be applied to augment the input data with binarized images.

|

||||

* scaling_bluring: If ``true``, combination of scaling and blurring will be applied on image.

|

||||

* scaling_binarization: If ``true``, combination of scaling and binarization will be applied on image.

|

||||

* scaling_flip: If ``true``, combination of scaling and flip will be applied on image.

|

||||

* flip_index: Type of flips.

|

||||

* blur_k: Type of blurrings.

|

||||

* scales: Scales of scaling.

|

||||

* brightness: The amount of brightenings.

|

||||

* thetha: Rotation angles.

|

||||

* degrade_scales: The amount of degradings.

|

||||

* continue_training: If ``true``, it means that you have already trained a model and you would like to continue the training. So it is needed to provide the dir of trained model with "dir_of_start_model" and index for naming the models. For example if you have already trained for 3 epochs then your last index is 2 and if you want to continue from model_1.h5, you can set ``index_start`` to 3 to start naming model with index 3.

|

||||

* weighted_loss: If ``true``, this means that you want to apply weighted categorical_crossentropy as loss fucntion. Be carefull if you set to ``true``the parameter "is_loss_soft_dice" should be ``false``

|

||||

* data_is_provided: If you have already provided the input data you can set this to ``true``. Be sure that the train and eval data are in "dir_output". Since when once we provide training data we resize and augment them and then we write them in sub-directories train and eval in "dir_output".

|

||||

* dir_train: This is the directory of "images" and "labels" (dir_train should include two subdirectories with names of images and labels ) for raw images and labels. Namely they are not prepared (not resized and not augmented) yet for training the model. When we run this tool these raw data will be transformed to suitable size needed for the model and they will be written in "dir_output" in train and eval directories. Each of train and eval include "images" and "labels" sub-directories.

|

||||

* index_start: Starting index for saved models in the case that "continue_training" is ``true``.

|

||||

* dir_of_start_model: Directory containing pretrained model to continue training the model in the case that "continue_training" is ``true``.

|

||||

* transformer_num_patches_xy: Number of patches for vision transformer in x and y direction respectively.

|

||||

* transformer_patchsize_x: Patch size of vision transformer patches in x direction.

|

||||

* transformer_patchsize_y: Patch size of vision transformer patches in y direction.

|

||||

* transformer_projection_dim: Transformer projection dimension. Default value is 64.

|

||||

* transformer_mlp_head_units: Transformer Multilayer Perceptron (MLP) head units. Default value is [128, 64].

|

||||

* transformer_layers: transformer layers. Default value is 8.

|

||||

* transformer_num_heads: Transformer number of heads. Default value is 4.

|

||||

* transformer_cnn_first: We have two types of vision transformers. In one type, a CNN is applied first, followed by a transformer. In the other type, this order is reversed. If transformer_cnn_first is true, it means the CNN will be applied before the transformer. Default value is true.

|

||||

* `backbone_type`: For segmentation tasks (such as text line, binarization, and layout detection) and enhancement, we

|

||||

offer two backbone options: a "nontransformer" and a "transformer" backbone. For the "transformer" backbone, we first

|

||||

apply a CNN followed by a transformer. In contrast, the "nontransformer" backbone utilizes only a CNN ResNet-50.

|

||||

* `task`: The task parameter can have values such as "segmentation", "enhancement", "classification", and "reading_order".

|

||||

* `patches`: If you want to break input images into smaller patches (input size of the model) you need to set this

|

||||

* parameter to `true`. In the case that the model should see the image once, like page extraction, patches should be

|

||||

set to ``false``.

|

||||

* `n_batch`: Number of batches at each iteration.

|

||||

* `n_classes`: Number of classes. In the case of binary classification this should be 2. In the case of reading_order it

|

||||

should set to 1. And for the case of layout detection just the unique number of classes should be given.

|

||||

* `n_epochs`: Number of epochs.

|

||||

* `input_height`: This indicates the height of model's input.

|

||||

* `input_width`: This indicates the width of model's input.

|

||||

* `weight_decay`: Weight decay of l2 regularization of model layers.

|

||||

* `pretraining`: Set to `true` to load pretrained weights of ResNet50 encoder. The downloaded weights should be saved

|

||||

in a folder named "pretrained_model" in the same directory of "train.py" script.

|

||||

* `augmentation`: If you want to apply any kind of augmentation this parameter should first set to `true`.

|

||||

* `flip_aug`: If `true`, different types of filp will be applied on image. Type of flips is given with "flip_index" parameter.

|

||||

* `blur_aug`: If `true`, different types of blurring will be applied on image. Type of blurrings is given with "blur_k" parameter.

|

||||

* `scaling`: If `true`, scaling will be applied on image. Scale of scaling is given with "scales" parameter.

|

||||

* `degrading`: If `true`, degrading will be applied to the image. The amount of degrading is defined with "degrade_scales" parameter.

|

||||

* `brightening`: If `true`, brightening will be applied to the image. The amount of brightening is defined with "brightness" parameter.

|

||||

* `rotation_not_90`: If `true`, rotation (not 90 degree) will be applied on image. Rotation angles are given with "thetha" parameter.

|

||||

* `rotation`: If `true`, 90 degree rotation will be applied on image.

|

||||

* `binarization`: If `true`,Otsu thresholding will be applied to augment the input data with binarized images.

|

||||

* `scaling_bluring`: If `true`, combination of scaling and blurring will be applied on image.

|

||||

* `scaling_binarization`: If `true`, combination of scaling and binarization will be applied on image.

|

||||

* `scaling_flip`: If `true`, combination of scaling and flip will be applied on image.

|

||||

* `flip_index`: Type of flips.

|

||||

* `blur_k`: Type of blurrings.

|

||||

* `scales`: Scales of scaling.

|

||||

* `brightness`: The amount of brightenings.

|

||||

* `thetha`: Rotation angles.

|

||||

* `degrade_scales`: The amount of degradings.

|

||||

* `continue_training`: If `true`, it means that you have already trained a model and you would like to continue the

|

||||

training. So it is needed to providethe dir of trained model with "dir_of_start_model" and index for naming

|

||||

themodels. For example if you have already trained for 3 epochs then your lastindex is 2 and if you want to continue

|

||||

from model_1.h5, you can set `index_start` to 3 to start naming model with index 3.

|

||||

* `weighted_loss`: If `true`, this means that you want to apply weighted categorical_crossentropy as loss fucntion. Be carefull if you set to `true`the parameter "is_loss_soft_dice" should be ``false``

|

||||

* `data_is_provided`: If you have already provided the input data you can set this to `true`. Be sure that the train

|

||||

and eval data are in"dir_output".Since when once we provide training data we resize and augmentthem and then wewrite

|

||||

them in sub-directories train and eval in "dir_output".

|

||||

* `dir_train`: This is the directory of "images" and "labels" (dir_train should include two subdirectories with names of images and labels ) for raw images and labels. Namely they are not prepared (not resized and not augmented) yet for training the model. When we run this tool these raw data will be transformed to suitable size needed for the model and they will be written in "dir_output" in train and eval directories. Each of train and eval include "images" and "labels" sub-directories.

|

||||

* `index_start`: Starting index for saved models in the case that "continue_training" is `true`.

|

||||

* `dir_of_start_model`: Directory containing pretrained model to continue training the model in the case that "continue_training" is `true`.

|

||||

* `transformer_num_patches_xy`: Number of patches for vision transformer in x and y direction respectively.

|

||||

* `transformer_patchsize_x`: Patch size of vision transformer patches in x direction.

|

||||

* `transformer_patchsize_y`: Patch size of vision transformer patches in y direction.

|

||||

* `transformer_projection_dim`: Transformer projection dimension. Default value is 64.

|

||||

* `transformer_mlp_head_units`: Transformer Multilayer Perceptron (MLP) head units. Default value is [128, 64].

|

||||

* `transformer_layers`: transformer layers. Default value is 8.

|

||||

* `transformer_num_heads`: Transformer number of heads. Default value is 4.

|

||||

* `transformer_cnn_first`: We have two types of vision transformers. In one type, a CNN is applied first, followed by a transformer. In the other type, this order is reversed. If transformer_cnn_first is true, it means the CNN will be applied before the transformer. Default value is true.

|

||||

|

||||

In the case of segmentation and enhancement the train and evaluation directory should be as following.

|

||||

|

||||

|

|

@ -379,13 +394,39 @@ And the "dir_eval" the same structure as train directory:

|

|||

└── labels # directory of labels

|

||||

```

|

||||

|

||||

After configuring the JSON file for segmentation or enhancement, training can be initiated by running the following

|

||||

After configuring the JSON file for segmentation or enhancement, training can be initiated by running the following

|

||||

command, similar to the process for classification and reading order:

|

||||

|

||||

`python train.py with config_classification.json`

|

||||

```

|

||||

eynollah-training train with config_classification.json`

|

||||

```

|

||||

|

||||

#### Binarization

|

||||

|

||||

### Ground truth format

|

||||

|

||||

Lables for each pixel are identified by a number. So if you have a

|

||||

binary case, ``n_classes`` should be set to ``2`` and labels should

|

||||

be ``0`` and ``1`` for each class and pixel.

|

||||

|

||||

In the case of multiclass, just set ``n_classes`` to the number of classes

|

||||

you have and the try to produce the labels by pixels set from ``0 , 1 ,2 .., n_classes-1``.

|

||||

The labels format should be png.

|

||||

Our lables are 3 channel png images but only information of first channel is used.

|

||||

If you have an image label with height and width of 10, for a binary case the first channel should look like this:

|

||||

|

||||

Label: [ [1, 0, 0, 1, 1, 0, 0, 1, 0, 0],

|

||||

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0],

|

||||

...,

|

||||

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0],

|

||||

[0, 0, 0, 0, 0, 0, 0, 0, 0, 0] ]

|

||||

|

||||

This means that you have an image by `10*10*3` and `pixel[0,0]` belongs

|

||||

to class `1` and `pixel[0,1]` belongs to class `0`.

|

||||

|

||||

A small sample of training data for binarization experiment can be found here, [Training data sample](https://qurator-data.de/~vahid.rezanezhad/binarization_training_data_sample/), which contains images and lables folders.

|

||||

|

||||

|

||||

An example config json file for binarization can be like this:

|

||||

|

||||

```yaml

|

||||

|

|

@ -429,7 +470,7 @@ An example config json file for binarization can be like this:

|

|||

"thetha" : [10, -10],

|

||||

"continue_training": false,

|

||||

"index_start" : 0,

|

||||

"dir_of_start_model" : " ",

|

||||

"dir_of_start_model" : " ",

|

||||

"weighted_loss": false,

|

||||

"is_loss_soft_dice": false,

|

||||

"data_is_provided": false,

|

||||

|

|

@ -474,7 +515,7 @@ An example config json file for binarization can be like this:

|

|||

"thetha" : [10, -10],

|

||||

"continue_training": false,

|

||||

"index_start" : 0,

|

||||

"dir_of_start_model" : " ",

|

||||

"dir_of_start_model" : " ",

|

||||

"weighted_loss": false,

|

||||

"is_loss_soft_dice": false,

|

||||

"data_is_provided": false,

|

||||

|

|

@ -519,7 +560,7 @@ An example config json file for binarization can be like this:

|

|||

"thetha" : [10, -10],

|

||||

"continue_training": false,

|

||||

"index_start" : 0,

|

||||

"dir_of_start_model" : " ",

|

||||

"dir_of_start_model" : " ",

|

||||

"weighted_loss": false,

|

||||

"is_loss_soft_dice": false,

|

||||

"data_is_provided": false,

|

||||

|

|

@ -529,7 +570,7 @@ An example config json file for binarization can be like this:

|

|||

}

|

||||

```

|

||||

|

||||

It's important to mention that the value of n_classes for enhancement should be 3, as the model's output is a 3-channel

|

||||

It's important to mention that the value of n_classes for enhancement should be 3, as the model's output is a 3-channel

|

||||

image.

|

||||

|

||||

#### Page extraction

|

||||

|

|

@ -567,7 +608,7 @@ image.

|

|||

"thetha" : [10, -10],

|

||||

"continue_training": false,

|

||||

"index_start" : 0,

|

||||

"dir_of_start_model" : " ",

|

||||

"dir_of_start_model" : " ",

|

||||

"weighted_loss": false,

|

||||

"is_loss_soft_dice": false,

|

||||

"data_is_provided": false,

|

||||

|

|

@ -577,8 +618,8 @@ image.

|

|||

}

|

||||

```

|

||||

|

||||

For page segmentation (or printspace or border segmentation), the model needs to view the input image in its entirety,

|

||||

hence the patches parameter should be set to false.

|

||||

For page segmentation (or print space or border segmentation), the model needs to view the input image in its

|

||||

entirety,hence the patches parameter should be set to false.

|

||||

|

||||

#### layout segmentation

|

||||

|

||||

|

|

@ -625,7 +666,7 @@ An example config json file for layout segmentation with 5 classes (including ba

|

|||

"thetha" : [10, -10],

|

||||

"continue_training": false,

|

||||

"index_start" : 0,

|

||||

"dir_of_start_model" : " ",

|

||||

"dir_of_start_model" : " ",

|

||||

"weighted_loss": false,

|

||||

"is_loss_soft_dice": false,

|

||||

"data_is_provided": false,

|

||||

|

|

@ -638,35 +679,34 @@ An example config json file for layout segmentation with 5 classes (including ba

|

|||

|

||||

### classification

|

||||

|

||||

For conducting inference with a trained model, you simply need to execute the following command line, specifying the

|

||||

For conducting inference with a trained model, you simply need to execute the following command line, specifying the

|

||||

directory of the model and the image on which to perform inference:

|

||||

|

||||

```sh

|

||||

python inference.py -m "model dir" -i "image"

|

||||

eynollah-training inference -m "model dir" -i "image"

|

||||

```

|

||||

|

||||

This will straightforwardly return the class of the image.

|

||||

|

||||

### machine based reading order

|

||||

|

||||

To infer the reading order using a reading order model, we need a page XML file containing layout information but

|

||||

without the reading order. We simply need to provide the model directory, the XML file, and the output directory.

|

||||

The new XML file with the added reading order will be written to the output directory with the same name.

|

||||

We need to run:

|

||||

To infer the reading order using a reading order model, we need a page XML file containing layout information but

|

||||

without the reading order. We simply need to provide the model directory, the XML file, and the output directory. The

|

||||

new XML file with the added reading order will be written to the output directory with the same name. We need to run:

|

||||

|

||||

```sh

|

||||

python inference.py \

|

||||

eynollah-training inference \

|

||||

-m "model dir" \

|

||||

-xml "page xml file" \

|

||||

-o "output dir to write new xml with reading order"

|

||||

```

|

||||

|

||||

### Segmentation (Textline, Binarization, Page extraction and layout) and enhancement

|

||||

For conducting inference with a trained model for segmentation and enhancement you need to run the following command

|

||||

line:

|

||||

|

||||

For conducting inference with a trained model for segmentation and enhancement you need to run the following command line:

|

||||

|

||||

```sh

|

||||

python inference.py \

|

||||

eynollah-training inference \

|

||||

-m "model dir" \

|

||||

-i "image" \

|

||||

-p \

|

||||

|

|

@ -675,5 +715,5 @@ python inference.py \

|

|||

|

||||

Note that in the case of page extraction the -p flag is not needed.

|

||||

|

||||

For segmentation or binarization tasks, if a ground truth (GT) label is available, the IoU evaluation metric can be

|

||||

For segmentation or binarization tasks, if a ground truth (GT) label is available, the IoU evaluation metric can be

|

||||

calculated for the output. To do this, you need to provide the GT label using the argument -gt.

|

||||

|

|

|

|||

|

|

@ -13,7 +13,11 @@ license.file = "LICENSE"

|

|||

requires-python = ">=3.8"

|

||||

keywords = ["document layout analysis", "image segmentation"]

|

||||

|

||||

dynamic = ["dependencies", "version"]

|

||||

dynamic = [

|

||||

"dependencies",

|

||||

"optional-dependencies",

|

||||

"version"

|

||||

]

|

||||

|

||||

classifiers = [

|

||||

"Development Status :: 4 - Beta",

|

||||

|

|

@ -25,12 +29,9 @@ classifiers = [

|

|||

"Topic :: Scientific/Engineering :: Image Processing",

|

||||

]

|

||||

|

||||

[project.optional-dependencies]

|

||||

OCR = ["torch <= 2.0.1", "transformers <= 4.30.2"]

|

||||

plotting = ["matplotlib"]

|

||||

|

||||

[project.scripts]

|

||||

eynollah = "eynollah.cli:main"

|

||||

eynollah-training = "eynollah.training.cli:main"

|

||||

ocrd-eynollah-segment = "eynollah.ocrd_cli:main"

|

||||

ocrd-sbb-binarize = "eynollah.ocrd_cli_binarization:main"

|

||||

|

||||

|

|

@ -41,6 +42,9 @@ Repository = "https://github.com/qurator-spk/eynollah.git"

|

|||

[tool.setuptools.dynamic]

|

||||

dependencies = {file = ["requirements.txt"]}

|

||||

optional-dependencies.test = {file = ["requirements-test.txt"]}

|

||||

optional-dependencies.OCR = {file = ["requirements-ocr.txt"]}

|

||||

optional-dependencies.plotting = {file = ["requirements-plotting.txt"]}

|

||||

optional-dependencies.training = {file = ["requirements-training.txt"]}

|

||||

|

||||

[tool.setuptools.packages.find]

|

||||

where = ["src"]

|

||||

|

|

@ -54,6 +58,8 @@ source = ["eynollah"]

|

|||

|

||||

[tool.ruff]

|

||||

line-length = 120

|

||||

# TODO: Reenable and fix after release v0.6.0

|

||||

exclude = ['src/eynollah/training']

|

||||

|

||||

[tool.ruff.lint]

|

||||

ignore = [

|

||||

|

|

@ -69,3 +75,4 @@ ignore = [

|

|||

|

||||

[tool.ruff.format]

|

||||

quote-style = "preserve"

|

||||

|

||||

|

|

|

|||

2

requirements-ocr.txt

Normal file

2

requirements-ocr.txt

Normal file

|

|

@ -0,0 +1,2 @@

|

|||

torch <= 2.0.1

|

||||

transformers <= 4.30.2

|

||||

1

requirements-plotting.txt

Normal file

1

requirements-plotting.txt

Normal file

|

|

@ -0,0 +1 @@

|

|||

matplotlib

|

||||

1

requirements-training.txt

Symbolic link

1

requirements-training.txt

Symbolic link

|

|

@ -0,0 +1 @@

|

|||

train/requirements.txt

|

||||

|

|

@ -385,6 +385,8 @@ class Eynollah:

|

|||

self.logger.warning("overriding default model %s version %s to %s", key, self.model_versions[key], val)

|

||||

self.model_versions[key] = val

|

||||

# load models, depending on modes

|

||||

# (note: loading too many models can cause OOM on GPU/CUDA,

|

||||

# thus, we try set up the minimal configuration for the current mode)

|

||||

loadable = [

|

||||

"col_classifier",

|

||||

"binarization",

|

||||

|

|

@ -400,8 +402,8 @@ class Eynollah:

|

|||

# if self.allow_enhancement:?

|

||||

loadable.append("enhancement")

|

||||

if self.full_layout:

|

||||

loadable.extend(["region_fl_np",

|

||||

"region_fl"])

|

||||

loadable.append("region_fl_np")

|

||||

#loadable.append("region_fl")

|

||||

if self.reading_order_machine_based:

|

||||

loadable.append("reading_order")

|

||||

if self.tables:

|

||||

|

|

@ -4415,9 +4417,9 @@ class Eynollah:

|

|||

textline_mask_tot_ea_org[img_revised_tab==drop_label_in_full_layout] = 0

|

||||

|

||||

|

||||

text_only = ((img_revised_tab[:, :] == 1)) * 1

|

||||

text_only = (img_revised_tab[:, :] == 1) * 1

|

||||

if np.abs(slope_deskew) >= SLOPE_THRESHOLD:

|

||||

text_only_d = ((text_regions_p_1_n[:, :] == 1)) * 1

|

||||

text_only_d = (text_regions_p_1_n[:, :] == 1) * 1

|

||||

|

||||

#print("text region early 2 in %.1fs", time.time() - t0)

|

||||

###min_con_area = 0.000005

|

||||

|

|

@ -5284,7 +5286,7 @@ class Eynollah_ocr:

|

|||

##unicode_textpage.text = tot_page_text

|

||||

|

||||

ET.register_namespace("",name_space)

|

||||

tree1.write(out_file_ocr,xml_declaration=True,method='xml',encoding="utf8",default_namespace=None)

|

||||

tree1.write(out_file_ocr,xml_declaration=True,method='xml',encoding="utf-8",default_namespace=None)

|

||||

else:

|

||||

###max_len = 280#512#280#512

|

||||

###padding_token = 1500#299#1500#299

|

||||

|

|

@ -5833,5 +5835,5 @@ class Eynollah_ocr:

|

|||

##unicode_textpage.text = tot_page_text

|

||||

|

||||

ET.register_namespace("",name_space)

|

||||

tree1.write(out_file_ocr,xml_declaration=True,method='xml',encoding="utf8",default_namespace=None)

|

||||

tree1.write(out_file_ocr,xml_declaration=True,method='xml',encoding="utf-8",default_namespace=None)

|

||||

#print("Job done in %.1fs", time.time() - t0)

|

||||

|

|

|

|||

|

|

@ -805,7 +805,7 @@ class machine_based_reading_order_on_layout:

|

|||

tree_xml.write(os.path.join(dir_out, file_name+'.xml'),

|

||||

xml_declaration=True,

|

||||

method='xml',

|

||||

encoding="utf8",

|

||||

encoding="utf-8",

|

||||

default_namespace=None)

|

||||

|

||||

#sys.exit()

|

||||

|

|

|

|||

|

|

@ -1,5 +1,5 @@

|

|||

{

|

||||

"version": "0.6.0rc1",

|

||||

"version": "0.6.0",

|

||||

"git_url": "https://github.com/qurator-spk/eynollah",

|

||||

"dockerhub": "ocrd/eynollah",

|

||||

"tools": {

|

||||

|

|

|

|||

|

|

@ -12,7 +12,7 @@ from .utils import crop_image_inside_box

|

|||

from .utils.rotate import rotate_image_different

|

||||

from .utils.resize import resize_image

|

||||

|

||||

class EynollahPlotter():

|

||||

class EynollahPlotter:

|

||||

"""

|

||||

Class collecting all the plotting and image writing methods

|

||||

"""

|

||||

|

|

|

|||

0

src/eynollah/training/__init__.py

Normal file

0

src/eynollah/training/__init__.py

Normal file

|

|

@ -0,0 +1,24 @@

|

|||

import click

|

||||

import tensorflow as tf

|

||||

|

||||

from .models import resnet50_unet

|

||||

|

||||

|

||||

def configuration():

|

||||

gpu_options = tf.compat.v1.GPUOptions(allow_growth=True)

|

||||