# Eynollah

> Document Layout Analysis with Deep Learning and Heuristics

[PyPI](https://pypi.org/project/eynollah/) · [Tests](https://github.com/qurator-spk/eynollah/actions/workflows/test-eynollah.yml) · [Docker build](https://github.com/qurator-spk/eynollah/actions/workflows/build-docker.yml) · [License: Apache 2.0](https://opensource.org/license/apache-2-0/) · [DOI](https://doi.org/10.1145/3604951.3605513)

## Features

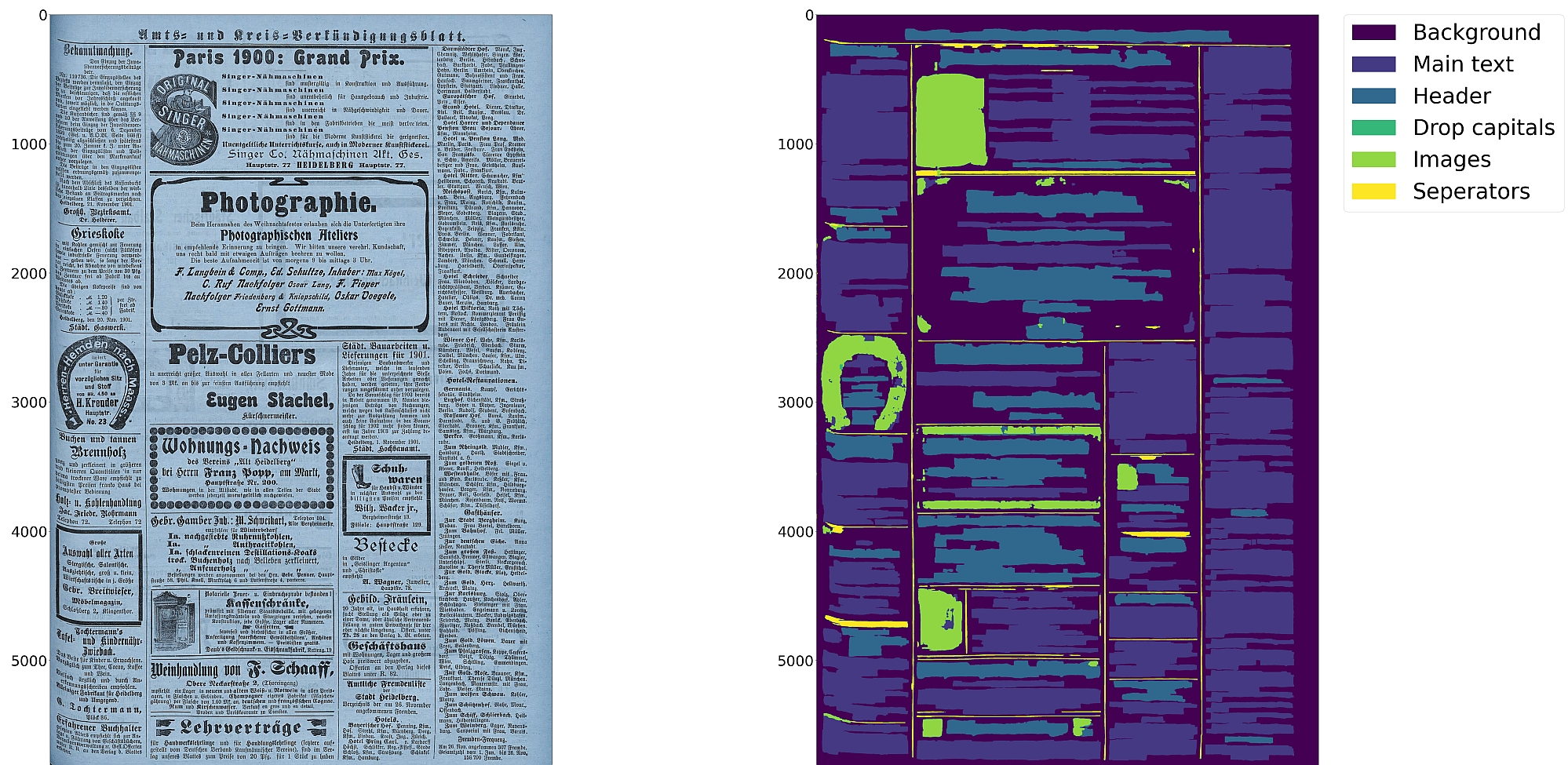

* Support for up to 10 segmentation classes:
  * background, [page border](https://ocr-d.de/en/gt-guidelines/trans/lyRand.html), [text region](https://ocr-d.de/en/gt-guidelines/trans/lytextregion.html#textregionen__textregion_), [text line](https://ocr-d.de/en/gt-guidelines/pagexml/pagecontent_xsd_Complex_Type_pc_TextLineType.html), [header](https://ocr-d.de/en/gt-guidelines/trans/lyUeberschrift.html), [image](https://ocr-d.de/en/gt-guidelines/trans/lyBildbereiche.html), [separator](https://ocr-d.de/en/gt-guidelines/trans/lySeparatoren.html), [marginalia](https://ocr-d.de/en/gt-guidelines/trans/lyMarginalie.html), [initial](https://ocr-d.de/en/gt-guidelines/trans/lyInitiale.html), [table](https://ocr-d.de/en/gt-guidelines/trans/lyTabellen.html)
* Support for various image optimization operations:
  * cropping (border detection), binarization, deskewing, dewarping, scaling, enhancing, resizing
* Text line segmentation into bounding boxes or polygons (contours), including curved lines and vertical text
* Detection of reading order (left-to-right or right-to-left)
* Output in [PAGE-XML](https://github.com/PRImA-Research-Lab/PAGE-XML)
* [OCR-D](https://github.com/qurator-spk/eynollah#use-as-ocr-d-processor) interface

:warning: Development is currently focused on achieving the best possible quality of results for a wide variety of historical documents, and therefore processing can be very slow. We aim to improve this, but contributions are welcome.

## Installation

Python versions `3.8-3.11` with TensorFlow `<2.13` are currently supported on Linux.

For (limited) GPU support, the CUDA toolkit needs to be installed.

You can either install from PyPI

```
pip install eynollah
```

or clone the repository, enter it and install (editable) with

```
git clone git@github.com:qurator-spk/eynollah.git
cd eynollah; pip install -e .
```

Alternatively, you can run `make install` or `make install-dev` for editable installation.

## Models

Pretrained models can be downloaded from [qurator-data.de](https://qurator-data.de/eynollah/) or [Hugging Face](https://huggingface.co/SBB?search_models=eynollah).

For documentation on methods and models, have a look at [`models.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/models.md).

## Train

If you want to train your own model with Eynollah, have a look at [`train.md`](https://github.com/qurator-spk/eynollah/tree/main/docs/train.md).

## Usage

Eynollah supports four use cases: layout analysis (segmentation), binarization, text recognition (OCR), and (trainable) reading order detection.

### Layout Analysis

The layout analysis module is responsible for detecting layouts, identifying text lines, and determining reading order using either heuristic methods or a machine-based reading order detection model.

Note that there are currently two supported ways for reading order detection: either as part of layout analysis based on image input, or, currently under development, for existing layout analysis results given as PAGE-XML input.

The command-line interface for layout analysis can be called like this:

```sh
eynollah layout \
  -i <single image file> | -di <directory containing image files> \
  -o <output directory> \
  -m <directory containing model files> \
  [OPTIONS]
```

The following options can be used to further configure the processing:

| option            | description                                                                       |
|-------------------|:----------------------------------------------------------------------------------|
| `-fl`             | full layout analysis including all steps and segmentation classes                 |
| `-light`          | lighter and faster but simpler method for main region detection and deskewing     |
| `-tll`            | use the light text line detection; should be passed together with `-light`        |
| `-tab`            | apply table detection                                                             |
| `-ae`             | apply enhancement (the resulting image is saved to the output directory)          |
| `-as`             | apply scaling                                                                     |
| `-cl`             | apply contour detection for curved text lines instead of bounding boxes           |
| `-ib`             | apply binarization (the resulting image is saved to the output directory)         |
| `-ep`             | enable plotting (MUST always be used with `-sl`, `-sd`, `-sa`, `-si` or `-ae`)    |
| `-eoi`            | extract only images to output directory (other processing will not be done)       |
| `-ho`             | ignore headers for reading order detection                                        |
| `-si <directory>` | save image regions detected to this directory                                     |
| `-sd <directory>` | save deskewed image to this directory                                             |
| `-sl <directory>` | save layout prediction as plot to this directory                                  |
| `-sp <directory>` | save cropped page image to this directory                                         |
| `-sa <directory>` | save all (plot, enhanced/binary image, layout) to this directory                  |
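
The options can be combined in several ways. The following is a minimal sketch, not part of Eynollah itself: it wraps three typical invocations in shell functions. The function names (`layout_full`, `layout_light`, `layout_with_plots`) and the directory arguments are hypothetical; only the `eynollah layout` flags are taken from the table above.

```sh
# Hypothetical helpers, not shipped with Eynollah.
# Arguments: $1 = input image directory, $2 = output directory,
#            $3 = model directory (all placeholders for your own paths).

# Full layout analysis with curved text-line contours (-cl)
# and table detection (-tab).
layout_full() {
  eynollah layout -di "$1" -o "$2" -m "$3" -fl -cl -tab
}

# Lighter, faster variant: -light, combined with -tll as the table
# recommends for the light version.
layout_light() {
  eynollah layout -di "$1" -o "$2" -m "$3" -light -tll
}

# Default main-region detection that also writes layout plots.
# -ep must be combined with one of -sl, -sd, -sa, -si or -ae;
# here -sl writes the plots to the directory given as $4.
layout_with_plots() {
  eynollah layout -di "$1" -o "$2" -m "$3" -ep -sl "$4"
}
```

For example, `layout_full pages/ out/ models_eynollah/` would run full layout analysis on all images in `pages/`, assuming the models were downloaded to `models_eynollah/`.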

If no option is set, the tool performs layout detection of main regions (background, text, images, separators and marginals).

The best output quality is produced when RGB images are used as input rather than greyscale or binarized images.

### Binarization

The binarization module performs document image binarization using pretrained pixelwise segmentation models.

The command-line interface for binarization of a single image can be called like this:

```sh
eynollah binarization \
  -m <directory containing model files> \
  <single image file> \
  <output image>
```

and for all images in a directory like this:

```sh
eynollah binarization \
  -m <directory containing model files> \
  -di <directory containing image files> \
  -do <output directory>
```

### OCR

Under development.

### Machine-based reading order

Under development.

### Use as OCR-D processor

Eynollah ships with a command-line interface to be used as an [OCR-D](https://ocr-d.de) [processor](https://ocr-d.de/en/spec/cli), formally described in [`ocrd-tool.json`](https://github.com/qurator-spk/eynollah/tree/main/src/eynollah/ocrd-tool.json).

In this case, the source image file group with (preferably) RGB images should be used as input like this:

```sh
ocrd-eynollah-segment -I OCR-D-IMG -O OCR-D-SEG -P models 2022-04-05
```

If the input file group is PAGE-XML (from a previous OCR-D workflow step), Eynollah behaves as follows:

- existing regions are kept and ignored (i.e. in effect they might overlap segments from Eynollah results)
- existing annotations (and the respective `AlternativeImage`s) are partially _ignored_:
  - previous page frame detection (`cropped` images)
  - previous derotation (`deskewed` images)
  - previous thresholding (`binarized` images)
- if the page-level image nevertheless deviates from the original (`@imageFilename`)
  because some other preprocessing step such as `denoised` was in effect, then
  the output PAGE-XML will be based on that image as the new top-level `@imageFilename`

```sh
ocrd-eynollah-segment -I OCR-D-XYZ -O OCR-D-SEG -P models 2022-04-05
```

Still, in general, it makes more sense to add other workflow steps **after** Eynollah.
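
As a hedged sketch of such a workflow, the function below runs Eynollah through `ocrd process` (from OCR-D core) inside an OCR-D workspace, i.e. a directory containing `mets.xml`, and appends any further tasks passed as arguments. The helper name `eynollah_workflow` and the follow-up task in the usage comment are hypothetical; the sketch assumes that `ocrd process` takes one quoted string per task and expects processor names without the `ocrd-` prefix.

```sh
# Hypothetical helper, not shipped with Eynollah.
# Runs Eynollah segmentation first, then any further OCR-D tasks given
# as arguments (each task as one quoted string), in the current workspace.
eynollah_workflow() {
  ocrd process \
    "eynollah-segment -I OCR-D-IMG -O OCR-D-SEG -P models 2022-04-05" \
    "$@"
}

# Usage (the second task is a placeholder for whatever step comes next):
#   eynollah_workflow "<next-processor> -I OCR-D-SEG -O OCR-D-NEXT"
```

Keeping Eynollah as the first task reflects the advice above: later steps read from `OCR-D-SEG` instead of feeding preprocessed images into Eynollah.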

### Additional documentation

Please check the [wiki](https://github.com/qurator-spk/eynollah/wiki).

## How to cite

If you find this tool useful in your work, please consider citing our paper:

```bibtex
@inproceedings{hip23rezanezhad,
  title     = {Document Layout Analysis with Deep Learning and Heuristics},
  author    = {Rezanezhad, Vahid and Baierer, Konstantin and Gerber, Mike and Labusch, Kai and Neudecker, Clemens},
  booktitle = {Proceedings of the 7th International Workshop on Historical Document Imaging and Processing {HIP} 2023,
               San José, CA, USA, August 25-26, 2023},
  publisher = {Association for Computing Machinery},
  address   = {New York, NY, USA},
  year      = {2023},
  pages     = {73--78},
  url       = {https://doi.org/10.1145/3604951.3605513}
}
```